Enterprise teams usually arrive at software development consulting services when they're stuck between two deadlines. One is internal: modernize the platform, improve the customer experience, and make the app easier to change. The other is external: add AI capabilities before competitors turn them into table stakes.

That pressure creates a familiar mess. Legacy systems still run critical workflows. Product teams want faster release cycles. Compliance teams want tighter controls. Executives want to know which investments will move the business forward instead of producing another pilot that never reaches production.

Good consulting helps you sort that out before money gets burned in the wrong place. It gives you a way to decide what should be rebuilt, what should be integrated, what should be left alone for now, and where AI belongs in the stack. It also forces the harder conversations that many projects avoid: ownership, governance, architecture boundaries, and operating costs after launch.

The Modern Imperative for Software Development Consulting

Software development consulting services aren't just about bringing in extra developers anymore. In enterprise settings, they function more like a decision layer between business ambition and technical reality.

The market signals how central that role has become. The software consulting market was valued at USD 327.59 billion in 2025 and is projected to grow to USD 380.26 billion in 2026, while application development and modernization accounted for 37.72% of services in 2025, according to Mordor Intelligence's software consulting market analysis. That matters because enterprise buyers aren't looking for slide decks. They need practical modernization that ships.

Why the old consulting model falls short

The outdated model treated consulting as a front-end advisory exercise. A firm assessed the problem, delivered recommendations, and left the client to wrestle the roadmap into reality.

That doesn't work well when you're modernizing a commerce platform, rebuilding a claims workflow, or embedding AI into a customer support product. The hard part isn't only selecting a stack. It's making architecture, product, design, data, and operations move together without breaking the business.

A modern consulting partner should help with things like:

- Legacy decision-making: identifying whether a system needs refactoring, integration middleware, data migration, or replacement

- Delivery design: choosing the right team structure and governance model for the work

- AI operations: setting rules for prompts, model changes, logging, spending visibility, and safe access to internal systems

- Scale planning: ensuring the app can support future usage, new channels, and evolving business workflows

Modernization fails when teams treat AI like a feature request instead of an operating model change.

That shift is why administrative tooling now belongs inside the consulting conversation. If you're adding AI into desktop or mobile applications, your team needs control points, not just APIs. Prompt versioning, model logging, parameter controls, and spend visibility are becoming part of the delivery baseline.

Some organizations also need a parallel staffing answer while strategy is taking shape. If you're trying to accelerate hiring without slowing execution, one reference point is how to scale your development team with TekRecruiter while keeping consulting and delivery aligned.

Decoding the Core Benefits for Your Business

The strongest reason to buy software development consulting services isn't access to coders. It's access to better decisions.

Large organizations have already been pushing the market that way. Large enterprises accounted for over 63% of software consulting revenue in 2024, and BFSI generated over 21% of revenue, based on Precedence Research's software consulting market outlook. That tells you where demand is most intense: environments with regulated workflows, expensive mistakes, and little tolerance for architectural shortcuts.

Speed without organizational sprawl

Consulting helps when your internal team is capable but overloaded. Product leaders still need to prioritize customer-facing work. Platform teams still need to maintain production systems. Security and compliance still need to review changes.

An experienced consulting team removes friction in three places:

- Decision speed: architects and product leads don't spend months debating stack options or integration patterns.

- Execution focus: internal staff can keep core operations running instead of being pulled into every experimental initiative.

- Delivery acceleration: design, engineering, QA, and release planning can move in parallel when ownership is clear.

That doesn't mean "faster" at any cost. It means fewer stalled approvals, fewer vague requirements, and less confusion about what gets built first.

Risk reduction that starts before coding

Risk in enterprise software rarely starts with a bug. It starts with the wrong assumptions.

Consultants reduce that risk by pressure-testing scope, dependencies, interfaces, rollout plans, and non-functional requirements early. In regulated industries, that discipline matters as much as engineering quality. A fintech product can be feature-rich and still fail if auditability is weak. A healthcare workflow can be elegant and still create operational pain if integrations are brittle.

Practical rule: If a partner talks mostly about velocity and barely talks about governance, you're probably buying rework.

This is where architecture and operating model come together. The right firm won't just ask what you want to build. They'll ask who owns decisions, who approves releases, how data moves, and what happens when the first version meets real users.

For a closer look at how enterprise teams structure that work, this guide to enterprise software development services is a useful companion.

Strategic innovation without the hype

Most internal teams don't have the time to test every new framework, cloud pattern, or AI integration approach. They shouldn't have to.

A consulting partner brings pattern recognition from multiple products and industries. That helps you avoid expensive detours, especially when teams are tempted by shiny tools. Not every workflow needs a large language model. Not every legacy system needs to be replaced. Not every mobile app needs a full rebuild to support better UX.

What works is selective modernization. Solve the bottleneck that matters, then build an operating model your team can sustain.

Choosing Your Engagement Model: A Critical Decision

Many software projects fail for organizational reasons, not technical ones. Weak requirements, poor stakeholder alignment, and fuzzy ownership create more damage than most framework choices ever will. That's why the engagement model matters so much, as Diceus notes in its discussion of consulting and delivery bottlenecks.

A buyer who chooses the wrong model usually feels the pain in one of two ways. Either the consultants wait around for decisions the client never organized, or the client discovers too late that nobody owns the outcome.

The three models most enterprises consider

Staff augmentation works when your internal team already has product direction, technical leadership, and delivery management in place. You add specialized people into an existing machine.

Managed projects fit when you need a partner to own a defined scope with clear deliverables, budget boundaries, and delivery accountability.

Dedicated product teams make sense when the software is strategic, evolving, and likely to need ongoing iteration across product, design, engineering, and QA.

Here's the simplest side-by-side view.

| Attribute | Staff Augmentation | Managed Projects | Dedicated Product Team |
|---|---|---|---|
| Primary purpose | Fill skill or capacity gaps | Deliver a defined initiative | Own and evolve a product over time |
| Client involvement | High | Medium to high at key review points | Shared and ongoing |
| Control over day-to-day work | Mostly client-side | Mostly partner-side within agreed scope | Joint ownership |
| Best for | Teams with strong internal leadership | Projects with a clear start and finish | Products that need continuous improvement |
| Speed to start | Usually fast if role definitions are clear | Moderate because scope and governance must be set | Moderate because team shape and roadmap need alignment |
| Main risk | Added people, same old bottlenecks | Scope tension if requirements are weak | Drift if priorities and KPIs aren't actively managed |

What usually works and what usually doesn't

Staff augmentation works well when the client already knows how to run product delivery. It fails when leaders hope outside engineers will fix internal confusion. Extra hands don't solve broken prioritization.

Managed projects work well for platform rebuilds, mobile app launches, system integrations, and modernization efforts with clear outcomes. They struggle when the client can't commit to decisions or keeps major requirements fluid without adjusting scope and governance.

Dedicated product teams work best when the software is central to revenue, operations, or customer experience. They create continuity. They also demand executive discipline. If leadership can't provide a roadmap, escalation path, and clear product ownership, the team will stay busy without creating much impact.

Questions that clarify the right fit

Use these questions before you choose a model:

- Do we have strong internal product ownership? If not, augmentation probably won't save the project.

- Is the scope stable enough to define success? If yes, a managed project may be the cleanest path.

- Will this product need continuous releases after launch? If yes, a dedicated team is often more efficient than restarting with new vendors every quarter.

- Where is the actual bottleneck? Engineering capacity, decision-making, design, compliance review, or integration complexity?
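
One way to make those questions concrete is to write the answers down and run them through a simple heuristic. The sketch below is illustrative only: the field names, the order of the checks, and the returned labels are assumptions, not a formal selection method.

```python
from dataclasses import dataclass


@dataclass
class EngagementContext:
    """Answers to the four fit questions (field names are hypothetical)."""
    strong_product_ownership: bool
    stable_scope: bool
    continuous_releases_expected: bool
    bottleneck: str  # e.g. "engineering capacity", "decision-making"


def suggest_model(ctx: EngagementContext) -> str:
    """Return a starting point for discussion, not a final answer."""
    # Ongoing releases after launch favor a long-lived team.
    if ctx.continuous_releases_expected:
        return "dedicated product team"
    # A scope stable enough to define success suits a managed project.
    if ctx.stable_scope:
        return "managed project"
    # Augmentation only helps when internal leadership is already strong
    # and the real constraint is capacity.
    if ctx.strong_product_ownership and ctx.bottleneck == "engineering capacity":
        return "staff augmentation"
    # Otherwise the constraint is organizational, not a staffing question.
    return "resolve ownership and scope first"


print(suggest_model(EngagementContext(True, False, False, "engineering capacity")))
```

The last branch is the important one: when none of the conditions hold, the honest answer is that no engagement model will fix the problem yet.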

If you're weighing those trade-offs, this comparison of staff augmentation vs managed services is worth reviewing before procurement starts.

Choose the model that fixes your constraint. Don't choose the model that sounds most flexible in a pitch deck.

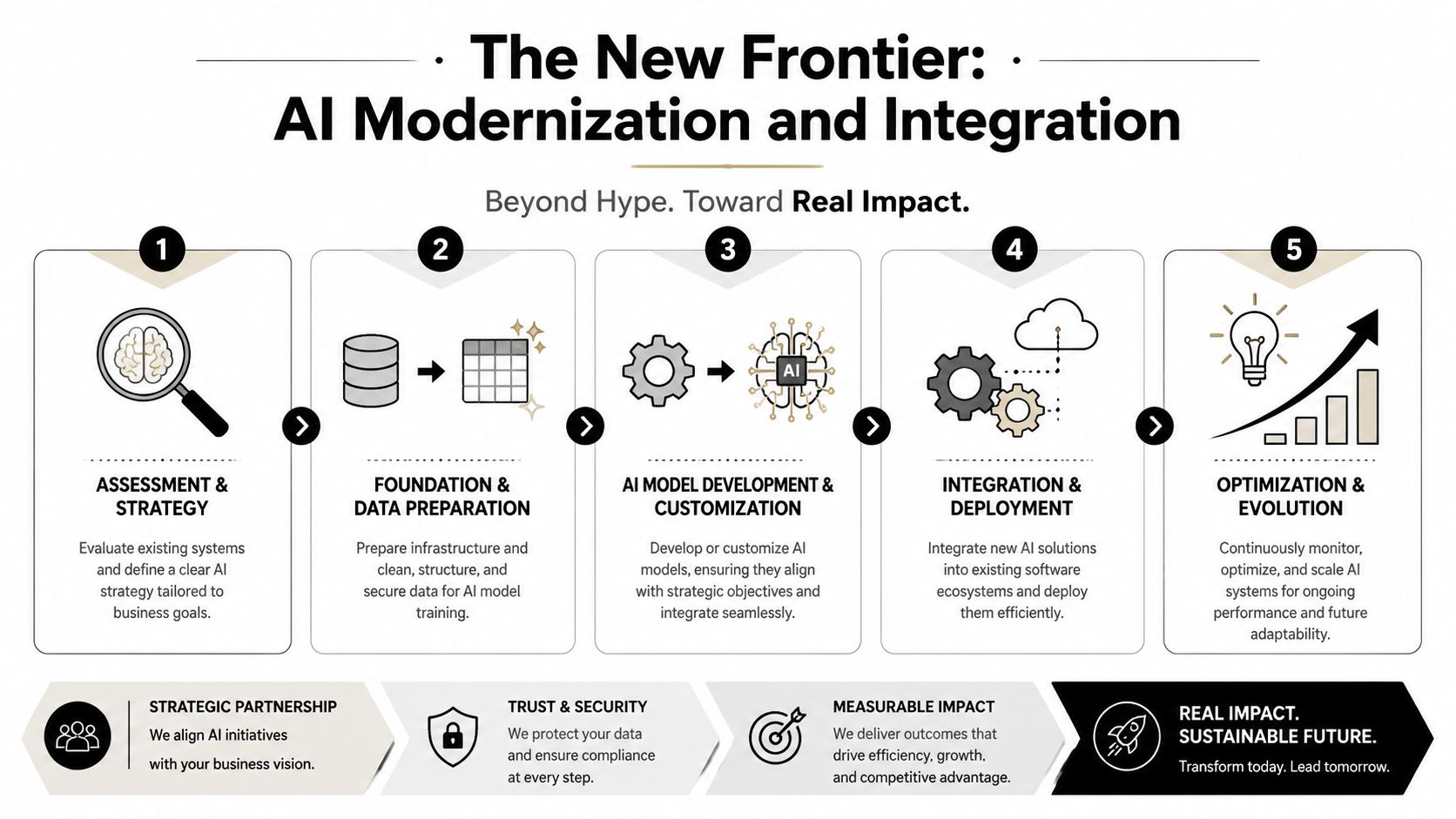

The New Frontier: AI Modernization and Integration

AI projects expose weak architecture faster than almost anything else. Teams can demo a chatbot in days, then spend months discovering that prompt behavior is inconsistent, internal data access is risky, logs are incomplete, and nobody can explain the monthly model bill.

That production gap is now common. McKinsey reported in 2025 that 78% of organizations use AI in at least one business function, yet many still struggle to move pilots into production reliably, a challenge discussed in 10Pearls' analysis of AI consulting and delivery needs.

Retrofitting AI into real enterprise systems

AI modernization usually isn't greenfield work. More often, you're adding intelligence to an existing app, workflow, or data environment.

That changes the consulting job. The team has to evaluate:

- Where AI belongs in the workflow: user-facing assistant, recommendation engine, anomaly detection, internal operations aid, or back-office automation

- How legacy systems will connect: direct API calls, middleware, event-driven integration, or staged data pipelines

- What data can be exposed safely: retrieval boundaries, role-based access, audit logging, and compliance constraints

- How humans stay in the loop: approval steps, escalation paths, override controls, and fallback behavior
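
Two of those checks, safe data exposure and human oversight, can be sketched in a few lines. This is a minimal illustration under stated assumptions: `Document`, `RetrievalGate`, `needs_human_review`, and the 0.8 threshold are hypothetical names and values, not part of any specific product or library.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    doc_id: str
    allowed_roles: frozenset  # roles permitted to see this document
    text: str


@dataclass
class RetrievalGate:
    """Filters documents by role and records every access decision."""
    audit_log: list = field(default_factory=list)

    def retrieve(self, user: str, role: str, docs: list) -> list:
        visible = [d for d in docs if role in d.allowed_roles]
        # Log both what was released and what was withheld for later review.
        self.audit_log.append({
            "user": user,
            "role": role,
            "released": [d.doc_id for d in visible],
            "withheld": [d.doc_id for d in docs if role not in d.allowed_roles],
        })
        return visible


def needs_human_review(confidence: float, threshold: float = 0.8) -> bool:
    """Route low-confidence AI output to a person instead of auto-applying it."""
    return confidence < threshold


docs = [
    Document("claims-faq", frozenset({"agent", "admin"}), "How to file a claim"),
    Document("payout-rules", frozenset({"admin"}), "Internal payout thresholds"),
]
gate = RetrievalGate()
released = gate.retrieve("user-17", "agent", docs)
print([d.doc_id for d in released])
```

The point of the design is that the model only ever sees what the gate releases, and every decision leaves a record that a compliance reviewer can inspect.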

The technical pattern matters, but so does the user experience. A weak AI integration often fails because it interrupts the workflow instead of improving it. A strong one feels like a useful extension of the product, not a separate science experiment.

For teams planning upgrades in parallel, this overview of an application modernization roadmap can help frame sequencing decisions.

The operational layer most firms skip

Enterprise buyers need to push harder here. Many vendors talk about model selection and implementation. Far fewer explain how the system will be run after launch.

A production-ready AI consulting engagement should address at least these operational controls:

- Prompt governance: Prompts change behavior. If they're scattered across code, spreadsheets, and chat threads, your team can't manage risk. They need version control, review discipline, and rollback paths.

- Parameter management: AI features often depend on retrieval settings, model options, internal data mappings, and workflow-specific variables. Those settings shouldn't live as mystery constants buried across environments.

- Cross-model logging: If your app calls more than one model or provider, you need consistent observability across all of them. Otherwise incident review becomes guesswork.

- Cost visibility: AI spending can drift when prompts expand, traffic rises, or teams add more use cases. Finance and product leaders need a practical way to monitor cumulative usage and spending.
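
To show what prompt governance can look like in practice, here is a minimal in-memory sketch of versioning with rollback. It is an assumption-laden illustration: `PromptVault` and its methods are hypothetical names, and a real system would persist versions and add review and access controls.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class PromptVersion:
    version: int
    text: str
    author: str
    created_at: datetime


@dataclass
class PromptVault:
    """In-memory store; a real system would persist versions durably."""
    _history: dict = field(default_factory=dict)  # name -> list of PromptVersion
    _live: dict = field(default_factory=dict)     # name -> live version number

    def publish(self, name: str, text: str, author: str) -> PromptVersion:
        versions = self._history.setdefault(name, [])
        pv = PromptVersion(len(versions) + 1, text, author,
                           datetime.now(timezone.utc))
        versions.append(pv)
        self._live[name] = pv.version
        return pv

    def rollback(self, name: str, version: int) -> PromptVersion:
        versions = self._history[name]
        if not 1 <= version <= len(versions):
            raise ValueError(f"unknown version {version} for prompt {name!r}")
        # Rolling back only re-points "live"; history is never rewritten.
        self._live[name] = version
        return versions[version - 1]

    def live(self, name: str) -> PromptVersion:
        return self._history[name][self._live[name] - 1]


vault = PromptVault()
vault.publish("support-triage", "Classify the ticket by urgency.", "ana")
vault.publish("support-triage", "Classify the ticket by urgency and product.", "ben")
vault.rollback("support-triage", 1)
print(vault.live("support-triage").version)
```

Keeping the full history while moving only a "live" pointer means a rollback is itself auditable: you can always see which version was active and who authored it.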

If a consulting plan stops at "we integrated the model," the hard part hasn't started yet.

For an external perspective on this challenge, see implementing AI solutions with TrainsetAI, especially if your team is trying to turn experiments into systems it can operate.

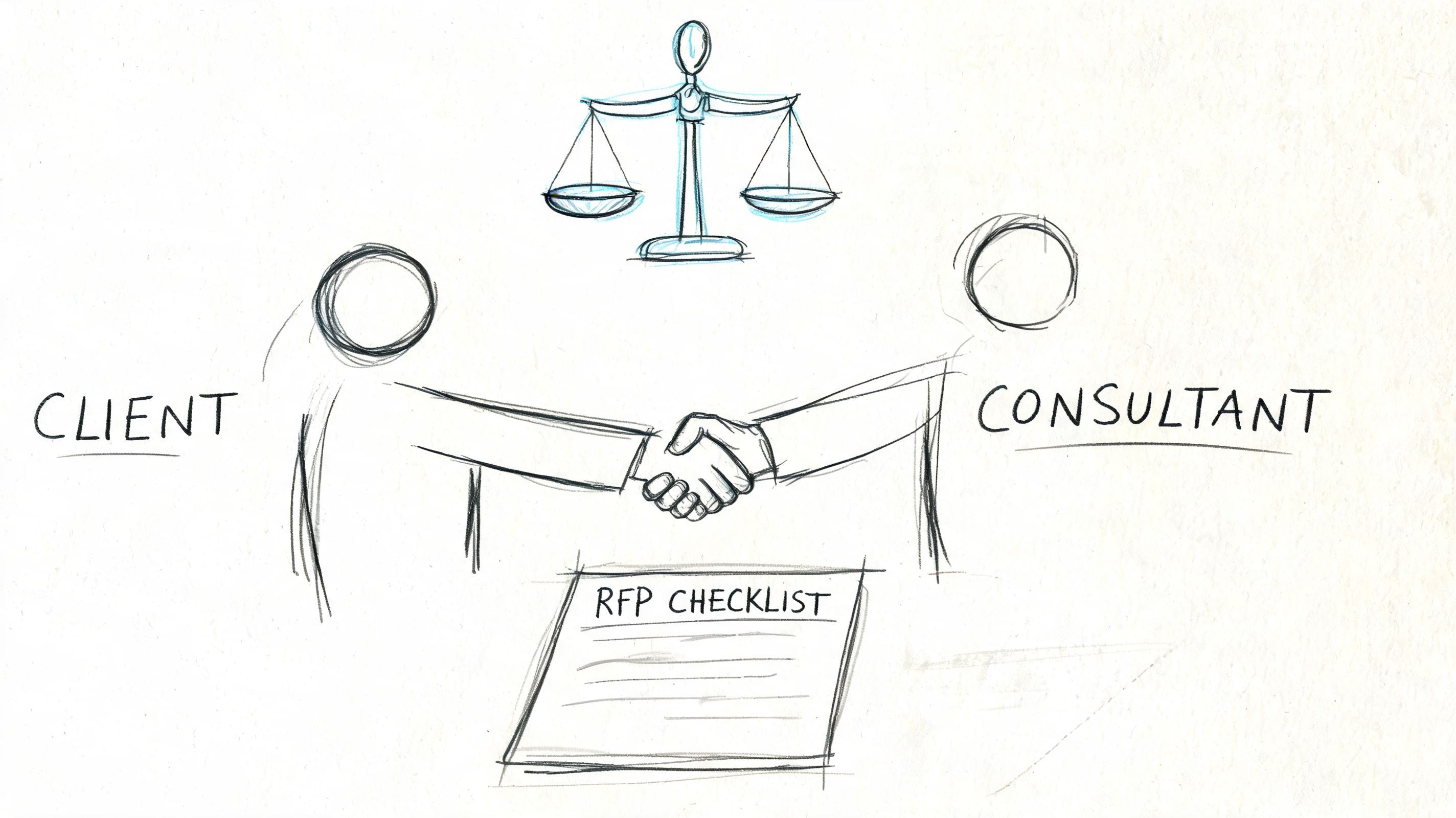

How to Select the Right Consulting Partner: An RFP Checklist

Procurement teams often write RFPs that ask about languages, rates, and project history, then wonder why responses all sound the same. The better approach is to screen for how a consulting partner thinks before they build.

A serious engagement starts with discovery. A high-value consulting process begins by mapping business goals to software architecture before coding, which reduces rework and helps align stack, integrations, and cloud decisions with long-term reliability and cost efficiency, as described by SDSol's consulting services guide.

What to ask before you shortlist anyone

A strong RFP should force the partner to show judgment, not just availability. Ask them how they'd approach your constraints, not only whether they support your stack.

Use this checklist.

- Request architecture thinking, not only credentials: Ask how the team would assess your current system, dependencies, and likely modernization path. A credible partner should discuss trade-offs, not jump straight to implementation.

- Test industry fluency: If you're in fintech, healthcare, ecommerce, or government, ask how they handle approval workflows, audit expectations, data sensitivity, and operational handoffs. Generic answers are a warning sign.

- Review delivery mechanics: You want to know how decisions are made, how risks are surfaced, how sprint reviews work, and how blockers get escalated. Strong communication beats impressive sales language.

- Ask for knowledge transfer details: Good partners leave your internal team stronger. Require documentation expectations, training plans, and post-launch transition support.

- Clarify support for AI governance: If AI is in scope, ask how they handle prompt changes, logging, internal data permissions, and usage monitoring after release.

Risk checks that belong in every enterprise review

Third-party risk review shouldn't happen at the end. It should happen while you're still evaluating fit.

A useful reference for stakeholders involved in security and procurement is this CIO's guide to TPRM, especially when the software partner will touch sensitive systems, internal data, or production infrastructure.

The best RFP responses don't promise everything. They identify constraints early and explain how they'd manage them.

A practical scoring lens

When enterprise buyers compare finalists, these criteria usually matter more than slide polish:

| Evaluation area | What good looks like |
|---|---|
| Discovery quality | They ask sharp questions about business goals, architecture, dependencies, and success metrics |
| Engineering depth | They can explain how they'll build and operate the solution, not just advise on it |
| Governance maturity | They define ownership, communication cadence, documentation, and escalation paths |
| Domain fit | They understand the operational realities of your industry |
| Post-launch readiness | They plan for support, handoff, maintenance, and iteration |
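
If your review panel wants a single comparable number per finalist, those criteria can feed a simple weighted score. The sketch below assumes a 1 to 5 rating per area, and the weights are hypothetical placeholders that you should replace with your own priorities.

```python
# Hypothetical weights; they sum to 1.0 and should reflect your own priorities.
WEIGHTS = {
    "discovery_quality": 0.25,
    "engineering_depth": 0.25,
    "governance_maturity": 0.20,
    "domain_fit": 0.15,
    "post_launch_readiness": 0.15,
}


def weighted_score(ratings: dict) -> float:
    """Combine 1-5 ratings per evaluation area into one weighted score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return round(sum(WEIGHTS[area] * ratings[area] for area in WEIGHTS), 2)


# Illustrative ratings for one made-up finalist.
vendor_a = {
    "discovery_quality": 5,
    "engineering_depth": 4,
    "governance_maturity": 3,
    "domain_fit": 4,
    "post_launch_readiness": 2,
}
print(weighted_score(vendor_a))
```

A score like this is a conversation aid rather than a verdict. A finalist with a strong total but a weak post-launch rating still deserves a pointed follow-up question.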

From Onboarding to ROI: What Success Looks Like

The contract isn't the hard part. The first six weeks usually tell you whether the engagement will create a strategic advantage or consume management attention.

Strong onboarding creates operating clarity early. Everyone should know who owns backlog decisions, who approves architecture changes, how release readiness is judged, and what gets measured. Without that, teams produce motion instead of progress.

What effective onboarding includes

Most enterprise engagements need a small set of working agreements in place immediately:

- Role definitions: product owner, technical lead, design authority, QA ownership, security reviewers, and executive sponsor

- Meeting cadence: weekly delivery reviews, working sessions for blockers, and a clear escalation path for decisions

- Artifacts: architecture diagrams, backlog structure, acceptance criteria, release checklist, and risk log

- Success metrics: not just delivery status, but business outcomes tied to the software

The business metrics should match the use case. For an ecommerce app, that may be stronger conversion flows, better merchandising support, or fewer support tickets tied to broken account experiences. For internal operations, it may be shorter processing cycles, fewer manual handoffs, or better visibility into exceptions.

How AI ROI gets lost

AI initiatives usually lose ROI in one of three ways. Teams overbuild before validating the workflow. They ship without governance and spend time cleaning up unpredictability. Or they can't separate useful usage from expensive noise.

That operational gap is why administrative controls matter. One practical option is the Wonderment Apps prompt management system, which teams can plug into an existing application to support AI modernization. It includes a prompt vault with versioning, a parameter manager for internal database access, a logging system across integrated AIs, and a cost manager that shows cumulative spend.

Those features matter because they answer very concrete post-launch questions:

- Which prompt version is live right now?

- Who changed a model parameter and why?

- What happened across providers during a failed interaction?

- Are costs rising because of traffic, prompt design, or poor workflow boundaries?
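
The last question is largely arithmetic once usage is logged consistently. As a rough sketch, assuming you can export request counts, token totals, and spend per period (the `UsagePeriod` fields and the sample figures are made up for illustration), you can separate traffic growth from prompt growth:

```python
from dataclasses import dataclass


@dataclass
class UsagePeriod:
    requests: int
    total_tokens: int
    cost_usd: float


def explain_cost_change(prev: UsagePeriod, curr: UsagePeriod) -> dict:
    """Split period-over-period growth into traffic vs. tokens per request.

    Assumes the previous period has non-zero requests, tokens, and cost.
    """
    prev_tpr = prev.total_tokens / prev.requests
    curr_tpr = curr.total_tokens / curr.requests
    return {
        "cost_change_pct": round(100 * (curr.cost_usd / prev.cost_usd - 1), 1),
        "traffic_change_pct": round(100 * (curr.requests / prev.requests - 1), 1),
        "tokens_per_request_change_pct": round(100 * (curr_tpr / prev_tpr - 1), 1),
    }


last_month = UsagePeriod(requests=1000, total_tokens=500_000, cost_usd=50.0)
this_month = UsagePeriod(requests=1100, total_tokens=880_000, cost_usd=88.0)
print(explain_cost_change(last_month, this_month))
```

In this made-up case, traffic grew modestly while tokens per request grew much faster, which points at prompt design or workflow boundaries rather than adoption as the main cost driver.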

AI ROI improves when teams manage prompts, parameters, logs, and spending as operating assets, not hidden implementation details.

What mature partnerships do differently

The best consulting relationships don't end at deployment. They shift into a lighter but disciplined rhythm of optimization.

That usually includes:

- Reviewing usage patterns to identify where users adopt the feature, abandon it, or misuse it.

- Tuning workflows so AI is inserted where it reduces friction instead of adding another decision point.

- Updating controls as new models, policies, or compliance requirements affect the product.

- Transferring knowledge so internal teams can own more of the system over time.

That's what ROI looks like in practice. Not a flashy launch. A system your team can operate, improve, and justify.

Your Next Steps in Digital Transformation

Most enterprise software problems don't come from a lack of effort. They come from trying to modernize without making a few hard decisions first.

You need to know what kind of partner you need. You need a realistic view of whether the main bottleneck is architecture, delivery ownership, AI governance, or internal alignment. And you need a plan for operating what gets built after launch, especially if AI is part of the roadmap.

Start with three actions.

- Assess the work objectively: Separate capacity problems from decision problems. If your team lacks specialized skills, augmentation may help. If ownership and cross-functional coordination are the issue, you likely need a managed engagement or dedicated team.

- Draft requirements around outcomes: Build your RFP around business goals, operational constraints, security needs, and post-launch ownership. Don't center it only on a stack checklist.

- Review your AI operating model: If you're adding copilots, recommendations, anomaly detection, or workflow automation, decide now how prompts, logs, parameters, and spend will be managed. That work shouldn't wait until after release.

Software development consulting services create value when they reduce uncertainty and improve execution at the same time. That's the standard worth holding. The right partner helps you modernize the platform, shape the delivery model, and give your team more control over what happens after go-live.

If you're evaluating a modernization effort, planning an enterprise app build, or trying to make AI features operational instead of experimental, Wonderment Apps is worth a look. A practical next step is to schedule a strategy conversation and request a demo of the prompt management system so your team can see how prompt versioning, parameter controls, cross-AI logging, and cumulative cost tracking would fit into your application environment.