Your team probably already has this conversation on repeat. Product wants recommendations, fraud scoring, demand forecasting, or smarter search. Engineering looks at the existing Java stack and asks whether machine learning belongs inside it, or whether the company now needs a separate Python island with its own deployment model, monitoring tools, and handoff friction.

For a lot of businesses, that split creates more problems than it solves. The hard part usually isn't training a model once. It's fitting that model into the application people already depend on, then keeping it fast, observable, and maintainable as requirements change. That's where machine learning in java becomes less of a niche choice and more of a practical architecture decision.

Why Keep Machine Learning Inside the JVM

A Java-first company doesn't need to apologize for wanting to stay Java-first. If your core platform already runs on Spring Boot, Kafka, Hibernate, and a mature CI/CD pipeline, pushing machine learning into a separate runtime often adds avoidable complexity. You introduce another deployment surface, another set of package issues, another observability layer, and another staffing dependency.

That doesn't mean Python has no place. It often does. But production systems care about more than notebooks and experiments. They care about predictable latency, security review, operational ownership, and whether the team on call can debug the thing at 2 a.m.

The practical upside

The JVM gives Java teams a few advantages that matter in production:

- Operational consistency. Your model-serving code can use the same build process, deployment workflow, secrets handling, and service conventions as the rest of your application.

- Tighter integration. Calling local Java code is simpler than crossing process boundaries every time a request needs a prediction.

- Clear ownership. Backend engineers don't have to treat model inference as a black box run by another team with different tooling.

A lot of organizations compare languages as if they're only choosing syntax. They aren't. They're choosing an operating model.

One of the cleaner ways to frame that trade-off is to compare the whole ecosystem around each option, not just the training libraries. Wonderment's look at what language works best for machine learning is useful for that reason. The question isn't "Can Java do ML?" It can. The actual question is whether Java fits your delivery model better than a split-stack setup.

Practical rule: If the prediction has to happen inside an existing transaction, request lifecycle, or event pipeline, keeping inference in the JVM usually makes the system easier to own.

Where Java fits best

Java is strongest when the business already runs on enterprise services and needs ML as part of those services, not as a separate research environment. Typical examples include:

| Scenario | Why JVM-native helps |

|---|---|

| Fintech scoring | Fits existing service boundaries, audit logging, and low-latency APIs |

| Ecommerce personalization | Works well when recommendations sit close to catalog, pricing, and session services |

| SaaS workflow automation | Keeps business rules and model inference in one maintainable codebase |

| Healthcare operations | Supports stronger control over deployment, access, and integration patterns |

The hidden win is team cohesion. Java developers can add model inference, validation, monitoring hooks, and fallback logic without waiting on another stack to catch up. That's usually faster for the business than chasing a theoretically perfect tooling ecosystem.

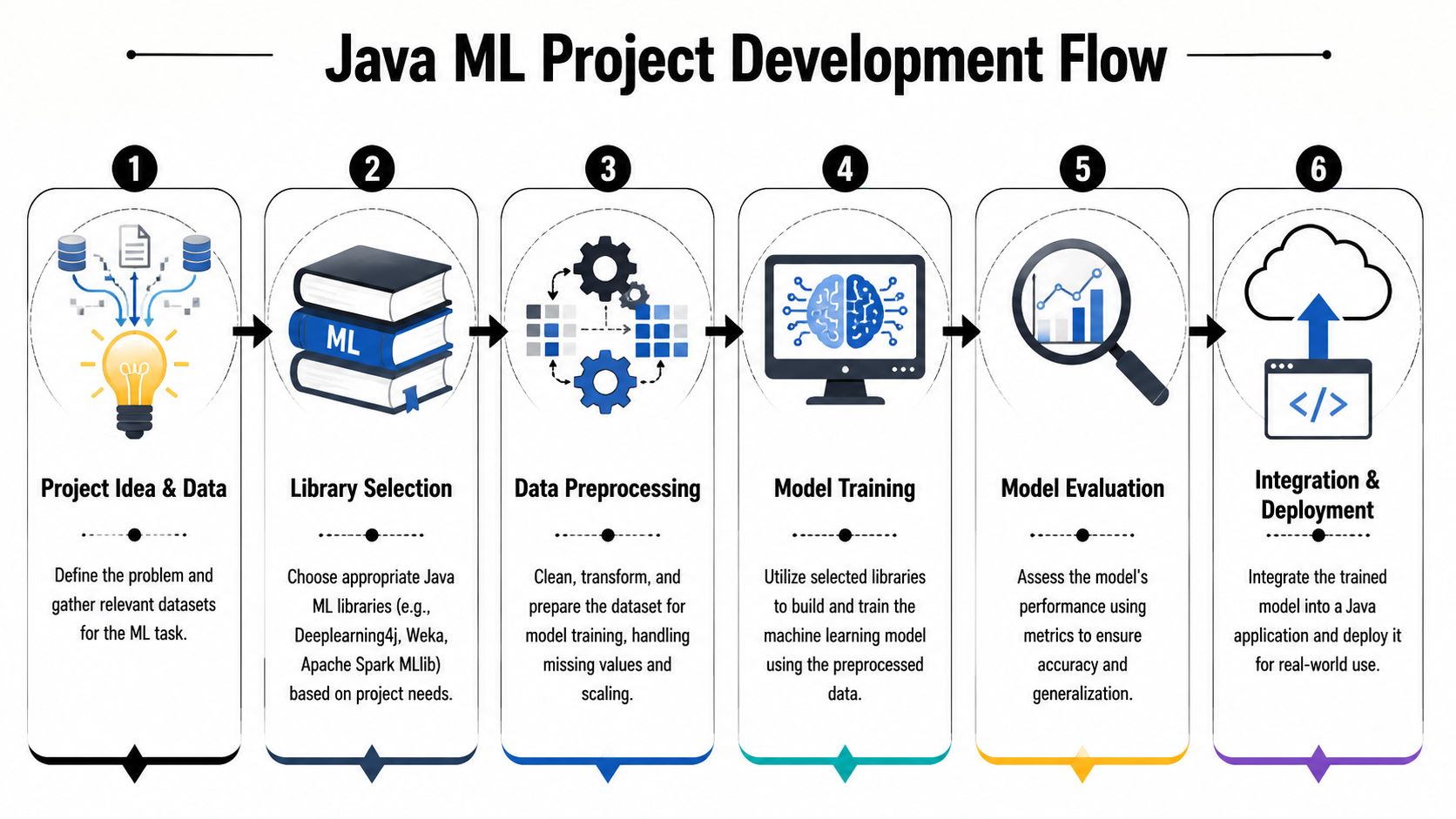

Setting Up Your Java Machine Learning Environment

A solid Java ML environment starts with boring decisions done well. Pick a supported JDK, lock dependency versions, and make training code reproducible from day one. If your setup is casual, your results will be casual too.

Java has earned that discipline. It has been a core platform for large-scale machine learning for decades, with Weka emerging in the mid-1990s, and a 2011 survey indicated that over 40% of data mining curricula referenced Weka as a practical tool, which says a lot about its long-standing role in the field (Weka history and adoption overview).

Build choices that won't annoy you later

Maven is the safer default if the organization already standardizes on it. Dependency resolution is predictable, onboarding is easier, and enterprise build pipelines usually already understand it.

Gradle is a good fit when you want more control over multi-module builds, custom tasks, or mixed projects that package training, batch jobs, and inference services differently. It can be cleaner for larger platforms, but it also gives teams more rope to tangle themselves with.

A practical baseline looks like this:

- Use a modern JDK. Pick the version your platform team already supports broadly.

- Split modules by concern. Keep data preparation, training code, and inference service code separate if the project is likely to grow.

- Pin model artifacts. Treat trained models like release assets, not files somebody dropped into a resources folder.

- Add tests around feature transforms. Most Java ML bugs show up before the model ever predicts anything useful.

Choose libraries by workload

Don't start with "What's the best Java ML library?" Start with "What kind of problem are we solving?"

- Weka works well for classical machine learning, teaching, and quick experimentation on structured datasets.

- Smile is a practical choice for teams that want a programmatic Java API for tabular data tasks.

- Tribuo is worth considering when you want strong structure around model training and evaluation.

- Deeplearning4j fits teams that need JVM-native deep learning integration.

- Spark MLlib belongs in the conversation when the training job is distributed and already close to a Spark data platform.

If you're planning image-heavy workloads or larger neural models, infrastructure matters as much as APIs. This guide to enterprise-grade GPUs for developers is a useful reality check before anyone promises ambitious training timelines on the wrong hardware.

Clean setup beats clever setup. A Java ML project with plain dependency management, versioned artifacts, and explicit preprocessing code will outlast a flashy prototype every time.

Building with Key Java ML Libraries

The fastest way to understand machine learning in java is to build two very different things. One should solve a classic business problem on tabular data. The other should show how Java handles a model that already exists and needs to be integrated into an application.

Java's production credentials are well established here. In the 2010s, Apache Spark MLlib and Deeplearning4j helped push Java into production-grade ML infrastructure. By 2016, Spark was reported in production use by over 1,000 enterprises, and Deeplearning4j had become one of the leading Java deep learning frameworks by 2018 (Java ML ecosystem milestones).

Example one with churn prediction

Suppose an ecommerce platform wants to predict whether a merchant account is likely to churn. This is classic tabular ML. The feature set is structured. The output is a classification. The business need is immediate.

Using a library like Smile, the workflow is usually straightforward:

double[][] x = loadFeatureMatrix();

int[] y = loadLabels();

var model = smile.classification.RandomForest.fit(x, y);

double[] candidate = loadSingleCustomerFeatures();

int prediction = model.predict(candidate);

The code is short, but the important part isn't the call to fit(). It's what happens before that.

What matters more than the model call

The team needs to define feature construction carefully:

- Usage features such as login frequency, ticket volume, or order consistency.

- Commercial features like plan type, discount history, or payment failures.

- Support signals including unresolved issues or delayed onboarding milestones.

If those features are assembled one way during training and another way during inference, the model won't fail loudly. It will just make bad predictions with great confidence. That's a common enterprise mistake.

A safer pattern is to build a single Java feature pipeline that both training and inference use. Keep encoding, normalization, null handling, and category mapping inside shared code. Don't let the batch job and the API invent their own versions of the truth.

The best churn model in the world won't help if your live service encodes "enterprise" differently from your training job.

Example two with image or product tagging

Now take a different problem. A retailer wants to auto-tag catalog images so downstream systems can improve search and merchandising. In this case, the smartest move often isn't training from scratch. It's loading a pre-trained model and integrating it cleanly.

That is where Deeplearning4j becomes useful in a JVM-native environment. A Java service can load a model artifact, preprocess the image, run inference, and return tags to the rest of the application without crossing into another runtime.

A simplified sketch looks like this:

ComputationGraph model = ModelSerializer.restoreComputationGraph("product-tagger.zip");

INDArray imageTensor = imagePreprocessor.preprocess(imageBytes);

INDArray[] outputs = model.output(imageTensor);

List<String> tags = decodePredictions(outputs[0]);

That pattern is common in enterprise systems because it avoids retraining complexity when the main value is in integration, not model research.

Library fit by project type

| Project type | Good Java-first options |

|---|---|

| Churn, fraud, propensity, scoring | Smile, Tribuo, Weka |

| Distributed feature processing and training | Spark MLlib |

| Deep learning inside JVM services | Deeplearning4j |

| Legacy academic or exploratory workflows | Weka |

Spark deserves special mention when the data already lives in a distributed processing environment. If your team is doing recommendation, segmentation, or large-scale event analysis, the surrounding platform matters as much as the algorithm. For that side of the stack, Wonderment's write-up on Scala, Hadoop, and Spark in enterprise systems gives useful context on where JVM-based big data workflows still shine.

What works and what doesn't

What works:

- Shared preprocessing code between training and inference.

- Narrow use cases with clear business actions attached to predictions.

- Model wrappers that expose simple interfaces to the rest of the Java application.

What doesn't:

- Treating libraries as interchangeable. Weka, Smile, Spark MLlib, and DL4J solve different problems.

- Starting with deep learning by default when a tree-based model on structured data would be easier to deploy and explain.

- Letting data science outputs arrive as mystery files with no versioning, no schema expectations, and no ownership.

The JVM is strong when the engineering discipline around the model is strong. That's the pattern to copy.

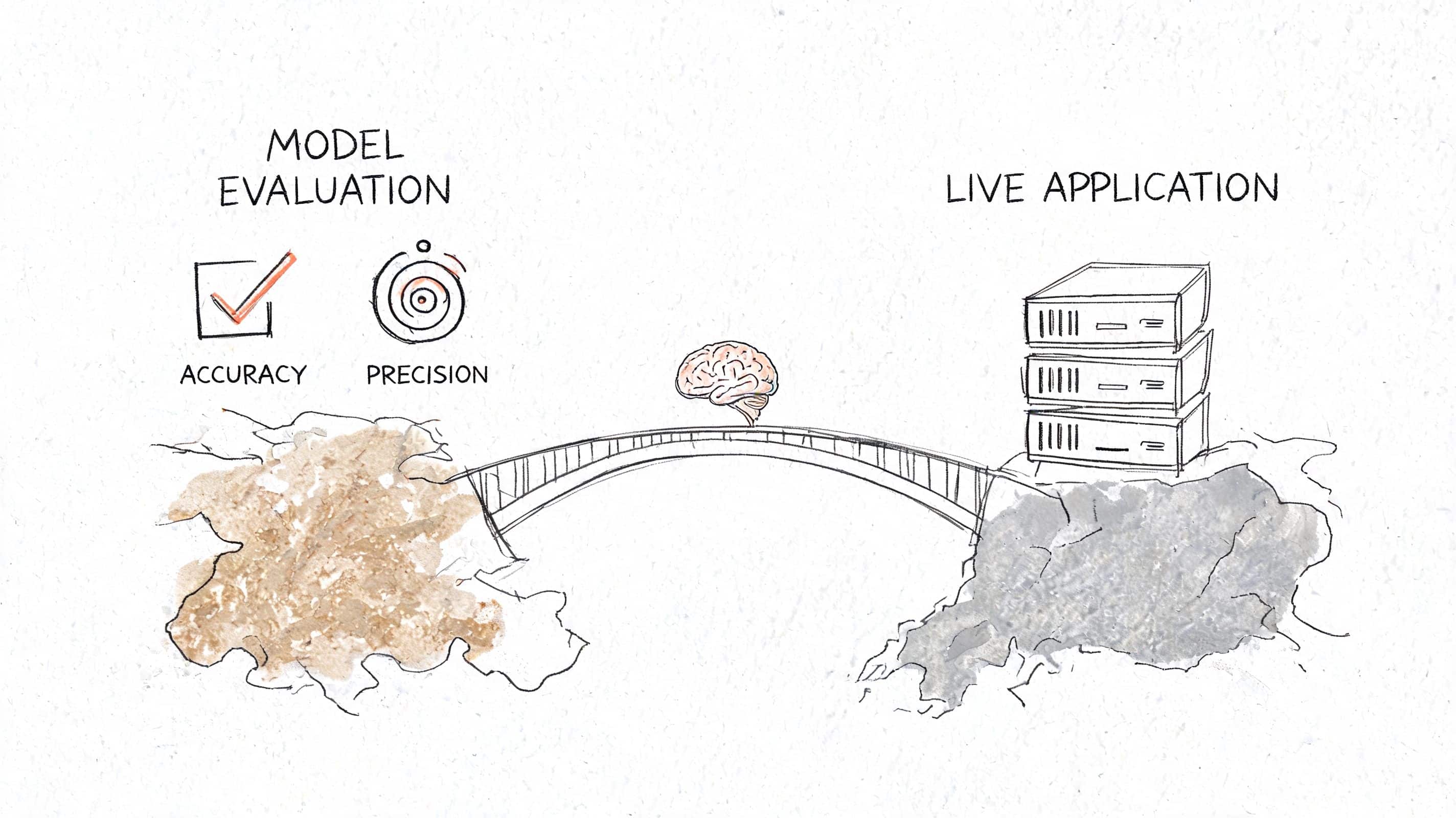

Evaluating and Integrating Your Java Model

A trained model sitting on a developer laptop is not a product feature. It becomes a product feature when the team can measure it, expose it safely, and decide how it behaves when production traffic gets messy.

Evaluate for the business decision

Teams often obsess over a single score and skip the decision context. That leads to models that look fine in offline tests and disappoint everyone in production.

For most classification problems, start with four basics:

- Accuracy when classes are reasonably balanced.

- Precision when false positives are expensive.

- Recall when missing a real event hurts more.

- F1 when precision and recall both matter.

A small Java utility can calculate those from prediction results and keep model review grounded in actual trade-offs:

int tp = 0, fp = 0, fn = 0, tn = 0;

for (int i = 0; i < actual.length; i++) {

if (predicted[i] == 1 && actual[i] == 1) tp++;

else if (predicted[i] == 1) fp++;

else if (actual[i] == 1) fn++;

else tn++;

}

double precision = tp / (double) (tp + fp);

double recall = tp / (double) (tp + fn);

double f1 = 2 * precision * recall / (precision + recall);

The code is simple. The discussion around it shouldn't be. A fraud team may tolerate more false positives than a marketing recommendation service. A healthcare workflow may need stronger review paths around low-confidence predictions.

Pick an integration pattern deliberately

For Java applications, two deployment patterns usually make sense.

Embedded model inside the application

This works well when prediction is tightly coupled to request handling and the model is lightweight enough to live in the same service.

Pros

- Low latency

- Fewer moving parts

- Easier local development for backend teams

Cons

- Application and model scale together

- Model updates may require full service releases

- Memory pressure can affect the whole service

Dedicated inference microservice

This is the better fit when multiple applications need the same model, or when model lifecycle and application lifecycle should be separated.

Pros

- Clearer boundary around model ownership

- Independent scaling

- Easier to support multiple model versions

Cons

- More operational overhead

- Another service to secure and monitor

- Network and serialization cost

There is a real performance trade-off here. Pure JVM pipelines can reduce prediction latency by 30 to 50 ms compared with calling Python models over REST, while still reaching 90 to 95% of the accuracy of equivalent Python models on tabular data because they avoid inter-process communication and serialization overhead (JVM versus Python deployment trade-offs).

If a prediction sits directly inside checkout, fraud review, or search ranking, extra network hops are rarely "just architecture." Users feel them.

A good default

For a team's first production ML feature, embedded inference is often the simplest path if the model is modest in size and the service boundary is already clear. Move to a dedicated inference service when model reuse, independent scaling, or release cadence starts to justify the added complexity.

Productionization Performance and Monitoring

A production model doesn't fail only when the service goes down. It also fails when input data shifts, business behavior changes, or a preprocessing bug changes the meaning of a feature. Those are the failures that cost teams time because they look like normal software until you inspect the predictions.

Tune the service before chasing exotic fixes

Start with plain engineering hygiene.

- Profile memory use. Large model artifacts, feature maps, and batch allocations can create avoidable garbage collection pressure.

- Warm critical paths. If the service handles latency-sensitive inference, make sure startup and early traffic don't pay all the initialization cost.

- Cache stable assets. Label maps, vocabularies, and lookup tables shouldn't be rebuilt on every request.

- Protect fallback behavior. If the model or feature call fails, define what the application does next. Returning no recommendation may be acceptable. Blocking checkout usually isn't.

Java teams sometimes focus so heavily on the model that they forget the service is still just software. Thread pools, object allocation, serialization format, and dependency weight all matter.

Monitor the model inside the JVM

For many Java ML projects, the distinction between dependable operation and guesswork often arises from monitoring practices. Teams using JVM-native monitoring that logs prediction metadata and feature distributions can detect concept drift 20 to 40 days earlier than teams relying only on batch validation (JVM-native monitoring and drift detection guidance).

That matters because drift isn't abstract. In retail, seasonality changes product demand. In fintech, transaction behavior changes with promotions, payroll cycles, and fraud tactics. In SaaS, account usage changes after pricing or onboarding updates.

A practical monitoring setup inside a Java service usually includes:

Prediction metadata logging

Record model version, timestamp, route, and a request correlation ID.Feature distribution snapshots

Track the shape of important inputs over time. A category that suddenly disappears or spikes is worth investigating.Outcome backfill

Where labels become available later, join them back to the prediction so the team can review real performance.Alerting on model-specific signals

Latency and error rate are not enough. You also need warnings for drift, skew, and unusual prediction distributions.

Log enough to investigate model behavior. Don't log so much raw feature data that storage, privacy, and compliance become your next outage.

What mature teams do differently

The strongest Java ML systems treat observability as part of the inference contract. They don't bolt it on after complaints arrive from support or product.

They also separate three concerns clearly:

| Concern | What to watch |

|---|---|

| Service health | Latency, error rate, resource usage |

| Data health | Missing values, schema mismatches, feature drift |

| Model health | Prediction mix, confidence behavior, post-hoc business outcomes |

That separation makes retraining decisions more rational. Sometimes the model needs replacement. Sometimes the upstream data changed. Sometimes the service is healthy and the business rule around the prediction is wrong. Monitoring should help you tell those apart quickly.

Managing Your AI Integration for Long-Term Success

A single model is manageable. A real product rarely stops at one. Soon the platform has recommendation logic, prompt-driven features, third-party AI APIs, internal classifiers, and business rules that decide when each should run. That's when technical debt stops looking like code and starts looking like operational fog.

Java teams usually handle that transition best when they create one administrative layer for AI behavior. That layer should track prompt versions, model or provider parameters, logs across AI interactions, and cost visibility. Without that, teams end up debugging through scattered dashboards, config files, and tribal knowledge.

This is also where AI stops being just an engineering concern. Product, compliance, support, and finance all need a cleaner view of what's happening. The broader opportunity is bigger than prediction services alone. This piece on unlocking smarter insights with AI is a good reminder that long-term value comes from connecting models, workflows, and decisions rather than treating AI as a one-off feature.

If you're modernizing an existing application, pair your Java ML work with a governance model that covers prompt changes, parameter access, logging standards, and release discipline. Teams that skip that layer often move fast for a sprint and then slow down for months.

For a business-focused view of how these efforts connect to product outcomes, Wonderment's perspective on machine learning for businesses is worth reading.

If you're planning machine learning in java and want help turning prototypes into maintainable production systems, Wonderment Apps can help. Their team works with organizations that need AI modernization without wrecking the reliability of the software they already depend on. They also offer an administrative prompt management system with a prompt vault and versioning, parameter management for internal data access, unified logging across integrated AI tools, and cost tracking so teams can manage experimentation without losing control. If your roadmap includes Java ML, AI-assisted features, or legacy platform modernization, it's worth scheduling a demo.