A team ships an AI-assisted pricing feature on Friday. By Monday, support has a queue full of strange totals, failed checkouts, and one bug that came down to a simple mismatch: a value that looked like a number arrived as text, flowed through the app, and broke at the worst possible point.

That kind of failure doesn't start with AI. AI just makes it easier to trigger. It starts with a deeper architectural choice about how your software handles data, where it catches mistakes, and how much uncertainty your runtime is allowed to absorb.

For CTOs, VPs of Engineering, and product leaders, static vs. dynamic typing isn't an academic language debate. It's a decision that affects release speed, production reliability, maintenance cost, and how safely you can integrate unpredictable model output into a structured application.

The Hidden Choice That Defines Your App's Future

Tiny type mistakes create expensive outcomes. A field expected to hold a number receives a string. A model returns a shape your frontend didn't expect. A backend service takes in the wrong kind of value without warning until a customer hits the exact path that exposes it.

That risk grows when you're modernizing legacy software, layering in AI features, and trying to ship quickly without breaking the systems that keep revenue moving. Typing strategy sits right in the middle of that tension. It determines whether your team catches mismatches before deploy or discovers them after customers do.

Leaders often focus on framework choice, cloud architecture, or release cadence first. Those matter. But typing decisions shape all of them, especially if you're still evaluating the broader technology stack for your software project.

Why this matters more in AI projects

AI integrations make data contracts messier, not cleaner. A traditional internal API usually returns a predictable structure because your own team controls both ends. An LLM or model-driven workflow can return useful output that's still inconsistent in format, naming, or completeness.

That means the old trade-off gets sharper:

- Move faster with flexibility: Dynamic environments help teams prototype prompts, pipelines, and experiments quickly.

- Reduce failure in critical paths: Static checks help teams block bad assumptions before they hit checkout, patient records, reporting, or billing.

- Control operational drift: The more AI-generated content or logic enters your system, the more important it becomes to define where ambiguity is allowed and where it isn't.

Practical rule: The closer code is to money, compliance, or customer trust, the less ambiguity you should tolerate.

This is why typing should be treated as a business control, not just a developer preference.

Static vs Dynamic Typing: A Simple Explanation

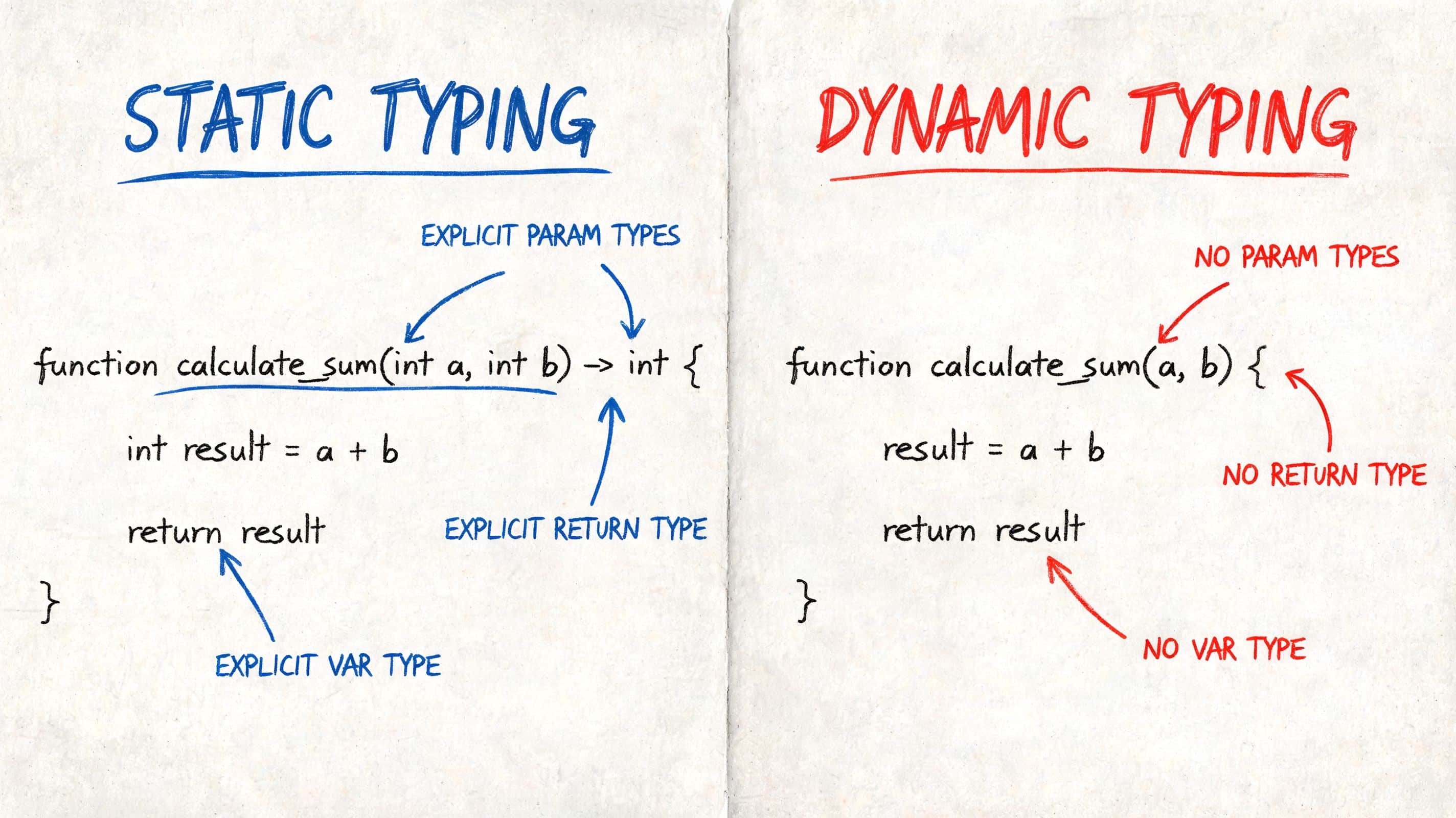

The core difference is timing. When does the system verify that a value is the kind of thing your code expects?

Static typing checks before the app runs

In a statically typed language, developers declare or constrain the kinds of values a variable, parameter, or return value can hold. The compiler checks those assumptions before the software runs.

Java, C#, and TypeScript are familiar examples. If a function expects a number and someone passes text instead, the build process or editor usually flags it before that code reaches production.

A good mental model is blueprint review. If an architect marks a load-bearing structure incorrectly, the inspector stops the plan before construction starts. The correction happens early, when it's still cheap.

Dynamic typing checks while the app runs

In a dynamically typed language, values carry their type information at runtime. The code doesn't insist on as many upfront declarations. JavaScript, Python, and Ruby are common examples.

That gives teams speed and flexibility. It also means some mistakes don't show up until a real execution path hits them. Sometimes your tests catch that. Sometimes a customer does.
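A minimal sketch of that timing, in Python. The function `shipping_cost` and its pricing rule are hypothetical, invented only for illustration. The defect sits in a branch that runs only for express orders, so every standard order passes without complaint.

```python
def shipping_cost(weight_kg, express=False):
    """Hypothetical shipping rule, used only to show when a type error surfaces."""
    base = 4.0 + 1.5 * weight_kg
    if express:
        # Bug: the surcharge is text, not a number. Nothing checks this
        # until an express order actually executes this line.
        surcharge = "10"
        return base + surcharge
    return base


# The common path works, so the defect stays invisible.
print(shipping_cost(2))  # 7.0

# The rare path fails only when something finally exercises it.
try:
    shipping_cost(2, express=True)
except TypeError as exc:
    print(f"Failed at runtime: {exc}")
```

Whether a test or a customer reaches the express branch first depends entirely on test coverage.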

The mental model here is building as you go. You can move quickly, adapt fast, and avoid upfront formality. But some structural issues aren't obvious until weight hits the wrong place.

A quick side-by-side view

| Decision point | Static typing | Dynamic typing |
|---|---|---|
| When errors surface | Before runtime, during compile or analysis | During runtime or tests |
| Best fit | Core systems, compliance-sensitive code, long-lived platforms | Prototypes, scripting, data exploration, fast experiments |
| Team benefit | Safer refactors, clearer contracts | Faster iteration, less upfront ceremony |
| Typical risk | More upfront structure | More runtime surprises |

Neither approach is universally superior. The better question is where your team needs certainty and where it needs freedom.

Static typing optimizes for prevention. Dynamic typing optimizes for adaptability.

For most app leaders, that framing is more useful than language tribalism.

Seeing the Difference in Real Code

Abstract explanations help. Code makes the trade-off obvious.

JavaScript and TypeScript

A dynamic example in JavaScript can look clean and harmless:

function calculateTotal(price, tax) {
  return price + tax;
}

console.log(calculateTotal("$100", 5));

That code runs. It also produces the wrong result for the business rule. Because one operand is a string, JavaScript's + operator concatenates instead of adding, and the function returns the text "$1005" rather than a number.

The bug isn't hidden because the code is complicated. It's hidden because the function accepts anything.

Now compare that to TypeScript:

function calculateTotal(price: number, tax: number): number {
  return price + tax;
}

console.log(calculateTotal("$100", 5));

The editor and build tooling object immediately. The call site violates the declared contract. The mistake gets blocked before the feature ships.

Python and Java

The same pattern appears in backend work.

Python gives developers a lot of freedom:

def apply_discount(total, discount):
    return total - discount

print(apply_discount("100", 5))

That flexibility is useful when you're exploring data or moving quickly through a prototype. But the function doesn't defend itself much. Python won't subtract a number from text, so this call raises a TypeError, but only when the runtime actually reaches it.
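One way a Python team might make the function defend itself is a guard at the boundary. This is a sketch, not a prescribed pattern: the type hints are ignored by Python at runtime, so the explicit check does the enforcing, and the error wording is an assumption for illustration.

```python
from numbers import Real


def apply_discount(total: float, discount: float) -> float:
    """Subtract a discount, rejecting non-numeric input at the function boundary."""
    for name, value in (("total", total), ("discount", discount)):
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, Real):
            raise TypeError(f"{name} must be a number, got {type(value).__name__}")
    return total - discount


print(apply_discount(100, 5))  # 95

try:
    apply_discount("100", 5)
except TypeError as exc:
    print(exc)  # total must be a number, got str
```

The hints still earn their place. A static checker such as mypy can use them to flag the bad call before the code runs, which moves part of the check earlier without leaving Python.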

Java forces the contract up front:

public class Pricing {
    public static double applyDiscount(double total, double discount) {
        return total - discount;
    }

    public static void main(String[] args) {
        System.out.println(applyDiscount("100", 5));
    }
}

That doesn't compile. The compiler rejects the call because a String can't be passed where a double is expected.

Why leaders should care about this difference

A statically typed system creates friction earlier. That can feel slower during initial development. But it moves defect discovery to a cheaper phase.

A dynamically typed system removes friction early. That often helps with experimentation, especially in AI workflows, analytics tooling, and internal scripts. But it pushes more responsibility onto tests, runtime safeguards, and disciplined engineering habits.

Here's the practical business impact:

- Product teams get faster spikes and prototypes with dynamic tooling.

- Platform teams get stronger contracts and safer refactoring with static tooling.

- AI teams usually need both, because experimentation and reliability live in the same roadmap.

A language doesn't create discipline for your team. It shapes where discipline must show up.

If you choose dynamic typing, your team must be excellent at tests, validation, and runtime guards. If you choose static typing, your team must avoid overengineering type systems that slow delivery without reducing meaningful risk.

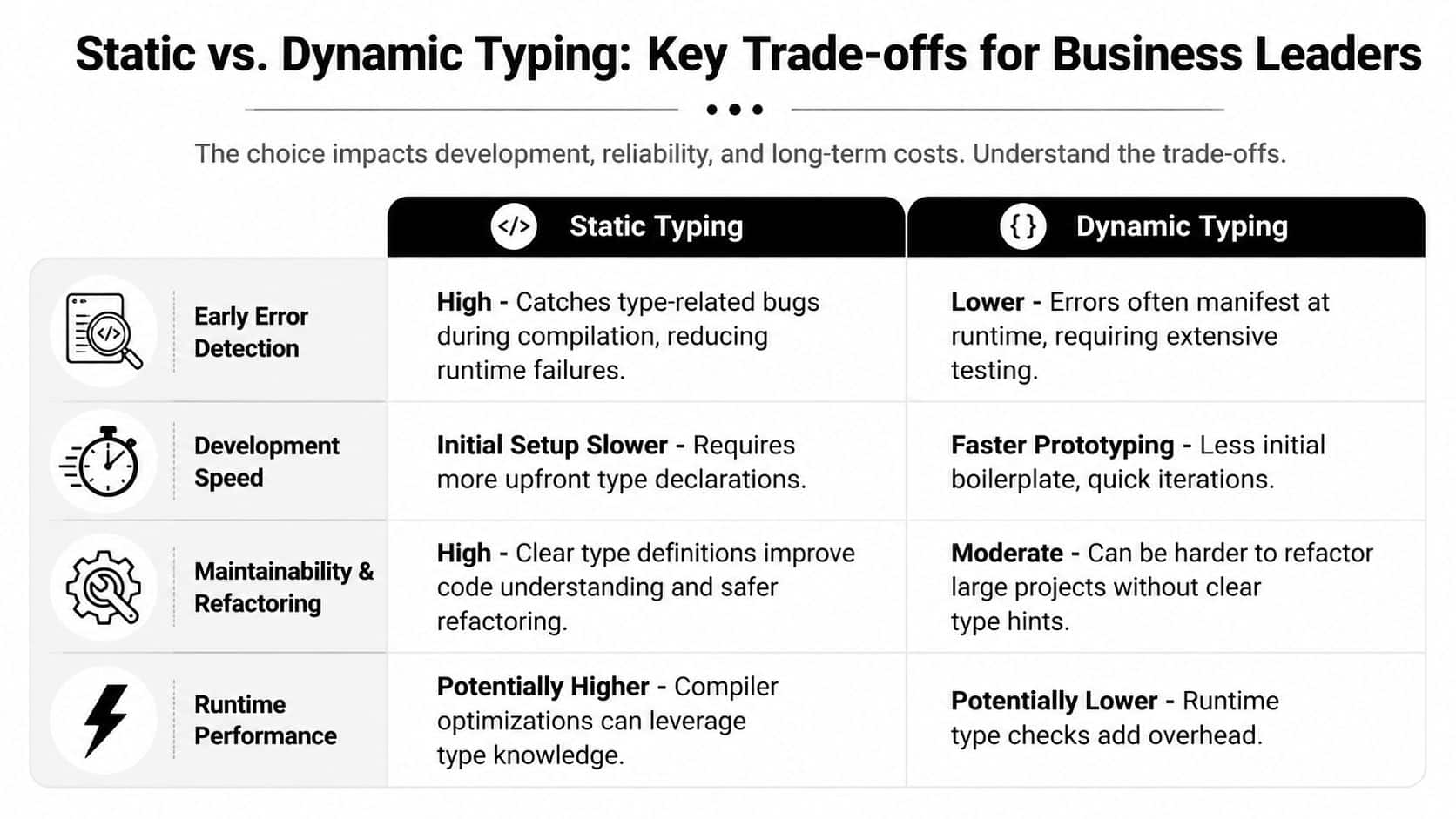

Key Trade-offs for Business Leaders

Typing strategy sets where your organization pays for mistakes. You either spend more effort defining constraints before code ships, or you spend more effort catching bad assumptions in tests, monitoring, and production.

That choice has sharper consequences in AI products. AI features introduce uncertain inputs, evolving schemas, vendor API changes, and output that looks valid until it hits a business rule. For a CTO or VP Engineering, the key question is not which typing model is more elegant. It is which one keeps delivery speed, reliability, and operating cost aligned with the product you are trying to build.

| Business concern | Static typing | Dynamic typing |
|---|---|---|
| Development speed | More upfront structure | Faster early iteration |
| Reliability | Stronger compile-time protection | More runtime validation required |
| Performance | Often better optimization potential | Often more overhead at runtime |
| Long-term maintenance | Clearer contracts for growing teams | Easier to start, harder to govern at scale |

Development speed

Dynamic typing usually wins the first sprint. Teams can test ideas quickly, especially in AI proof-of-concepts, internal tools, prompt pipelines, and data cleanup jobs where requirements are still moving.

That speed is real. So is the bill that can follow.

In practice, dynamic code shifts more work into runtime checks, contract testing, and operational debugging once a prototype becomes a product. If an AI workflow pulls customer data, calls multiple model providers, and writes results back into core systems, every loosely defined payload becomes a possible failure point. Teams still move fast, but more of that effort goes into verifying assumptions after code is written.

Static typing slows early motion and often improves later execution. Engineers make interface decisions sooner, which reduces ambiguity during handoffs, refactors, and parallel development across teams.

Reliability and maintenance

Reliability is where the business case gets clearer. Static typing helps teams catch contract mismatches before deployment, which matters more as systems gain more owners, more integrations, and more AI-powered paths.

I see this most often in products that started as a fast experiment and then became operational infrastructure. The first version works. Six months later, the product has billing rules, audit needs, fallback behavior between model vendors, and support teams depending on predictable outputs. At that point, weak contracts stop being a developer preference issue and become an uptime and cost issue.

For business leaders, the downstream impact is straightforward. Production defects interrupt engineering work, expand QA cycles, increase support load, and delay roadmap commitments. That is one reason long-term architecture choices shape the true cost of software development over time, not just the cost of getting version one live.

Dynamic typing can still work well here. It just requires stronger discipline around schema validation, integration tests, observability, and code review standards. If that discipline is uneven, maintenance cost rises fast.

Performance

Performance should be judged by workload, not ideology. Statically typed languages often give compilers and runtimes more information to optimize execution. That can matter in services with high throughput, lower latency targets, or expensive AI orchestration layers where inefficient glue code drives infrastructure spend.

A useful example comes from the University of Twente research paper on GDScript typing performance. The results varied by operation and data structure. Some typed cases improved performance, while others slowed down. That is a practical reminder to benchmark the path that affects customer experience or cloud cost, rather than assuming one typing model always wins.
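Benchmarking a specific path doesn't need heavy tooling. The sketch below uses Python's standard timeit module to compare two ways of totaling line items. Both functions and the sample data are hypothetical stand-ins for whatever hot path matters in your system, and the timings will vary by machine and Python version.

```python
import timeit


def total_with_loop(prices_cents):
    """Hypothetical hot path: sum line items with an explicit loop."""
    total = 0
    for price in prices_cents:
        total += price
    return total


def total_with_builtin(prices_cents):
    """Same result using the built-in sum."""
    return sum(prices_cents)


# Integer cents keep both variants exactly comparable.
prices_cents = [1999, 500, 4250] * 1000

# Time each variant on identical input.
loop_seconds = timeit.timeit(lambda: total_with_loop(prices_cents), number=200)
builtin_seconds = timeit.timeit(lambda: total_with_builtin(prices_cents), number=200)

print(f"loop:    {loop_seconds:.4f}s")
print(f"builtin: {builtin_seconds:.4f}s")
```

The point is the habit, not these two functions: measure the path that drives latency or spend, then decide.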

For AI systems, this usually shows up outside the model itself. Data transformation, request validation, caching, concurrency control, and fallback logic often become the bottleneck before model inference does.

Security and testing

Typing does not validate business correctness. It validates a narrower class of assumptions.

That distinction matters in AI features. A model response can match the expected type and still be unsafe, misleading, non-compliant, or expensive to process at scale. A statically typed service may confirm that a summary object contains the right fields. It cannot confirm that the summary is legally safe to show a customer, or that the model did not fabricate a key fact.

Dynamic systems have the same exposure, with less protection around interfaces. Static systems reduce one category of failure. They do not remove the need for output validation, human review paths, policy checks, and careful test design around real-world edge cases.
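A short sketch of that gap, assuming a hypothetical refund record. Every field has the right type, so a type checker is satisfied. The business rule still has to be checked separately.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Refund:
    """Hypothetical refund record; field names are illustrative."""
    order_total: float
    refund_amount: float


def check_refund(refund: Refund) -> list[str]:
    """Return business-rule violations that a type checker cannot see."""
    problems = []
    if refund.refund_amount <= 0:
        problems.append("refund must be positive")
    if refund.refund_amount > refund.order_total:
        problems.append("refund exceeds order total")
    return problems


# Both fields are floats, so the types are satisfied. The rule is not.
suspicious = Refund(order_total=40.0, refund_amount=400.0)
print(check_refund(suspicious))  # ['refund exceeds order total']
```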

For leadership teams, the practical takeaway is simple. Choose static typing when reliability, team scale, and integration complexity are likely to dominate. Choose dynamic typing when speed of learning matters more than hard guarantees, and fund the testing and runtime safeguards needed to support that choice.

Beyond the Binary: Gradual and Hybrid Typing

The best answer for many teams isn't pure static or pure dynamic. It's a layered approach.

Gradual typing solves real modernization problems

Gradual typing lets teams add stronger type checks to codebases that began in dynamic languages. TypeScript on top of JavaScript is the most familiar example. Python teams often use type hints and tooling such as mypy to introduce more structure without abandoning the ecosystem that makes Python attractive.

This matters in modernization work. Rewriting an entire platform into a new language is often the most disruptive option. Adding type safety to the parts that carry the most business risk is usually more practical.

A common pattern looks like this:

- Critical paths get strict typing: payments, auth, order orchestration, audit logging

- Exploratory areas stay flexible: model experimentation, internal scripts, data shaping

- Interfaces become explicit: API contracts, event payloads, and service boundaries get tighter even if the whole codebase doesn't

That approach reflects how real teams work. Different parts of the same product tolerate different levels of ambiguity.
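In a Python codebase, that split might look like the sketch below. The names `PaymentRequest`, `charge`, and `summarize_experiment` are hypothetical. The payment path gets precise annotations that a checker such as mypy can verify, while the exploratory helper stays loosely typed on purpose.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class PaymentRequest:
    """Strictly typed: this sits on a critical path."""
    order_id: str
    amount_cents: int
    currency: str


def charge(request: PaymentRequest) -> str:
    """Hypothetical payment call with an explicit contract for every caller."""
    return f"charged {request.amount_cents} {request.currency} for {request.order_id}"


def summarize_experiment(rows: list[dict[str, Any]]) -> dict[str, Any]:
    """Exploratory helper: loosely typed while the data shape is still moving."""
    return {"rows": len(rows), "columns": sorted({key for row in rows for key in row})}


print(charge(PaymentRequest(order_id="A-100", amount_cents=2599, currency="USD")))
print(summarize_experiment([{"prompt": "v1", "score": 0.8}, {"prompt": "v2"}]))
```

Note that Python does not enforce these annotations when the code runs. The protection comes from running a static checker in the editor and in CI.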

Hybrid typing is rising for a reason

Recent market movement supports that middle ground. Unosquare's guide to static and dynamic typing reports that hybrid TypeScript usage is up 47% year over year in fintech and SaaS, and 62% of AI/ML teams now prefer hybrid typing for data-powered apps.

Those numbers make sense. AI features create a split-screen reality inside one product. You need flexibility where data and prompts evolve quickly. You also need compile-time safety where customer-facing workflows, compliance rules, and scaling constraints can't be left to runtime luck.

Strong and weak typing aren't the same debate

Leaders also hear "strongly typed" and "statically typed" used as if they mean the same thing. They don't.

- Static vs dynamic describes when type checks happen.

- Strong vs weak describes how permissive a language is about mixing or coercing values.

That distinction matters because some costly bugs come from coercion behavior rather than the presence or absence of declarations. Teams should evaluate both the language and the runtime habits it encourages.
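Python illustrates the difference. It is dynamically typed, yet strongly typed: where JavaScript quietly concatenates text and a number, Python refuses to guess. A minimal sketch:

```python
price_text = "100"
tax = 5

# Dynamically typed but strongly typed: mixing str and int is rejected.
try:
    total = price_text + tax
except TypeError as exc:
    print(f"Rejected: {exc}")

# The conversion has to be explicit, which makes the intent visible in review.
total = int(price_text) + tax
print(total)  # 105
```

The error still arrives at runtime, so this is not a substitute for static checks. It does mean the failure is loud instead of silent.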

Hybrid systems usually win when the business has both unstable inputs and stable obligations.

That's a useful description of most AI-enabled products today.

Your Decision Framework: Industry Recommendations

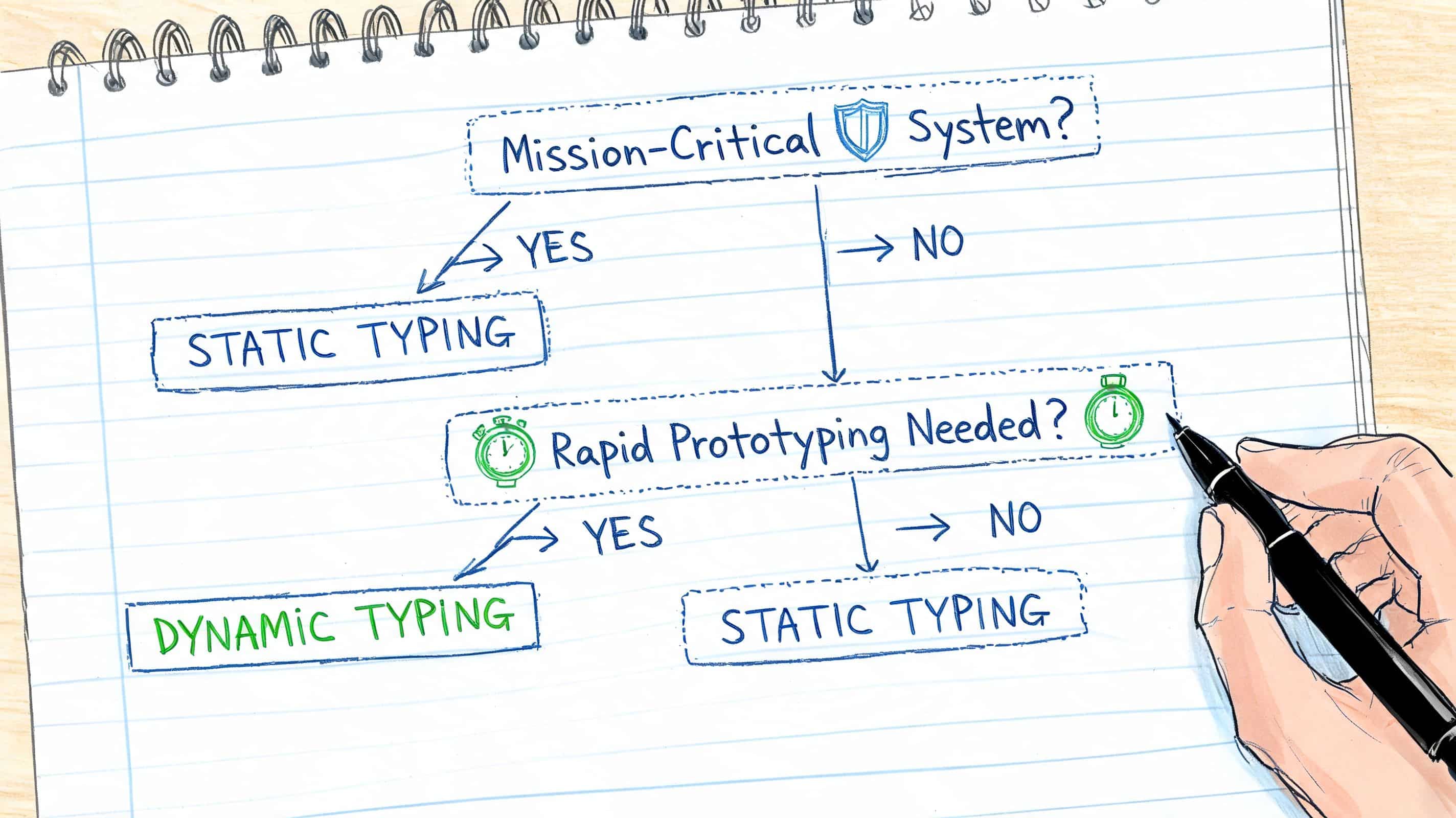

Typing strategy should match operating risk, change velocity, and the cost of failure. For AI products, add one more filter. Ask where model output can create downstream damage if it is wrong, malformed, or inconsistent with yesterday's response.

A practical decision usually starts with one question. What costs more in your business: slower experimentation now, or runtime mistakes later?

Start with these questions

Use this checklist with engineering, product, security, and platform leads before you commit:

- Is failure expensive? If a bug can affect payments, health data, identity, reporting, approvals, or legal compliance, bias toward static typing.

- Will this system carry revenue or operational load for years? Long-lived code benefits from explicit contracts, safer refactors, and lower onboarding cost for new teams.

- Are you still testing the shape of the product? Dynamic tools can shorten the path from idea to working prototype.

- How many teams and vendors will touch this code? Shared ownership raises the value of type clarity at service boundaries.

- Will AI output enter a customer-facing or business-critical workflow? Define the point where probabilistic output becomes validated application state.

- What is your tolerance for tech debt? If the answer is low, set typed boundaries early and reduce tech debt before it turns into delivery drag.
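The point where probabilistic output becomes validated application state can be a single function. The sketch below assumes the model has been asked to return JSON with `sku` and `unit_price_cents` fields. Those field names, the `PriceQuote` type, and the error messages are all assumptions for illustration.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceQuote:
    """Validated application state. Field names are assumptions for this sketch."""
    sku: str
    unit_price_cents: int


def parse_quote(raw_model_output: str) -> PriceQuote:
    """Turn untrusted model text into a PriceQuote, or raise ValueError."""
    try:
        data = json.loads(raw_model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("model output must be a JSON object")

    sku = data.get("sku")
    price = data.get("unit_price_cents")
    if not isinstance(sku, str) or not sku:
        raise ValueError("sku must be a non-empty string")
    # Reject "1999" (text) and True (bool is an int subclass) instead of coercing.
    if isinstance(price, bool) or not isinstance(price, int) or price < 0:
        raise ValueError("unit_price_cents must be a non-negative integer")
    return PriceQuote(sku=sku, unit_price_cents=price)


print(parse_quote('{"sku": "TEE-01", "unit_price_cents": 1999}'))

try:
    parse_quote('{"sku": "TEE-01", "unit_price_cents": "1999"}')
except ValueError as exc:
    print(f"Rejected: {exc}")
```

Everything downstream of `parse_quote` can rely on the shape of a `PriceQuote`. Everything upstream is treated as untrusted text, including a price that merely looks like a number.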

Industry-specific recommendations

Fintech and healthcare

Use static typing for core systems by default.

These products run on trust, traceability, and predictable behavior under change. Ledger logic, claims processing, eligibility rules, audit trails, and medication workflows all benefit from stronger compile-time guarantees. AI can still play a role in document intake, summarization, or triage, but the handoff into persisted records and decision logic should be typed and validated aggressively. That reduces incident risk, rework, and compliance exposure.

Ecommerce and media

Use a hybrid model.

Keep checkout, identity, inventory, pricing, subscriptions, and order state on the stricter side. Give more flexibility to recommendation services, content operations, experimentation pipelines, and internal tooling where inputs change often and speed matters more than perfect upfront modeling. For teams balancing frontend and backend choices together, this guide to mobile frameworks for founders is useful because framework decisions affect how much type safety you can preserve from API contracts through the user interface.

AI adds another layer here. Product copy generation, search relevance, and personalization can move quickly with looser workflows. Cart logic, refunds, and fulfillment status should not.

Public sector and nonprofits

Gradual typing is often the practical choice.

These teams usually inherit aging systems, changing service rules, limited budgets, and long procurement cycles. A full rewrite is rarely the best use of capital. Start by adding typed contracts around high-risk workflows, external integrations, and reporting paths. Expand from there as requirements settle and funding allows. That approach improves reliability without forcing a costly architecture reset.

What usually fails

A few patterns create predictable problems across industries:

- Pure dynamic typing in transaction-heavy systems: teams ship quickly, then slow down as testing, debugging, and incident review absorb more time

- Pure static typing during early product discovery: teams spend too much effort modeling ideas that have not earned permanence

- Weak boundaries between services: typed code inside one service does little if unchecked payloads move across APIs, queues, and webhooks

- AI features without contract enforcement: model output may look structured while still violating business rules, schema expectations, or cost controls

The best choice is rarely ideological. It is a budgeting decision, a reliability decision, and an AI governance decision wrapped into one.

Build Apps That Last: From Code to Cost Control

Typing is a strategic lever. It affects where your team catches mistakes, how safely you can scale, and how much operational drag builds up as the product grows.

For AI integration work, the stakes get higher because model output introduces uncertainty by default. Dynamic workflows are valuable for experimentation, prompt iteration, and data shaping. But once AI output touches user-facing logic, billing, approvals, records, or analytics, uncertainty needs a boundary.

That boundary isn't created by language choice alone. Teams also need disciplined prompt versioning, reliable parameter control, clear logging across model interactions, and visibility into cumulative spend. Without that, even a well-typed application can become difficult to govern once AI features spread across products and teams.

A good rule is simple:

- Use typing to control code contracts

- Use validation to control runtime inputs

- Use operational tooling to control AI behavior, traceability, and cost

Security belongs in the same conversation. If your organization is introducing AI into a SaaS product, this guide to SaaS application security is a practical companion read because runtime reliability and security posture often fail at the same integration seams.

Long-term health also depends on reducing complexity before it hardens into fragility. That's why leaders should connect architecture decisions like typing to a broader plan for reducing tech debt in evolving software systems.

The takeaway is straightforward. Static vs. dynamic typing isn't a purity test. It's a design decision about where your business wants speed, where it demands certainty, and how deliberately it manages the boundary between the two.

Wonderment Apps helps teams modernize software for AI without losing control of reliability, integrations, or spend. If you're evaluating typing strategy, AI feature architecture, or the operational risks of model-driven workflows, Wonderment Apps can show you how its prompt management system supports prompt versioning, parameter control, cross-model logging, and cumulative cost visibility in a live demo.