A release goes live. The feature works exactly as engineering built it. QA signed off. Stakeholders still hate it.

That’s the kind of failure that frustrates product teams most, because nobody was lazy and nobody was careless. The team simply built the wrong thing well.

A behaviour-driven development framework exists to stop that failure mode. It gives product, engineering, and QA a shared way to describe behaviour before implementation starts. That sounds simple. In practice, it changes how teams discuss requirements, define edge cases, and turn business intent into software that behaves as expected.

When teams start adding AI features, the need for that shared language gets even sharper. A vague requirement is already risky in a normal feature. In an AI workflow, vague inputs can produce inconsistent outputs, confused testing, and long review cycles. The more complex the system, the more expensive ambiguity becomes.

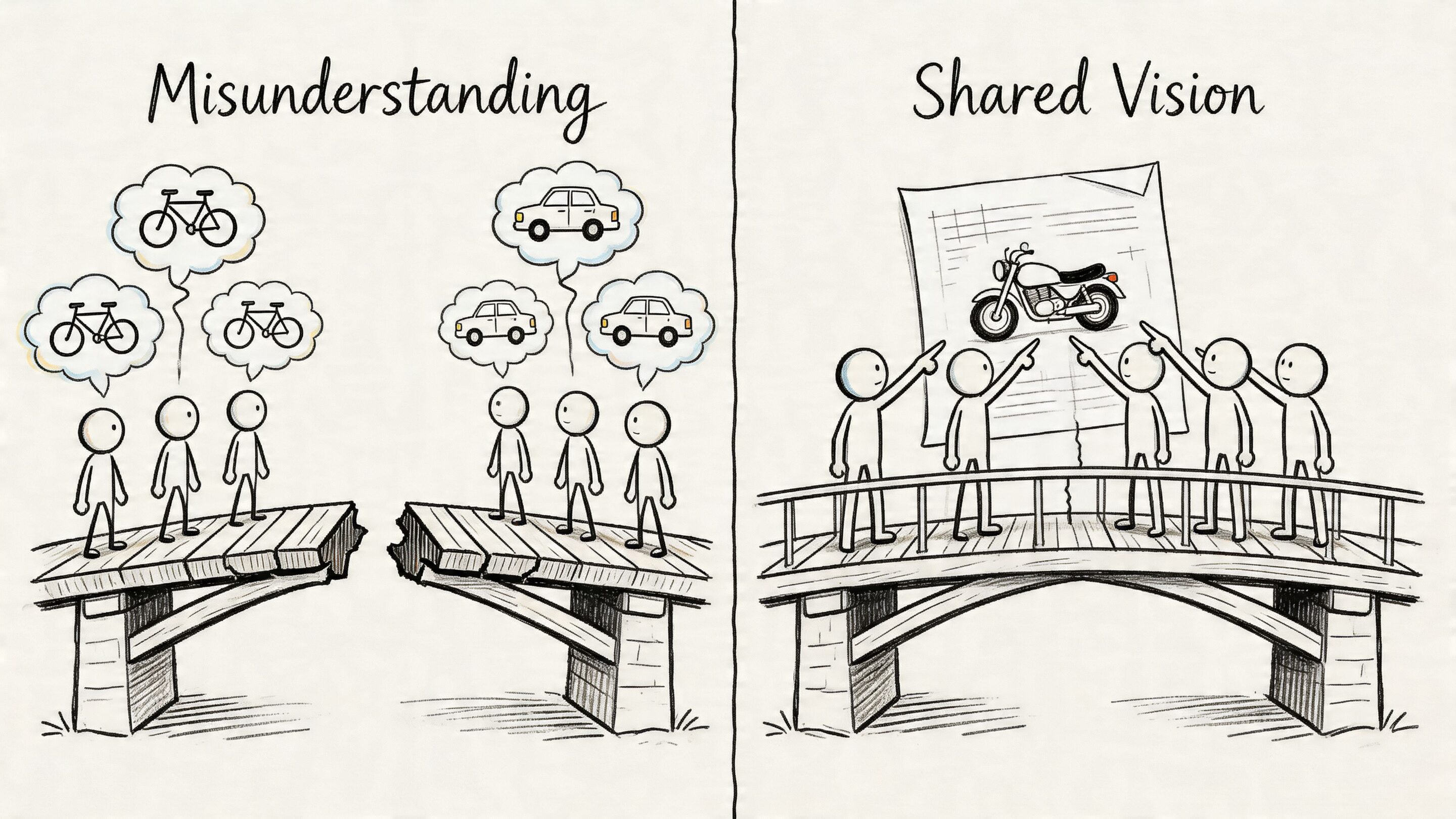

From Misunderstanding to Shared Vision with BDD

A product manager asks for “smart checkout recommendations.” Engineering hears “show related products before payment.” Marketing means “increase average order value without interrupting checkout.” Compliance assumes “don’t surface restricted combinations.” QA gets the ticket after all those assumptions have already hardened into code.

Launch day arrives. The feature technically works. It also annoys users, conflicts with business rules, and triggers internal debate that should have happened two sprints earlier.

That story is common because teams often don’t fail on execution first. They fail on interpretation first.

What BDD is really solving

Behaviour-Driven Development, or BDD, is often introduced as a testing method. That’s true, but incomplete. Its real value is that it creates a structured conversation before code is written.

Instead of saying, “build recommended products,” the team says something closer to this:

- Given a shopper has added running shoes to the cart

- When the checkout page loads

- Then the system shows compatible accessories

- And it doesn’t show products blocked by merchandising rules

That small shift matters. It turns a fuzzy request into observable behaviour.

BDD works best when teams treat scenarios as a conversation artifact first and an automation artifact second.

A strong product team already knows this instinctively. The best teams keep translating between customer needs, business constraints, and implementation detail. If you want a good non-BDD example of that alignment mindset, this piece on high-performing tech teams and top product managers building a bridge to success captures the same leadership habit from another angle.

Why more teams are taking it seriously

This isn’t just a niche QA practice anymore. The BDD testing tools market was valued at $120 million in 2024 and is projected to reach $300 million by 2033, growing at a CAGR of 10.5%, according to this BDD market analysis from TestEvolve.

That matters because markets don’t expand like that unless teams are finding practical value. In sectors like fintech, healthcare, and e-commerce, requirement mistakes are expensive. A behaviour-driven development framework gives teams a disciplined way to catch those mistakes while the fix is still a conversation, not a rewrite.

Where teams usually get confused

Three misconceptions come up repeatedly:

- BDD is not just writing tests. It starts with collaborative discovery.

- BDD is not just Gherkin syntax. The words matter less than the shared understanding behind them.

- BDD is not only for QA. Product managers, analysts, developers, and testers all shape the scenarios.

When teams understand that, BDD stops feeling like extra ceremony and starts feeling like risk prevention.

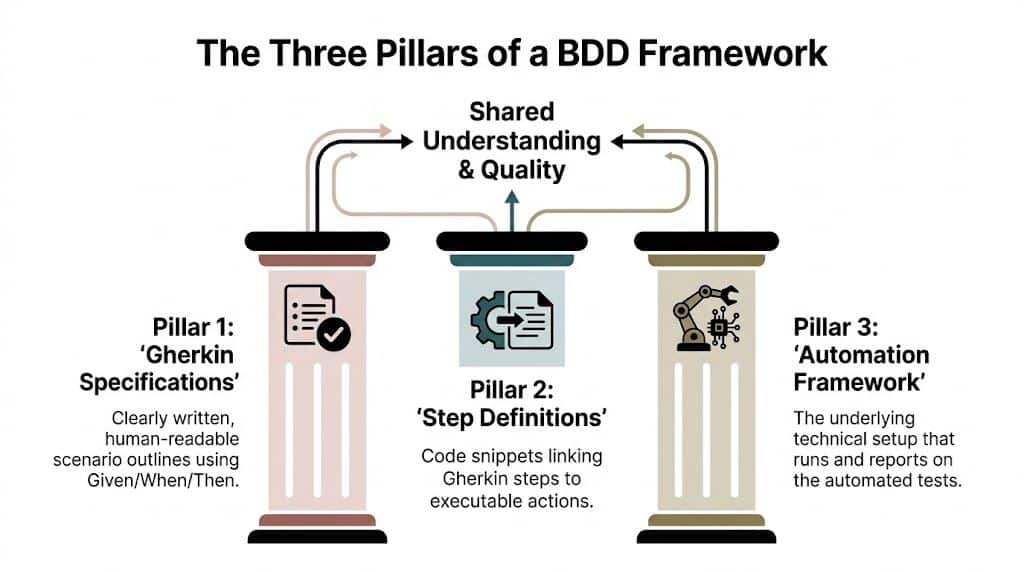

The Three Pillars of a BDD Framework

A behaviour-driven development framework has a lot of moving parts, but most of it becomes easier if you think about cooking.

The Gherkin specification is the recipe card.

The step definitions are the chef’s techniques.

The automation framework is the kitchen equipment that performs the work and reports the outcome.

If any one of those pieces is weak, the dish is unreliable.

Gherkin specifications

This is the part most non-technical stakeholders see first. Gherkin uses a simple structure:

- Given describes the starting context

- When describes the action

- Then describes the expected outcome

Example:

Scenario: Returning customer applies a valid discount code

Given a returning customer has items in the cart

When the customer applies a valid discount code

Then the order total reflects the discount

This format is useful because it forces precision. You can’t hide behind broad wording like “supports discounts properly.” You have to describe what “properly” means.

Step definitions

A Gherkin line is readable by humans, but software still needs instructions. That’s where step definitions come in.

If the scenario says:

When the customer applies a valid discount code

The step definition contains the code that performs that action in the app or API test layer. It might open a page, call a service, submit data, or verify a response.

Product people don’t need to write step definitions, but they should understand the relationship. One plain-language step maps to executable logic. That’s how a business-readable statement becomes a working automated check.
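To make that mapping concrete, here is a minimal sketch of how a plain-language step can be bound to executable logic. It uses only the Python standard library and imitates the idea behind step definitions. It is not the API of Cucumber, SpecFlow, or Behave, and the names `STEP_REGISTRY`, `step`, and `run_step`, along with the discount table, are assumptions made for illustration.

```python
import re

# Hypothetical registry of (compiled pattern, function) pairs.
STEP_REGISTRY = []

def step(pattern):
    """Register a function as the implementation of one plain-language step."""
    def decorator(func):
        STEP_REGISTRY.append((re.compile(pattern), func))
        return func
    return decorator

@step(r'the customer applies the discount code "(?P<code>\w+)"')
def apply_discount_code(context, code):
    # Stand-in for a real page interaction or API call.
    rate = {"SAVE10": 0.10}.get(code, 0.0)  # assumed discount table
    context["total"] = round(context["subtotal"] * (1 - rate), 2)

@step(r"the order total reflects the discount")
def order_total_reflects_discount(context):
    assert context["total"] < context["subtotal"], "discount was not applied"

def run_step(text, context):
    """Execute the step definition whose pattern matches the sentence."""
    for pattern, func in STEP_REGISTRY:
        match = pattern.fullmatch(text)
        if match:
            return func(context, **match.groupdict())
    raise LookupError(f"no step definition matches: {text!r}")

context = {"subtotal": 50.0}
run_step('the customer applies the discount code "SAVE10"', context)
run_step("the order total reflects the discount", context)
print(context["total"])
```

The point for product people is the shape, not the syntax: the sentence stays readable, and the value in quotes is passed into the code as a parameter.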

Practical rule: If a scenario only makes sense to engineers, it’s not doing its job. If it only makes sense to product people and can’t be automated, it’s unfinished.

Automation framework

The third pillar is the runner and supporting tooling. This layer executes the scenarios, links them to step definitions, and reports pass or fail. Tools like Cucumber, SpecFlow, and Behave live here.

This part is less visible in planning meetings, but it’s what turns documentation into repeatable validation. Without automation, scenarios are useful but manual. With automation, they become part of delivery discipline.
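As a rough illustration of what the runner layer does, the sketch below parses a scenario, looks up each step, executes it, and reports a status per step. Real runners are far more capable. The function names and the fixed discount amount here are assumptions, and steps are matched by exact text to keep the example short.

```python
KEYWORDS = ("Given ", "When ", "Then ", "And ", "But ")

def parse_steps(scenario_text):
    """Return the step sentences of a scenario without their Gherkin keywords."""
    steps = []
    for line in scenario_text.splitlines():
        line = line.strip()
        for keyword in KEYWORDS:
            if line.startswith(keyword):
                steps.append(line[len(keyword):])
                break
    return steps

def run_scenario(scenario_text, step_definitions):
    """Run steps in order; return the overall status and a per-step report."""
    context, report, status = {}, [], "passed"
    for sentence in parse_steps(scenario_text):
        func = step_definitions.get(sentence)
        if status != "passed":
            report.append(f"skipped   {sentence}")
        elif func is None:
            status = "undefined"
            report.append(f"undefined {sentence}")
        else:
            try:
                func(context)
                report.append(f"passed    {sentence}")
            except AssertionError as error:
                status = "failed"
                report.append(f"failed    {sentence} ({error})")
    return status, report

SCENARIO = """
Scenario: Returning customer applies a valid discount code
  Given a returning customer has items in the cart
  When the customer applies a valid discount code
  Then the order total reflects the discount
"""

def cart_with_items(ctx):
    ctx["subtotal"] = 80.0

def apply_code(ctx):
    ctx["total"] = ctx["subtotal"] - 8.0  # hypothetical fixed discount

def total_discounted(ctx):
    assert ctx["total"] < ctx["subtotal"], "total was not reduced"

STEPS = {
    "a returning customer has items in the cart": cart_with_items,
    "the customer applies a valid discount code": apply_code,
    "the order total reflects the discount": total_discounted,
}

status, report = run_scenario(SCENARIO, STEPS)
print(status)
print("\n".join(report))
```

A step with no matching definition is reported as undefined rather than silently ignored, which is the behaviour that keeps scenarios and code from drifting apart.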

The process behind the pillars

Cucumber’s BDD guidance describes a three-phase cycle of Discovery, Formulation, and Automation, along with four quality pillars: Conservation of Steps, Conservation of Domain Vocabulary, Elimination of Technical Vocabulary, and Conservation of Proper Abstraction. That same guidance also notes a case where BDD automation covered 68 percent of regression test suites. You can read that in Cucumber’s documentation on BDD.

Here’s the plain-English version of those ideas:

- Discovery means the team talks through examples before building.

- Formulation means those examples become clean scenarios.

- Automation means the scenarios become executable checks.

And the quality pillars push teams to keep scenarios readable, business-focused, and stable over time.

Why this becomes living documentation

Teams often have three documents that drift apart:

- The original requirement

- The test cases

- What the system does

BDD tries to collapse those into one source of truth. When a scenario is both understandable and executable, it does more than verify quality. It preserves intent.

That’s why the three pillars matter. They don’t just support testing. They support memory.

Choosing Your BDD Framework: A Practical Comparison

Once a team agrees on the practice, the next question is usually more tactical. Which tool should we use?

Teams often overcomplicate this choice. Don’t start with feature lists. Start with your current stack, your developer habits, and the people who will maintain the suite six months from now.

Start with team fit, not fashion

A Java team that already uses Cucumber will make different choices than a .NET team living in Visual Studio. A Python-heavy SaaS platform may prefer Behave because it aligns with the team’s language and test ecosystem. A PHP shop may look at Behat because it fits existing patterns.

The “best” framework is usually the one your team can adopt without creating a second culture inside engineering.

Comparison of Popular BDD Frameworks

| Framework | Primary Language/Platform | Key Feature | Best For |
|---|---|---|---|
| Cucumber | Java, JavaScript, Ruby and multi-language ecosystems | Widely recognized Gherkin support and broad ecosystem compatibility | Teams that want a mature, widely used BDD option across multiple stacks |
| SpecFlow | .NET and C# | Strong fit with Visual Studio and Microsoft-centric workflows | Fintech, enterprise, and internal platform teams built around .NET |
| Behave | Python | Simple Python-based Gherkin execution | SaaS products, data-heavy services, and Python-first teams |
| Behat | PHP | Gherkin-based testing for PHP web applications | PHP teams, especially web application projects |
| Gauge | Multi-language support | Flexible specification style and modular design | Teams that want a lighter-weight alternative and cross-stack flexibility |
| Jasmine | JavaScript | BDD-style syntax often used in front-end testing | Front-end teams focused on component and UI behavior in JavaScript |

What to evaluate before you choose

A short checklist usually surfaces the right answer faster than a long debate:

- Language alignment: Pick a framework that matches the language your developers already use daily.

- IDE comfort: Developers are more likely to maintain tests that fit naturally into their editor and build flow.

- CI integration: If the framework creates friction in your pipeline, adoption will stall.

- Community maturity: Strong examples, plugins, and troubleshooting resources reduce setup pain.

- Readability for non-developers: Some tools support the collaboration side better than others in practice.

A few grounded recommendations

If your company runs heavily on .NET, SpecFlow is usually the natural starting point. It keeps the stack coherent, and that matters more than novelty.

If your team spans multiple services and languages, Cucumber often becomes the common denominator. It has broad familiarity, and that reduces the cost of onboarding.

If your backend and automation culture already center on Python, Behave is often easier to introduce than trying to force a different ecosystem onto the team.

Tool choice should reduce translation work, not create more of it.

What product leaders should ask engineering

Non-technical stakeholders don’t need to compare plugin architectures, but they should ask useful questions:

- Will this framework let product and QA read scenarios easily?

- Can the team run scenarios in the delivery pipeline without awkward workarounds?

- Who will own step definition quality and scenario refactoring?

- Does this fit our current platform, or are we creating extra maintenance?

Those questions usually reveal whether the team is choosing a framework for practical reasons or because someone saw a conference demo.

Don’t confuse adoption with success

Teams sometimes celebrate too early because they installed a tool, generated sample feature files, and ran a happy-path demo. That’s setup, not success.

Success looks more ordinary. People use the scenarios during backlog refinement. Developers trust them. QA keeps them current. Product managers read them without needing translation. When that happens, the framework has become part of the product operating system.

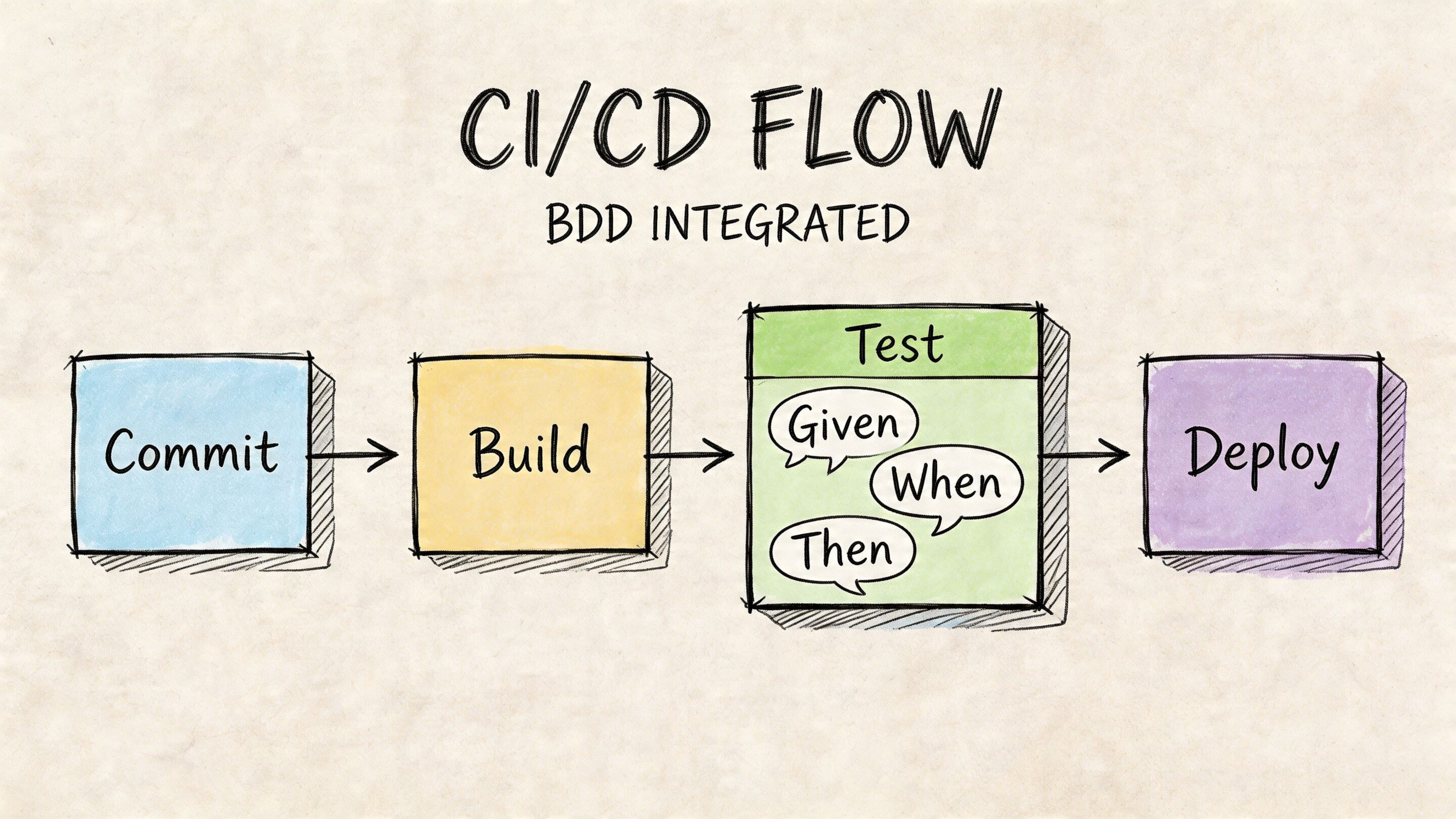

Architecting BDD for Modern CI/CD Pipelines

BDD becomes valuable at scale when it lives inside the delivery pipeline, not in a forgotten test folder that only QA touches before release.

A well-run team stores feature files in version control next to the application code. That keeps behaviour, implementation, and history connected. When someone changes a requirement, the corresponding scenario changes in the same workflow as the code itself.

What the flow looks like in practice

A typical implementation looks like this:

- A product, QA, and engineering conversation defines behaviour

- The team writes or updates Gherkin feature files

- Developers implement or update step definitions

- The code and scenarios are committed together

- The CI pipeline runs the relevant BDD checks

- A passing result becomes a gate for promotion

That gate is the important part. It means the build doesn’t move forward solely because the code compiles. It moves forward because the expected business behaviour still holds.
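The gate can be pictured as a small decision function. The sketch below is an assumption about how such a rule might be expressed, not the configuration of any particular CI system, and `ScenarioResult` and `can_promote` are hypothetical names.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ScenarioResult:
    name: str
    passed: bool
    required_for_release: bool = True

def can_promote(build_compiled, results):
    """Allow promotion only if the build compiled and every required scenario passed.

    Returns (decision, blockers) so the pipeline can report why it stopped.
    """
    if not build_compiled:
        return False, ["build did not compile"]
    blockers = [r.name for r in results if r.required_for_release and not r.passed]
    return (not blockers), blockers

results = [
    ScenarioResult("Shopper applies a valid discount code", passed=True),
    ScenarioResult("Patient books an available appointment slot", passed=False),
]
print(can_promote(True, results))
```

Returning the list of blocking scenarios, rather than a bare yes or no, keeps the promotion signal readable for people outside engineering.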

Why this model works

BDD frameworks use a Gherkin-based DSL architecture that acts as a communication bridge between technical and non-technical contributors. That design helps teams create living documentation that stays synchronized with the codebase through CI pipelines, creating a single source of truth for specification, tests, and documentation. That’s especially useful in regulated environments, as explained in this overview of BDD architecture and Gherkin.

In practical terms, that means:

- The product team sees behaviour in plain language

- Developers see executable mappings

- QA sees repeatable validation

- Release managers see a clearer promotion signal

Pipeline patterns that keep feedback useful

Not every BDD scenario should run at the same point in the pipeline. Mature teams usually split them by purpose.

- Fast smoke scenarios: Run on every commit or pull request

- Core business flows: Run before merge or deploy

- Broader regression behaviour: Run on scheduled or environment-based triggers
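One common way to implement that split is to tag scenarios and let each pipeline trigger select the tags it cares about. The sketch below shows the selection logic in plain Python. The stage names, the tag names, and the third scenario are assumptions for illustration; in practice the tags would live in the feature files and the runner would do the filtering.

```python
# Assumed mapping from pipeline trigger to the tags that run there.
STAGE_TAGS = {
    "pull_request": {"smoke"},
    "pre_deploy": {"smoke", "core"},
    "nightly": {"smoke", "core", "regression"},
}

# Hypothetical scenario inventory; real runners read tags from feature files.
SCENARIOS = [
    {"name": "Shopper places an order with a saved shipping address",
     "tags": {"smoke", "core"}},
    {"name": "Shopper applies a valid discount code to an eligible cart",
     "tags": {"core"}},
    {"name": "Shopper applies an expired discount code",
     "tags": {"regression"}},
]

def select_scenarios(stage, scenarios):
    """Return the names of scenarios whose tags overlap the stage's tags."""
    wanted = STAGE_TAGS[stage]
    return [s["name"] for s in scenarios if s["tags"] & wanted]

for stage in STAGE_TAGS:
    print(stage, len(select_scenarios(stage, SCENARIOS)))
```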

If you want to compare how teams structure different automation flows, these CI/CD pipeline examples are useful for seeing where behavioural checks fit among build, test, and deploy stages.

A scenario that takes too long to run or fails for unstable reasons won’t protect quality. It will train the team to ignore it.

A simple architecture rule

Keep Gherkin files business-facing.

Keep step definitions implementation-aware.

Keep pipeline reporting visible to the whole team.

That separation helps avoid a common mess where feature files start leaking UI selectors, internal object names, or debugging language that only one engineer understands.
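A lightweight check can catch that leakage before review. The sketch below scans feature text for a few signs of implementation detail. The patterns are assumed heuristics, not an established rule set, and a real team would tune them to its own vocabulary.

```python
import re

# Assumed heuristics; tune the patterns to your own product vocabulary.
LEAK_PATTERNS = {
    "selector": re.compile(r"(?<!\w)[#.][A-Za-z][\w-]*"),
    "xpath": re.compile(r"//\w+"),
    "ui mechanics": re.compile(
        r"\b(clicks?|button|dropdown|textbox|iframe)\b", re.IGNORECASE
    ),
}

def find_leaks(step_line):
    """Return the label of every heuristic that the line triggers."""
    return [label for label, pattern in LEAK_PATTERNS.items()
            if pattern.search(step_line)]

def lint_feature(text):
    """Map each offending line number (1-based) to the leaks found on it."""
    findings = {}
    for number, line in enumerate(text.splitlines(), start=1):
        leaks = find_leaks(line)
        if leaks:
            findings[number] = leaks
    return findings

FEATURE = '''Scenario: Shopper applies a discount code
Given a shopper has a cart containing eligible items
When the shopper clicks the "#apply-code" button
Then the order summary shows the discounted total'''

print(lint_feature(FEATURE))
```

Only the third line is flagged here, because it names a selector and a click instead of describing what the shopper is trying to do.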

Teams that want stronger release discipline often pair BDD with broader delivery rules like branch policies, environment parity, and deployment checks. This guide to CI/CD pipeline best practices fits well with that operational layer.

Where teams go wrong

BDD in CI/CD fails when teams treat every scenario as an end-to-end browser test. That creates slow suites, noisy failures, and developer resentment.

A healthier pattern is layered automation. Some scenarios validate API behaviour. Some check domain logic. A smaller set verifies full user journeys. The business language can stay consistent even while the technical execution happens at different layers.

That’s how a behaviour-driven development framework stays useful instead of becoming ceremonial overhead.

Writing Effective BDD Scenarios: Examples for Product Teams

Good BDD scenarios are concrete. They don’t read like legal contracts, and they don’t read like code comments.

The easiest way to learn this is by looking at examples that product teams would discuss in refinement.

Example one: e-commerce checkout

Feature: Apply a discount code during checkout

Scenario: Shopper applies a valid discount code to an eligible cart

Given a shopper has a cart containing eligible items

And the cart subtotal meets the discount rules

When the shopper applies the code "SAVE10"

Then the system accepts the discount code

And the order summary shows the discounted total

Why this works:

- It describes one business behaviour

- It uses domain language like “cart,” “eligible items,” and “order summary”

- It avoids implementation detail such as button IDs or UI layout

Example two: fintech alerting

Feature: Notify customers about unusual account activity

Scenario: Customer receives an alert for an unusual transaction

Given a customer has transaction alerts enabled

When the system detects a transaction outside the customer's normal activity pattern

Then the system creates an account alert

And the customer can review the alert in the app

This is strong because the scenario describes the observable outcome, not the internal fraud model. Product, compliance, and engineering can all discuss the behaviour without getting trapped in algorithm language.

Example three: healthcare booking

Feature: Book a patient appointment

Scenario: Patient books an available appointment slot

Given a patient is signed in

And a clinician has an available appointment slot

When the patient selects the slot and confirms the booking

Then the appointment is reserved for the patient

And the patient sees a booking confirmation

This scenario stays readable because it focuses on the result the user cares about. It doesn’t try to encode scheduling engine mechanics.

What teams should copy from these examples

Strong scenarios usually share a few traits:

- One behaviour at a time: Don’t mix booking, payment, reminders, and cancellation into one scenario.

- Concrete language: Use real terms from the business domain.

- Observable outcomes: Write what the user or system can verify.

- Minimal technical wording: Save technical detail for step definitions.

If your team needs a refresher on sharpening requirements before they ever become Gherkin, this guide to acceptance criteria for user stories is a useful companion.

A bad example and a better rewrite

Bad:

Scenario: Checkout works correctly

Given the user is on checkout

When they do the process

Then the right thing happens

This sounds harmless, but it’s empty. Nobody can automate it well, and nobody can challenge assumptions inside it.

Better:

Scenario: Shopper places an order with a saved shipping address

Given a signed-in shopper has items in the cart

And the shopper has a saved shipping address

When the shopper confirms the order

Then the order is submitted successfully

And the shopper sees an order confirmation page

Write scenarios so a product manager can review them, a tester can validate them, and a developer can automate them without rewriting the meaning.

For teams that want the quality side to stay disciplined, these QA tips for test case planning processes help connect specification quality to broader test planning.

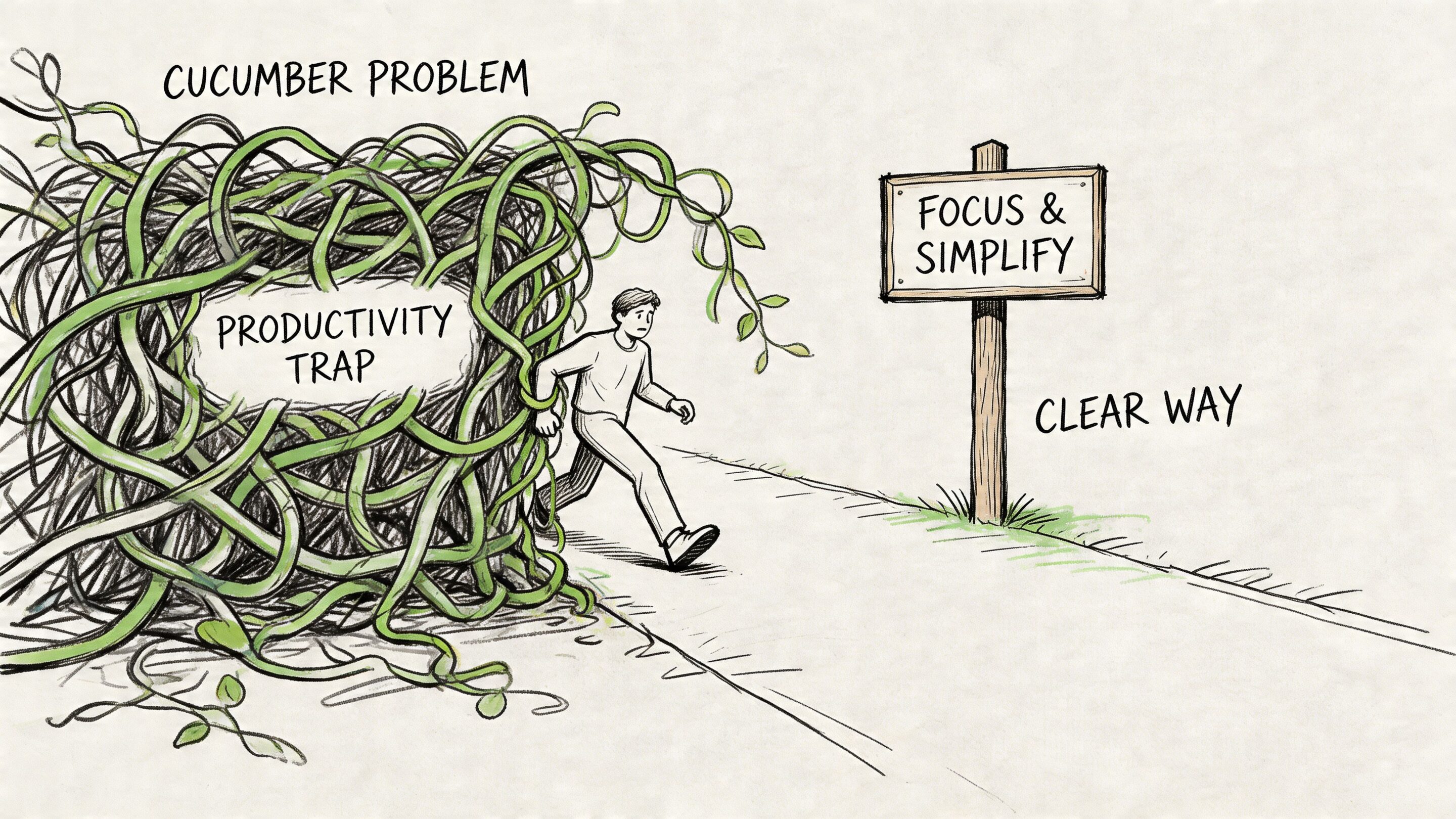

Avoiding the BDD Productivity Trap: Common Pitfalls

BDD can improve delivery. It can also slow teams down when they use it badly.

That’s the uncomfortable truth. A behaviour-driven development framework is not automatically efficient. The same discipline that creates clarity can create drag if the team turns it into paperwork.

The Cucumber problem

One of the best-known BDD failure modes is the Cucumber problem. Teams become obsessed with writing feature files and lose sight of the actual goal, which is shared understanding.

Wikipedia’s BDD overview notes that the two-step process can be “more laborious for developers” and that a focus on tools can distract from collaboration. It also notes maintenance burdens and un-reusable scenarios as recurring implementation challenges in practice. That summary appears in Wikipedia’s article on behavior-driven development.

Common symptoms and cures

Here are the patterns I see most often.

Symptom: Scenarios read like test scripts

Cure: Remove UI clicks, selectors, and workflow trivia from Gherkin. Keep the scenario at the behaviour level.

Symptom: Only QA writes scenarios

Cure: Bring product and engineering into authoring discussions. If only one role writes scenarios, BDD turns back into documentation handoff.

Symptom: Every scenario breaks after minor UI changes

Cure: Push implementation details into step definitions. Keep feature files stable even when screens evolve.

Symptom: Step definitions multiply with tiny wording differences

Cure: Create a shared domain vocabulary and refactor duplicate steps regularly.

Symptom: Developers resent the process

Cure: Run fewer, better scenarios. Don’t automate every conversation artifact.
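The duplicate-step symptom is one of the easier ones to detect mechanically. As a sketch, the standard library’s `difflib` can flag step sentences that differ only slightly, which gives the team a shortlist to merge. The `0.85` threshold is an assumption to tune, not a recommended value.

```python
from difflib import SequenceMatcher
from itertools import combinations

def near_duplicates(steps, threshold=0.85):
    """Return pairs of step sentences whose similarity ratio meets the threshold."""
    pairs = []
    for first, second in combinations(steps, 2):
        ratio = SequenceMatcher(None, first.lower(), second.lower()).ratio()
        if ratio >= threshold:
            pairs.append((first, second, round(ratio, 2)))
    return pairs

STEPS = [
    "the shopper applies a discount code",
    "the shopper applies the discount code",
    "the patient sees a booking confirmation",
]

for first, second, ratio in near_duplicates(STEPS):
    print(f"{ratio}: {first!r} ~ {second!r}")
```

The output is a prompt for a conversation about vocabulary, not an automatic refactor. Two similar sentences sometimes do mean different things.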

A healthier operating rule

Teams get better outcomes when they use BDD selectively on important behaviours:

- Revenue-critical flows

- Compliance-sensitive actions

- High-risk customer journeys

- Areas with repeated misunderstanding between teams

That scope keeps effort focused where shared understanding has the highest payoff.

If your scenarios are harder to maintain than the feature itself, the problem isn’t BDD. It’s how the team is modeling behaviour.

What good discipline looks like

A productive BDD practice is usually boring in the best sense. The scenarios are short. The wording is stable. Product can read them. Developers can run them. QA trusts the failures.

When that’s true, BDD supports delivery. When it isn’t, the framework becomes theater.

Driving Adoption and Measuring BDD's Real-World Impact

The hardest part of BDD usually isn’t syntax. It’s proving the discipline is worth the effort and introducing it without triggering backlash.

That challenge gets sharper in regulated work. Healthcare, fintech, and similar teams can’t rely on vague claims like “we feel more aligned now.” Leaders need evidence tied to delivery risk, quality, and operational recovery.

How to introduce BDD without chaos

A good rollout is usually narrow and deliberate.

Start with one product area that has clear cross-functional pain. Good candidates include checkout, onboarding, approvals, fraud review, patient workflows, or any flow where requirements often bounce between product, engineering, and QA.

Then use a small adoption pattern:

- Run Three Amigos conversations: Product, engineering, and QA define examples together.

- Choose one tool that fits the stack: Don’t start with multiple frameworks.

- Automate a small set of critical scenarios: Prove the practice on important behaviour, not on everything.

- Review scenario quality regularly: Keep language stable and business-focused.

- Show results in team rituals: Use scenarios in refinement, demos, and release checks.

What to measure

A useful BDD scorecard usually tracks a mix of product and engineering outcomes:

| Metric area | What to look for |
|---|---|
| Requirement quality | Fewer bugs traced back to misunderstood requirements |
| Delivery flow | Less churn during implementation and review |
| Incident response | Faster diagnosis when behaviour regresses |
| Onboarding | New team members understanding key flows more quickly |
| Compliance confidence | Clearer evidence of expected system behaviour |

You don’t need a complicated dashboard on day one. You do need consistency. Pick a few signals and review them over time.

A practical challenge here is that many BDD discussions stay philosophical and never reach ROI. That gap is especially visible in regulated industries. One source discussing this problem notes that teams often need risk-adjusted KPIs, including examples such as a 40% reduction in Mean Time To Resolution (MTTR) observed in some fintech pilots, while also pointing out that most content still lacks strong guidance on BDD ROI measurement. See monday.com’s discussion of BDD and ROI questions.

Because the broader guidance is still thin, teams should build their own measurement discipline around the business risks they face.
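A home-grown scorecard can start with very little arithmetic. The sketch below computes MTTR from per-incident resolution times, the relative change between two periods, and the share of bugs traced to misunderstood requirements. All figures are illustrative placeholders, not measurements from any real team, and the cause labels are assumed.

```python
from statistics import mean

def mttr_hours(resolution_hours):
    """Mean time to resolution: the average of per-incident resolution times."""
    return mean(resolution_hours)

def percent_change(before, after):
    """Relative change from before to after, in percent (negative = reduction)."""
    return (after - before) / before * 100

def misunderstanding_share(bug_causes):
    """Fraction of bugs whose root cause was tagged 'misunderstood requirement'."""
    return bug_causes.count("misunderstood requirement") / len(bug_causes)

# Illustrative numbers only.
before = mttr_hours([10, 6, 8])
after = mttr_hours([5, 7, 6])
print(before, after, percent_change(before, after))

causes = ["misunderstood requirement", "coding error",
          "misunderstood requirement", "environment"]
print(misunderstanding_share(causes))
```

What matters is that the same calculation is repeated each quarter on the same definitions, so the trend is comparable over time.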

Why this matters even more for AI modernization

AI features make behaviour specification harder, not easier.

A standard feature has deterministic logic in many places. An AI-assisted workflow often involves prompts, parameters, model choices, fallback handling, logging, review states, and cost controls. If those behaviours are vague, teams struggle to test them, audit them, and improve them safely.

That’s why BDD is useful beyond classic test automation. The same habit of defining expected behaviour in shared language applies to AI-enabled products. Product teams still need to answer clear questions:

- What should the model do in this context?

- What inputs are allowed?

- What output counts as acceptable?

- What gets logged?

- What happens when the response is incomplete or risky?

Those aren’t abstract AI questions. They’re behaviour questions.
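Those questions translate directly into checkable behaviour. The sketch below wraps a model call with an input rule, an acceptance rule, logging, and a fallback. The `model` argument is any callable standing in for a real AI service, and the limits, blocked phrase, and fallback text are assumptions made for illustration.

```python
MAX_INPUT_CHARS = 500                    # assumed limit on allowed input
BLOCKED_TERMS = {"guaranteed returns"}   # assumed phrases compliance forbids
FALLBACK = "Sorry, I can't help with that. A team member will follow up."

def is_acceptable(output):
    """An output is acceptable if it is non-empty and contains no blocked term."""
    text = output.strip().lower()
    return bool(text) and not any(term in text for term in BLOCKED_TERMS)

def answer(user_input, model, log):
    """Apply the input rule, call the model, log the outcome, fall back if needed."""
    if not user_input.strip() or len(user_input) > MAX_INPUT_CHARS:
        log.append({"event": "rejected_input", "chars": len(user_input)})
        return FALLBACK
    output = model(user_input)
    accepted = is_acceptable(output)
    log.append({"event": "model_response", "accepted": accepted})
    return output if accepted else FALLBACK

log = []
print(answer("What fees apply to my account?", lambda q: "Monthly fee: none.", log))
print(answer("Should I invest?", lambda q: "This fund has guaranteed returns!", log))
print(log)
```

Each branch corresponds to a scenario the team could write in Given/When/Then form: a rejected input, an accepted response, and a risky response replaced by the fallback.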

The leadership takeaway

BDD is worth adopting when you treat it as a product alignment system, not a documentation exercise. The payoff comes from fewer misunderstandings, clearer release gates, and a stronger connection between business intent and shipped behaviour.

That’s also why modern teams are starting to apply similar discipline to prompt management and AI operations. Clear behaviour definitions, version control, logging, parameter handling, and cost visibility all support the same goal. Build the right thing, validate it continuously, and make changes safely.

If you're modernizing a product with AI features, or you want tighter control over how AI behaviors are defined and managed, Wonderment Apps can help. Their team works with organizations building scalable web and mobile software, and they’ve developed an administrative prompt management system with a versioned prompt vault, parameter management for internal database access, logging across integrated AI systems, and cost visibility tools. If your team wants a clearer, more auditable way to manage AI behavior inside existing apps, request a demo and see how it fits your product workflow.