The question usually arrives late.

A leadership team has already approved an AI initiative. Product wants personalization in the app. Operations wants workflow automation. Engineering wants something maintainable. Then someone asks the deceptively simple version of the actual problem: what’s the best language for AI programming?

That question matters because language choice affects more than model training. It shapes hiring, delivery speed, infrastructure costs, compliance posture, and how painful your next modernization phase will be. Teams often learn this the hard way. They prototype quickly in one stack, then discover production latency, integration friction, or governance gaps after the demo succeeds.

The code is only part of the system. Once AI enters a real product, teams also need versioned prompts, controlled access to internal data, logging across model calls, and visibility into cumulative spend. Without that management layer, even a smart language choice turns into an expensive tangle.

Navigating the AI Programming Language Maze

A common scenario looks like this. An ecommerce company wants product recommendations in web and mobile apps. The data team prefers Python because the model ecosystem is mature and the iteration cycle is fast. The platform team worries about latency, traffic spikes, and integration with existing services. The CTO wants to avoid building a flashy prototype that becomes technical debt six months later.

That tension is real. The best language for AI programming isn't a universal answer. It depends on whether you're optimizing for experimentation, runtime performance, enterprise integration, browser delivery, or long-term staffing.

The business problem behind the technical question

Business leaders often hear shorthand advice. Python is easy. C++ is fast. Java is enterprise-safe. None of that is wrong, but none of it is enough to make a stack decision.

A useful evaluation asks different questions:

- How fast can your team ship the first valuable version?

- How expensive will it be to hire and retain the right engineers?

- How well will the AI layer fit your existing architecture?

- How painful will maintenance become once prompts, model updates, and logging enter the picture?

The wrong language rarely fails on day one. It fails when the prototype meets real users, security reviews, and quarterly roadmap pressure.

Why this choice gets harder after the first launch

AI models are rarely deployed in isolation. They connect to customer data, workflows, product surfaces, and analytics. That creates a second layer of complexity beyond the code itself. Prompts need versioning. Parameters need control. Logs need to be centralized. Spend needs to be visible before finance asks why model usage climbed.

That’s why language selection should be treated as part of a broader modernization strategy, not an isolated engineering preference. The language should support the product you’re building. The operating model around it should keep that product reliable.

Meet the Main Contenders for AI Development

A retail team wants an AI assistant in the customer portal, fraud scoring in the backend, and tighter control over cloud spend before traffic scales. At that point, the language question stops being academic. It becomes a hiring, delivery, and maintenance decision.

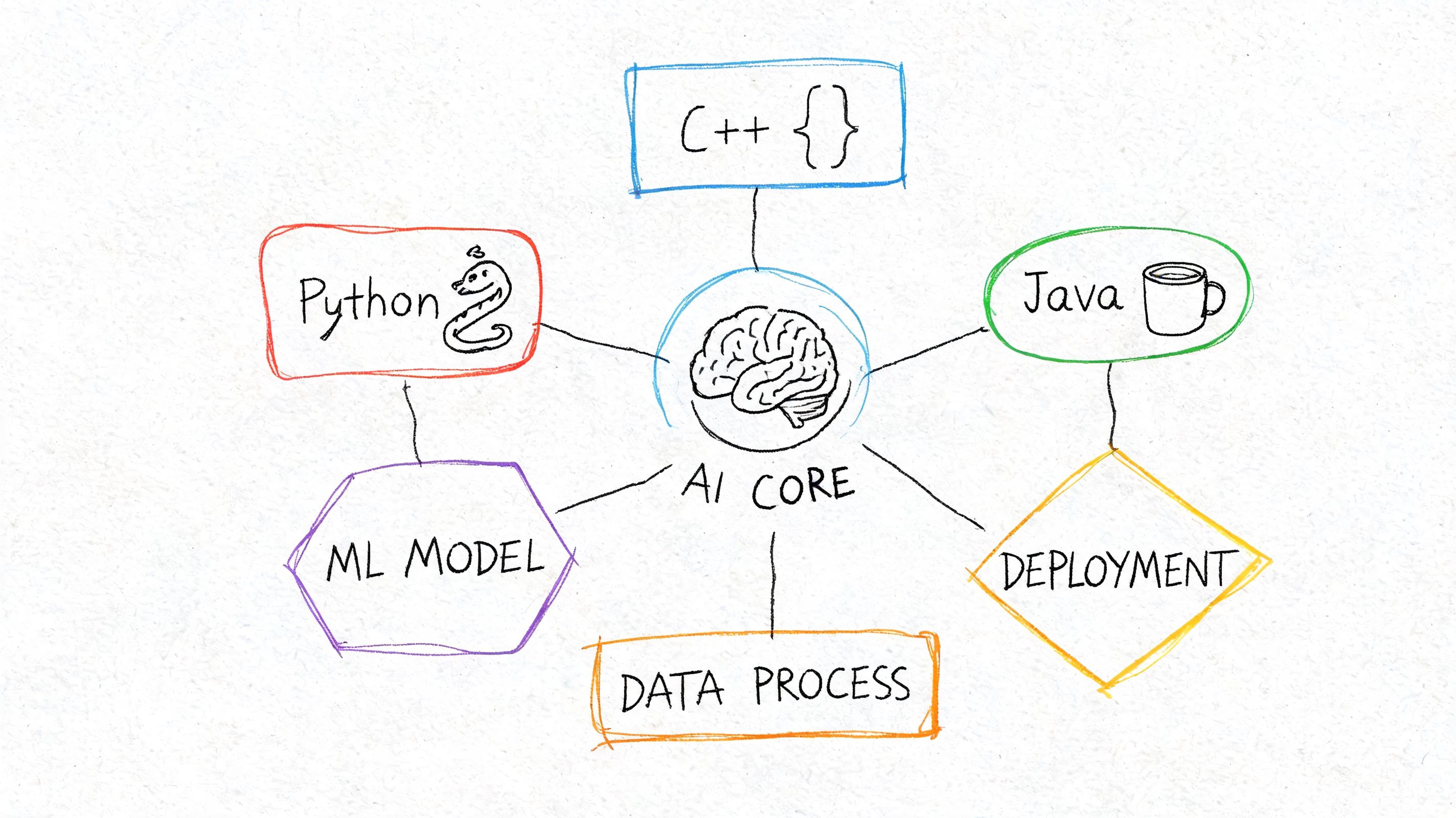

For most companies, a shortlist starts with Python, C++, and Java. Then a smaller group of languages enters the discussion for specific jobs such as browser inference, infrastructure services, or safety-critical components.

Quick comparison

| Language | Best fit | Strength | Main trade-off |
|---|---|---|---|
| Python | Model development, data workflows, rapid product delivery | Broad AI tooling and the easiest path from prototype to usable feature | Slower execution in performance-heavy workloads |
| C++ | Real-time inference, edge systems, robotics, low-latency services | Speed, memory control, and strong hardware access | Higher engineering cost and longer development cycles |
| Java | Enterprise integration, backend AI services, regulated environments | Stable operations, mature tooling, and alignment with existing systems | Less convenient for experimentation and research workflows |
| JavaScript | Browser-based AI experiences | Native web delivery and direct user-side interaction | Limited role in training and advanced model development |
| R | Statistical analysis and research-heavy work | Strong analytical and visualization workflows | Weak fit for large production AI systems |
| Go | AI infrastructure and cloud services | Good for APIs, orchestration, and DevOps-heavy environments | Smaller ecosystem for model development |
| Rust | Systems components needing safety and performance | Memory safety with strong runtime performance | Harder hiring market and slower adoption inside typical product teams |

Python is usually the fastest path to business value

Python stays at the center of AI development because it reduces coordination cost across data science, ML engineering, and product teams. One language can cover data preparation, training, evaluation, orchestration, and service layers well enough to get a product into users' hands quickly.

That speed matters more than benchmark wins in the early phases. Teams can hire from a larger market, onboard faster, and use mature frameworks without building custom plumbing. It also pairs well with the operational side of modern AI work, especially if your team is already building stronger knowledge management practices for AI systems around prompts, context, and model behavior.

C++ earns its place when latency is expensive

C++ is the right call when a few extra milliseconds affect revenue, safety, or device performance. I recommend it for edge inference, robotics, embedded systems, and high-throughput serving paths where infrastructure cost and response time justify the added complexity.

The trade-off is organizational, not just technical. C++ talent is harder to hire for product-oriented AI work, development moves slower, and maintenance requires more specialized engineering discipline. For many companies, that means C++ belongs in the performance-critical layer, not across the whole stack.

Java fits companies that already run on Java

Java works well for AI-enabled services inside enterprises with existing Java platforms, security controls, and integration standards. That choice can reduce rewrite risk, simplify governance, and keep AI features closer to the systems that already run the business.

This is often the practical move in regulated environments. A slightly less convenient ML workflow can be an acceptable trade if it lowers operational risk and avoids creating a disconnected AI team with its own tooling, deployment model, and support burden.

The specialist languages matter in the right slot

Some languages are not primary choices for end-to-end AI delivery, but they solve specific problems well:

- JavaScript for browser-based AI features, lightweight inference, and interactive product experiences

- R for statistical research, modeling exploration, and analyst-led workflows

- Go for cloud services, model gateways, infrastructure tooling, and concurrent backend jobs

- Rust for performance-sensitive components where memory safety and long-term reliability matter

The strongest AI stacks rarely force one language into every layer. They choose a default language for delivery speed, then add specialist tools only where the business case is clear.

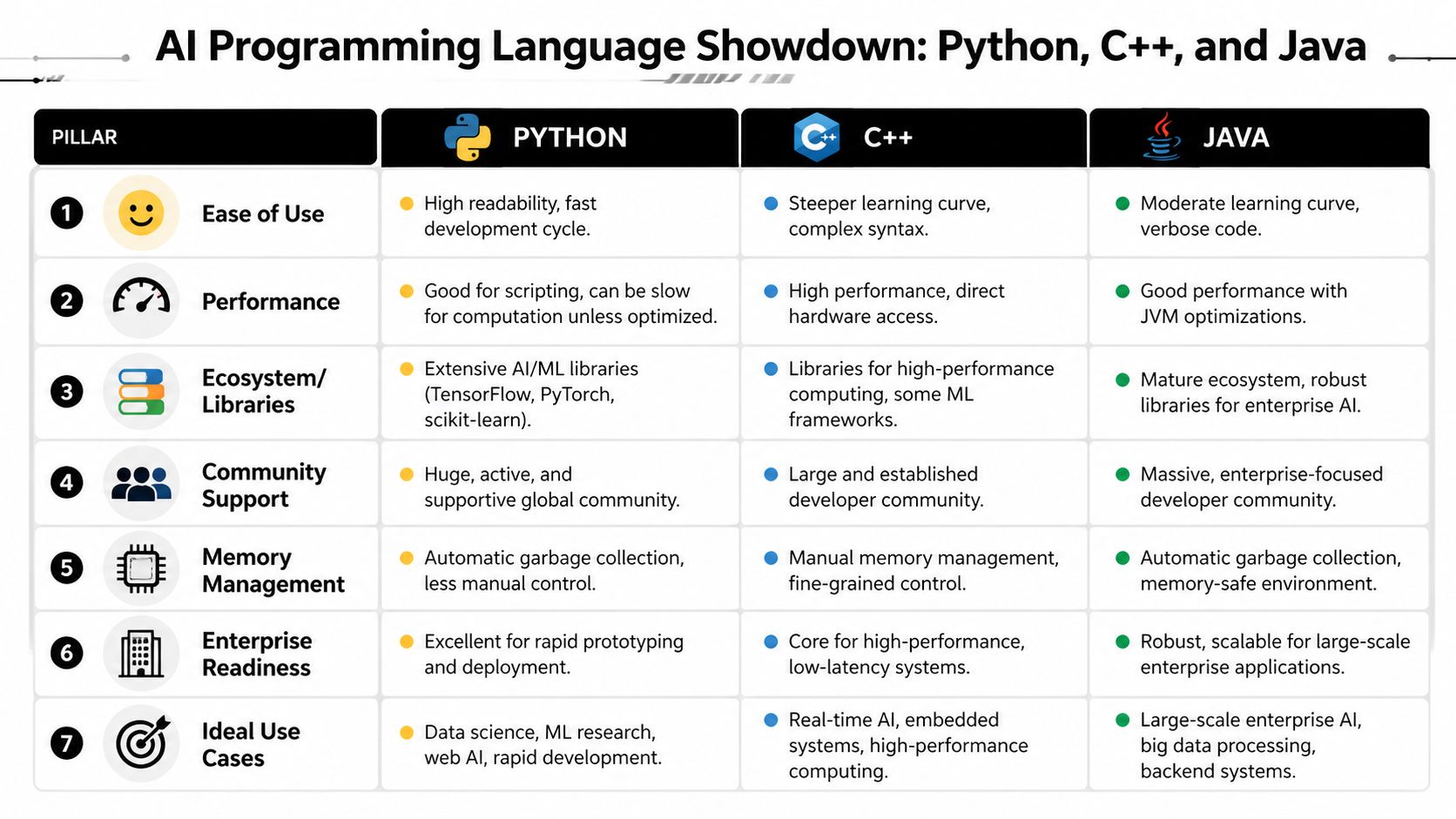

The Seven Pillars of a Smart AI Language Choice

A language decision should survive beyond the pilot. That means evaluating it against operating reality, not just developer preference. The seven pillars below are the ones that usually determine whether an AI stack ages well or turns brittle.

Ecosystem and libraries

The first question is simple. Can your team build the feature without inventing core plumbing?

Python often wins this pillar because so much AI work already depends on established packages for training, data manipulation, experimentation, and deployment. But ecosystem fit isn't just about model libraries. It also includes testing tools, observability, package maturity, and integration patterns with your current backend.

Performance and inference speed

Some teams overweight this. Others ignore it until users notice.

If you're serving recommendations inside a retail app, analyzing fraud signals in real time, or running edge inference, language-level performance affects user experience and infrastructure design. As SitePoint’s discussion of AI language selection by deployment context notes, the right language varies sharply by deployment pattern. Python has computational speed limitations in performance-intensive scenarios, C++ is often a better fit there, and Go plays an important role in cloud infrastructure because Kubernetes and Docker are written in it.

Prototyping versus production

Fast starts are valuable. So is surviving launch.

A language can be excellent for experimentation and awkward in production. Another can be painful for research but excellent for stable services. Teams that separate those two phases make better decisions.

- Prototype stack: Optimize for learning speed, model iteration, and lower engineering friction.

- Production stack: Optimize for latency, service boundaries, observability, and controlled releases.

- Bridge plan: Decide early whether the prototype language will remain in production or hand off to another runtime.
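A bridge plan is easier to keep when product code depends on a contract rather than on a specific runtime. The sketch below is a minimal illustration, not a prescribed design: `Recommender`, `PrototypeRecommender`, `RemoteRecommender`, and `render_home_page` are hypothetical names, and the `transport` callable stands in for whatever HTTP or gRPC client a real system would use.

```python
from collections.abc import Callable
from typing import Protocol


class Recommender(Protocol):
    """Contract the product code depends on, regardless of runtime."""

    def recommend(self, user_id: str, limit: int) -> list[str]: ...


class PrototypeRecommender:
    """Prototype stack: in-process Python, optimized for iteration speed."""

    def __init__(self, popular_items: list[str]) -> None:
        self._popular_items = popular_items

    def recommend(self, user_id: str, limit: int) -> list[str]:
        # Placeholder logic: a real prototype would call a trained model here.
        return self._popular_items[:limit]


class RemoteRecommender:
    """Production stack: delegates to a separately deployed serving runtime."""

    def __init__(self, transport: Callable[[dict], dict]) -> None:
        # `transport` is an assumption: any callable that takes a request
        # dict and returns a response dict with an "items" list.
        self._transport = transport

    def recommend(self, user_id: str, limit: int) -> list[str]:
        response = self._transport({"user_id": user_id, "limit": limit})
        return list(response["items"])


def render_home_page(recommender: Recommender, user_id: str) -> list[str]:
    # Product code sees only the contract, so moving from the prototype
    # to another runtime is a wiring change rather than a rewrite.
    return recommender.recommend(user_id, limit=3)
```

If the prototype language stays in production, only `PrototypeRecommender` evolves. If serving moves to another runtime, `RemoteRecommender` takes over and callers such as `render_home_page` stay unchanged.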

Deployment and MLOps tooling

AI products don't stop at code completion. They need packaging, monitoring, rollback paths, reproducibility, and runtime management. Deployment fit includes container support, CI/CD maturity, model serving patterns, and how easily teams can operate mixed systems.

If your organization is still building AI fluency, a good next read is this overview of knowledge in artificial intelligence, especially for teams aligning product strategy with implementation realities.

Hiring and maintenance costs

This pillar is where many executive decisions become obvious.

A language with broad hiring availability can reduce delivery risk. A language that only a few specialists want to maintain can raise long-term cost even if it solves a narrow technical problem elegantly. Maintenance also includes onboarding, code readability, testing culture, and how much institutional knowledge the stack requires.

Practical rule: If a language choice improves benchmark performance but narrows your hiring pool and slows maintenance, the business case may still be weak.

Security and compliance

Regulated industries care about more than model quality. They need auditability, dependable service behavior, and integration with existing controls. A language that aligns with enterprise security practices may be the better business decision even if it isn't the preferred research environment.

AI-assisted development productivity

One more pillar has become impossible to ignore. How well does the language work with AI coding tools?

Code generation, test writing, refactoring, and maintenance are increasingly part of delivery speed. Language choice now affects not only runtime behavior, but also how effectively engineers can use coding agents without creating brittle output.
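One way to make the seven pillars comparable across candidates is a weighted scoring sheet. The sketch below assumes a 1-to-5 score per pillar and weights chosen by your own team; the pillar keys and the `weighted_score` function are illustrative names, and no scores for real languages are implied.

```python
# Pillar keys mirror the seven pillars in this section (names are illustrative).
PILLARS = (
    "ecosystem",
    "performance",
    "prototype_to_production",
    "deployment_and_mlops",
    "hiring_and_maintenance",
    "security_and_compliance",
    "ai_assisted_productivity",
)


def weighted_score(weights: dict[str, float], scores: dict[str, int]) -> float:
    """Return the weight-normalized average of per-pillar scores."""
    missing = [p for p in PILLARS if p not in weights or p not in scores]
    if missing:
        raise ValueError(f"missing pillars: {missing}")
    total_weight = sum(weights[p] for p in PILLARS)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(weights[p] * scores[p] for p in PILLARS) / total_weight


# Placeholder inputs: hiring counts double, and the candidate scores 3
# everywhere except hiring, where it scores 5.
weights = {p: 1.0 for p in PILLARS} | {"hiring_and_maintenance": 2.0}
candidate_a = {p: 3 for p in PILLARS} | {"hiring_and_maintenance": 5}
print(weighted_score(weights, candidate_a))  # (6*3 + 2*5) / 8 = 3.5
```

The number itself matters less than the conversation it forces: agreeing on weights makes the business priorities explicit before anyone argues about a specific language.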

A Deep Dive Comparison of Python, C++, and Java

A client asks for one language choice for an AI product. The product team wants Python. The platform team prefers Java because the company already runs core services on the JVM. The CTO is worried about inference latency and asks whether C++ should be in the plan from day one.

That is the real decision. It is rarely about benchmark bragging rights alone. Language choice affects hiring speed, cloud cost, deployment risk, model iteration time, and how expensive the system becomes to maintain two years from now.

Python for fast iteration and the broadest AI talent pool

Python remains the default starting point for commercial AI work because it reduces delivery friction across the full model lifecycle. Research, data preparation, experimentation, training, evaluation, and early API development all happen faster when the team uses the language that dominates modern ML tooling.

The practical advantage is not just syntax. It is the surrounding market.

- Library coverage: PyTorch, TensorFlow, scikit-learn, Pandas, NumPy, and most LLM tooling reach Python first.

- Hiring range: You can staff Python across data science, ML engineering, analytics, and many backend roles.

- Vendor compatibility: Managed AI platforms, notebooks, orchestration tools, and hosted inference products usually treat Python as the first-class path.

- Lower rewrite pressure early: Teams can validate whether the model creates business value before investing in lower-level optimization.

I recommend Python first when the main risk is product discovery. That includes new recommendation systems, internal copilots, document extraction workflows, and most first-generation AI features.

Its weakness is clear. Python is often not the final answer for the hottest runtime path. Teams still get strong performance by relying on optimized libraries underneath, but once latency, memory use, or hardware control become the product constraint, Python stops being enough by itself.
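The "optimized libraries underneath" point can be seen even with the standard library alone. The sketch below computes the same dot product twice: once with the loop running as Python bytecode, and once with iteration and multiplication pushed into built-ins that CPython implements in C. The function names are illustrative, and libraries such as NumPy or PyTorch apply the same idea at much larger scale.

```python
import operator
from collections.abc import Sequence


def dot_interpreted(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Dot product with the loop executed as Python bytecode."""
    total = 0.0
    for x, y in zip(xs, ys):
        total += x * y
    return total


def dot_delegated(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Same computation, with the loop driven by C-implemented built-ins."""
    # map, operator.mul, and sum are all implemented in C in CPython,
    # so no Python-level loop body runs per element.
    return sum(map(operator.mul, xs, ys), 0.0)
```

Timing both functions with `timeit` on your own hardware is the honest way to see the gap; the size of the difference depends on the interpreter version and the data. On long float inputs the two results can also differ in the last digits, because recent Python versions sum floats more accurately inside `sum`.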

Python is the fastest way to learn whether the AI feature should exist at all.

C++ for products where latency and throughput shape revenue

C++ earns its place when runtime efficiency affects user experience, infrastructure cost, or system safety. That is common in robotics, embedded AI, high-volume inference, computer vision, and platforms serving predictions at large scale.

C++ gives engineers direct control over memory and execution, but it also raises the bar for hiring, code review, debugging, and long-term maintenance. A team that reaches for C++ too early can slow product learning and increase staffing risk. A team that avoids it when low latency matters can end up paying for oversized infrastructure or missing product requirements.

In practice, I usually see C++ justified in three cases:

- Inference speed is part of the product promise

- Hardware constraints are tight

- The system runs at enough scale that efficiency changes unit economics

Those are strong reasons. “It is faster” on its own is not.

Java for enterprise continuity and lower integration risk

Java is often the most underrated option in AI programs because executives frame the decision as model development instead of system ownership. Many companies do not need the language that feels best in a notebook. They need the language that fits identity management, audit logging, transaction flows, service governance, and existing operations teams.

That is where Java wins.

Java works well when AI is one capability inside a larger business platform. A common pattern is training and experimentation in Python, then serving, orchestrating, or embedding that intelligence inside Java services already used by operations, compliance, billing, or customer-facing applications. For companies with existing JVM investments, this can cut integration time and reduce change-management overhead.

Java also fits naturally with teams already running distributed data systems on the JVM. If your platform strategy includes Spark-based data processing, this overview of Scala, Hadoop, and Spark for large-scale data engineering is a useful reference point because language choice in AI often follows the data platform that already exists.

Comparing the three where business impact shows up

Ecosystem and developer workflow

Python leads for model development because the examples, libraries, and community knowledge are hard to match. New AI frameworks usually become usable in Python first. That shortens evaluation cycles and reduces experimentation cost.

Java has a mature tooling story for enterprise services, testing, observability, and governance. C++ remains strong for systems programming and framework internals, but the day-to-day workflow is heavier and usually slower for product iteration.

| Pillar | Python | C++ | Java |
|---|---|---|---|
| Ecosystem | Best fit for model development, data work, and AI experimentation | Best fit for low-level optimization and performance-sensitive components | Best fit for enterprise services, governance, and large existing JVM estates |
| Learning curve | Lowest | Highest | Moderate |
| Typical first use | Prototyping, model training, evaluation | Runtime hotspots, embedded systems, high-performance inference | Integration, orchestration, APIs, business workflows |

Runtime performance and infrastructure cost

This is where teams make expensive mistakes.

If a system serves millions of inferences, small runtime differences become cloud spend differences. If a product depends on real-time interaction, latency becomes a UX issue, not an engineering preference. C++ deserves serious consideration early in those environments because late rewrites are expensive and usually happen under pressure.

Java sits in a useful middle ground. It offers predictable production behavior and fits well with service-oriented architectures. Python remains highly productive, but compute-heavy paths often need compiled extensions, external serving layers, or selective rewrites to hit production targets.
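The link between per-request runtime and cloud spend is simple arithmetic. The sketch below is a rough model under stated assumptions: each instance handles one request at a time, instances are billed per hour, and capacity is provisioned to a target utilization. The function name and every number in the example are hypothetical placeholders, not measurements.

```python
def monthly_compute_cost(
    requests_per_month: int,
    seconds_per_request: float,
    hourly_rate_usd: float,
    target_utilization: float = 0.5,
) -> float:
    """Estimate monthly serving cost for a single-request-at-a-time instance type."""
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    # Hours of pure compute needed to serve all requests.
    busy_hours = requests_per_month * seconds_per_request / 3600
    # Headroom: provision more hours than are strictly busy.
    provisioned_hours = busy_hours / target_utilization
    return provisioned_hours * hourly_rate_usd


# Placeholder scenario: 100 million inferences per month at $1.00 per instance-hour.
slow = monthly_compute_cost(100_000_000, 0.050, 1.00)  # 50 ms per inference
fast = monthly_compute_cost(100_000_000, 0.020, 1.00)  # 20 ms per inference
print(f"slow path: ${slow:,.0f}  fast path: ${fast:,.0f}  ratio: {slow / fast:.1f}x")
```

Under this model, cost scales linearly with per-request time, so a 30 ms saving that looks trivial in a benchmark becomes a 2.5x difference in the serving bill. Real systems batch requests and autoscale, so treat the output as an order-of-magnitude check rather than a forecast.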

Prototype speed versus production durability

Python is usually the fastest path to a working model. That matters because many AI ideas fail at the business stage, not the engineering stage. Fast feedback protects budget.

Java becomes more attractive once the work shifts from “can we build this” to “can we run this safely inside a real business system.” C++ becomes attractive when production constraints are already known and difficult to change later.

A mature architecture often uses all three selectively:

- Python for experimentation, feature engineering, training, and evaluation

- Java for workflow integration, policy enforcement, APIs, and enterprise service layers

- C++ for the narrow parts of the system where runtime efficiency justifies specialized engineering effort

That is usually a sign of discipline, not stack sprawl.

Hiring, onboarding, and maintenance cost

Python is the safest staffing choice for most companies. The hiring market is broad, onboarding is faster, and cross-functional collaboration is easier. That lowers delivery risk.

Java can be the cheaper long-term decision in organizations that already have strong JVM teams, established service templates, and mature security processes. Reusing that internal capability often beats introducing a new stack for marginal technical elegance.

C++ should be chosen with intent. Strong C++ engineers are harder to hire and harder to replace. If the business benefit is real, the cost is justified. If the product does not need that level of control, the maintenance burden lingers long after the architecture review ends.

Compliance, governance, and operational fit

Regulated environments usually reward consistency more than novelty. Java often performs well here because enterprise teams already know how to monitor it, secure it, and document it. Python can still work very well, especially behind clearly defined service boundaries, but it benefits from stronger platform discipline in production. C++ fits specialized systems where predictable performance and low-level control matter more than broad developer access.

AI-assisted development productivity

AI coding tools change the economics of language choice at the team level. Languages with clear conventions, large public code corpora, and mainstream tooling tend to work better with code generation, test scaffolding, and refactoring assistance.

Python benefits directly from that trend. So does Java. If your product also includes browser-side AI features or front-end integration work, the practical guide for JavaScript AI is useful context because many modern AI systems split responsibilities across backend model services and user-facing application layers.

What usually works in practice

Python is the best first choice for most AI initiatives because it reduces time to insight and widens the hiring pool.

Java is often the best operational choice for enterprises that need AI to fit existing systems, controls, and service teams.

C++ is the right investment when performance affects revenue, safety, or platform cost in a measurable way.

The wrong move is forcing one language to do every job. The better approach is to decide where the business needs learning speed, where it needs operational continuity, and where it needs raw execution efficiency, then assign the language accordingly.

Specialized and Emerging AI Programming Languages

A team can make the right call on Python, Java, or C++ and still overspend on the overall AI stack. That usually happens when the product needs research workflows, browser delivery, data infrastructure, or safety-critical services that the core language choice does not cover well on its own.

That is where specialized languages earn their place. Not as defaults, but as targeted tools that reduce delivery risk, hiring friction, or operating cost in specific parts of the system.

R and JavaScript solve different business problems

R still earns a spot in statistically intensive environments. I see it work best with research groups, data science teams, and internal analytics functions that care more about modeling depth and exploratory work than long-term application engineering. The trade-off is predictable. Once the same code needs CI pipelines, service contracts, monitoring, and a broader hiring pool, R becomes harder to standardize.

JavaScript matters for the opposite reason. AI features increasingly live inside the product experience itself, including browser-side inference, copilots, search interactions, and personalization logic that has to respond close to the user. In those cases, JavaScript can cut deployment complexity and reduce handoffs between front-end and ML teams.

If your roadmap includes browser-based AI features, this practical guide for JavaScript AI is useful because it focuses on product implementation patterns rather than model experimentation alone.

Go, Rust, and Scala show up around the model, not just inside it

Go usually enters AI programs through platform engineering. It is a good fit for APIs, orchestration services, model gateways, job runners, and infrastructure tooling that needs to stay easy to operate with a midsize team. That matters for cost. A language that keeps services readable and operations predictable often saves more money than a small runtime gain in model code.

Rust earns attention when memory safety, latency discipline, or constrained environments matter enough to justify a steeper learning curve. Teams use it for serving components, streaming paths, and security-sensitive infrastructure where production failure is expensive. The trade-off is hiring. Rust talent is harder to find, and onboarding takes longer than with Go or Python.

Scala still appears in AI programs with large data estates on the JVM. If your company already runs Spark-heavy pipelines or enterprise data platforms, it can be the practical bridge between existing infrastructure and newer ML systems. This overview of Scala, Hadoop, and Spark for distributed data platforms is useful context for teams deciding whether to modernize around the JVM or move more of the stack elsewhere.

Emerging languages matter if they lower maintenance cost

Newer or niche languages should be evaluated with more discipline than enthusiasm. The question is not whether they are interesting. The question is whether they reduce a specific business constraint enough to offset narrower hiring markets, fewer libraries, and more custom platform work.

AI-assisted coding changes that calculation somewhat. As noted earlier, some languages perform better than others with code generation and refactoring tools. That can improve team throughput, especially in smaller engineering organizations. It still does not erase the basics. You need maintainers, production tooling, and a realistic recruiting plan.

A practical read on specialized languages looks like this:

- R fits research-heavy and analytics-heavy work where statistical depth matters more than production standardization.

- JavaScript fits user-facing AI features, especially when browser delivery shortens time to market.

- Go fits platform and MLOps components where operational simplicity affects team cost.

- Rust fits high-assurance systems where safety and performance justify a tougher hiring profile.

- Scala fits organizations with existing Spark or JVM data infrastructure that want to modernize without replacing everything at once.

A specialized language is a good choice when it removes a clear bottleneck in delivery, operations, or staffing. If it only adds novelty, it adds cost.

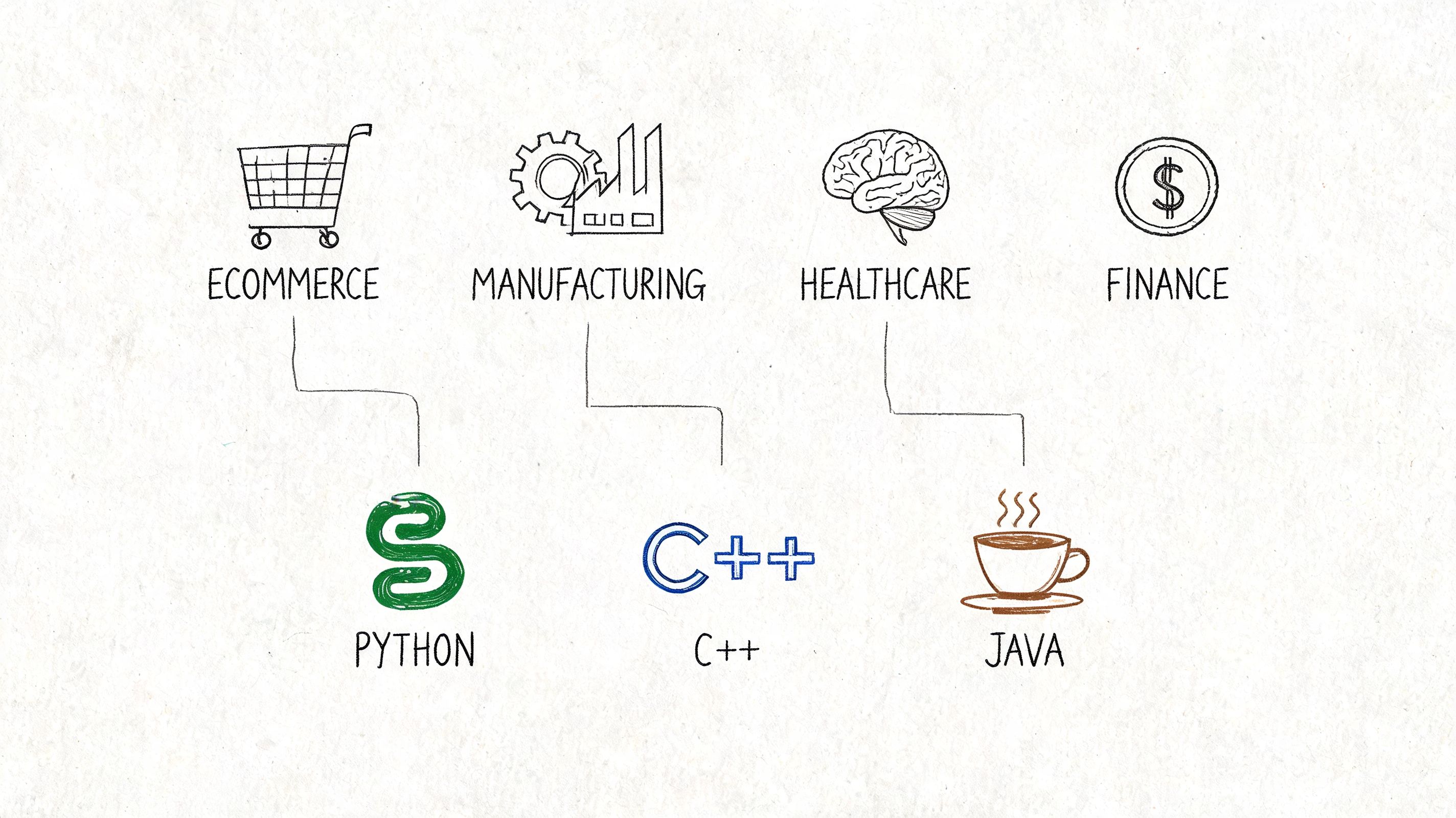

Industry-Specific AI Language Recommendations

A retail app, a fraud platform, and a healthcare portal don't need the same stack. Industry context changes the answer fast.

Ecommerce and retail

For ecommerce, a Python-first approach is usually the best starting point. Personalization, recommendation models, search tuning, catalog enrichment, and anomaly detection all benefit from Python’s model ecosystem and fast iteration loop.

For production serving, many teams benefit from a mixed architecture. Python handles model work. A faster serving layer or an established backend stack handles customer-facing delivery where latency matters.

Fintech

Fintech should be more conservative. Real-time decisioning, security requirements, and legacy integration pressures often make Java or C++ the more pragmatic center of gravity for production systems.

Staffing and existing architecture matter. As discussed in Coding Temple’s review of AI engineer language choices, hiring market realities are critical, and for fintech organizations already operating on Java or .NET foundations, choosing Java to extend existing systems is often more practical than starting a greenfield Python project.

Healthcare and wellness

Healthcare usually needs two things at once: research-friendly AI development, and production systems that are auditable, stable, and compatible with strict operational processes.

That often leads to a split recommendation:

- Python for research, modeling, and analytics-heavy work

- Java for integration with existing systems and operational reliability

- C++ only where simulation or runtime performance clearly demands it

For legal and compliance-adjacent teams thinking about specialized AI workflows, this resource on comparing AI legal assistant tools is worth reviewing because it highlights the governance mindset that also applies in healthcare procurement.

Media, SaaS, government, and nonprofits

Media platforms often need high-throughput recommendation engines and content-heavy app experiences. A Python-plus-performance-runtime model works well there, especially when user-facing scale becomes a product issue rather than just a traffic issue.

SaaS platforms vary. Internal AI features can live comfortably in Python-backed services. Customer-facing, integrated workflow automation may fit better inside the company’s existing backend language.

Government agencies and nonprofits usually benefit from Python because the learning curve is gentler and staffing flexibility matters. Simpler onboarding, broad community support, and easier experimentation make it a practical modernization language when internal technical depth is uneven.

The best language in your industry is usually the one your team can hire for, integrate safely, and still maintain two years after launch.

Unify Your AI Stack with Smart Management Tooling

A team can pick the right language and still end up with an expensive AI product. I see this when a company ships a promising Python prototype, adds a Java service for integrations, then bolts on vendor APIs and internal data connectors. Six months later, nobody can explain which prompt version is live, why costs jumped, or who approved a change to model access.

The management layer most teams forget

The language decision matters. The operating model matters more once the product reaches real users.

A maintainable AI stack needs control around prompts, models, environments, and data access. Without that layer, every new feature adds coordination cost across engineering, product, security, and finance. That is where AI projects start to slow down, even when model quality is good.

In practice, the minimum tooling usually includes:

- Prompt versioning and audit history so teams can compare outputs, review changes, and roll back safely

- Parameter and access controls so model settings, internal tools, and database permissions stay consistent across environments

- Centralized logging so engineers can trace failures across application code, model calls, and downstream systems

- Cost tracking by feature or team so usage can be tied to business value instead of becoming a monthly surprise

These controls are language-agnostic. A Python-first ML team needs them. A Java platform team integrating AI into an existing product needs them. A mixed stack with performance-critical components needs them even more, because coordination overhead rises as the architecture gets more fragmented.
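To make the controls above concrete, here is a minimal in-memory sketch of prompt versioning with rollback, structured logging, and cost tracking by feature. It is an illustration under assumptions, not a description of any particular product: `PromptRegistry`, `UsageLedger`, and the per-1,000-token price parameter are hypothetical, and access controls are left out for brevity.

```python
import json
import logging
from collections import defaultdict


class PromptRegistry:
    """Keeps every prompt version so the live one can be audited and rolled back."""

    def __init__(self) -> None:
        self._versions: dict[str, list[str]] = {}
        self._live: dict[str, int] = {}

    def publish(self, name: str, text: str) -> int:
        versions = self._versions.setdefault(name, [])
        versions.append(text)
        self._live[name] = len(versions)  # versions are numbered from 1
        return self._live[name]

    def rollback(self, name: str, version: int) -> None:
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise ValueError(f"unknown version {version} for prompt {name!r}")
        self._live[name] = version

    def live(self, name: str) -> tuple[int, str]:
        version = self._live[name]
        return version, self._versions[name][version - 1]


class UsageLedger:
    """Logs each model call as one JSON line and totals cost per feature."""

    def __init__(self, logger: logging.Logger) -> None:
        self._logger = logger
        self._cost_by_feature: defaultdict[str, float] = defaultdict(float)

    def record(self, feature: str, prompt_name: str, prompt_version: int,
               tokens: int, usd_per_1k_tokens: float) -> float:
        cost = tokens / 1000 * usd_per_1k_tokens
        self._cost_by_feature[feature] += cost
        # One structured line per call keeps logs searchable across services.
        self._logger.info(json.dumps({
            "feature": feature,
            "prompt": prompt_name,
            "prompt_version": prompt_version,
            "tokens": tokens,
            "cost_usd": cost,
        }))
        return cost

    def cost_by_feature(self) -> dict[str, float]:
        return dict(self._cost_by_feature)
```

A production version would persist versions and usage in a database and emit logs to a shared sink, but the shape stays the same: every model call records which prompt version ran, for which feature, and what it cost.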

Why this matters more as products scale

By the time an AI feature reaches production, the stack is rarely pure. The training workflow may live in Python, the application backend may stay in Java or TypeScript, and lower-level inference or serving components may rely on faster runtime layers under the hood. The primary architectural risk is not that one of those choices is wrong. It is that nobody owns the control plane across all of them.

That gap shows up in hiring and maintenance first. Specialists can build isolated pieces. Fewer teams can support the full system over time, document it clearly, and hand it off without creating key-person risk. Business leaders feel that as slower releases, harder audits, and rising vendor spend.

Environment management is part of that picture too. Teams that standardize packaging, dependencies, and onboarding reduce setup time and cut avoidable production drift. For organizations deciding how to support data scientists and application engineers in the same workflow, this guide on Anaconda vs Python for AI development environments is a useful reference.

A smart AI architecture assigns each language a clear job, then puts one management layer above the whole system. That is what keeps experimentation fast, operations predictable, and modernization costs under control.

If you're evaluating the best language for AI programming and want help turning that decision into a scalable product strategy, Wonderment Apps can help. Their team works with organizations modernizing web and mobile software with AI, and they’ve built an administrative system for prompt versioning, parameter management, unified logging, and cumulative cost tracking. If your challenge is bigger than picking Python, Java, or C++, and it usually is, they’re a strong partner to talk to.