You're probably in a familiar spot. The roadmap says AI-assisted search, better recommendations, smarter ops tooling, or faster back-office automation. Your team knows Python is the most practical path for a lot of that work. Then hiring reality shows up. Good Python engineers are expensive, the strongest candidates disappear fast, and your internal team still has to keep the current product stable.

That's why so many companies end up evaluating outsourcing Python development. The appeal isn't just lower cost. It's faster access to specialized capability in areas like Django, Flask, FastAPI, data pipelines, and AI integration. The hard part is that many first-time buyers treat outsourcing as a staffing problem when it's really an operating model decision.

Modern Python projects also carry a second layer of complexity that didn't exist a few years ago. If the outsourced team is building AI-powered features, you're not only shipping code. You're also managing prompts, model settings, provider changes, logs, and token spend. Teams that ignore that governance layer often get a working demo and a messy production system.

The Python Paradox and Your AI Ambition

Python sits in a strange sweet spot. It's approachable enough for rapid delivery and powerful enough to support serious products in ecommerce, fintech, healthcare, SaaS, and media. That's why leadership teams keep choosing it for modernization work, especially when AI features are part of the brief.

The economic pull is strong. According to this 2025 Python outsourcing guide, the global IT outsourcing market is projected to reach $541 billion by the end of 2025, and a senior Python developer in San Francisco can cost over $250,000 a year including overhead. The same guide says outsourcing can cut those costs by 40 to 70 percent and deliver 2.8x ROI within 18 months through faster development cycles.

Why Python gets outsourced so often

When teams outsource Python work well, they usually need one of three things:

- A specialist stack quickly. Think Django for internal platforms, FastAPI for service layers, Pandas and NumPy for analytics, or TensorFlow-adjacent work for applied AI.

- A focused delivery team. Internal engineers are often tied up on platform reliability, compliance reviews, and support obligations.

- A bridge to modernization. Legacy systems don't disappear because an executive says “let's use AI.” Somebody still has to connect new services to old databases, authentication layers, and brittle admin workflows.

That combination makes Python unusually well suited to outsourced delivery. It covers web apps, APIs, data engineering, automations, and AI-adjacent integrations without forcing a fragmented toolchain from day one.

Outsourcing Python development works best when you buy expertise, not just capacity.

Where leaders get blindsided

The first surprise is usually governance, not code quality. A vendor can build a recommendation engine, support chatbot, content workflow, or anomaly detection service that looks great in staging. Then production introduces prompt drift, unclear approval rights, undefined model fallbacks, and no reliable way to see what the AI layer is doing.

For AI-enabled Python systems, the administration layer matters as much as the application layer. Teams need a practical way to handle prompt versioning, parameter control, centralized logging, and cost visibility. Without that, every release turns into archaeology.
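To show what that layer can look like in code, here is a minimal sketch, assuming an in-house registry rather than any particular product. `PromptVersion`, `PromptRegistry`, `log_model_call`, the model name, and the per-token price are all hypothetical. Every prompt or parameter change becomes a new numbered version, rollback is a single `activate` call, and each model call emits one structured log line with an estimated cost.

```python
import json
import logging
from dataclasses import dataclass

# Hypothetical governed prompt layer: names, fields, and prices are
# illustrative assumptions, not a real library or product API.
logging.basicConfig(level=logging.INFO, format="%(message)s")
log = logging.getLogger("ai_layer")


@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: int
    template: str
    model: str          # model this version was reviewed and approved with
    temperature: float  # parameter changes ship as a new version
    max_tokens: int


class PromptRegistry:
    """Keeps every published version plus the active one, so rollback is one call."""

    def __init__(self) -> None:
        self._history: dict[str, list[PromptVersion]] = {}
        self._active: dict[str, int] = {}

    def publish(self, prompt: PromptVersion) -> None:
        versions = self._history.setdefault(prompt.name, [])
        if versions and prompt.version <= versions[-1].version:
            raise ValueError("a new version must have a higher version number")
        versions.append(prompt)

    def activate(self, name: str, version: int) -> None:
        if not any(p.version == version for p in self._history.get(name, [])):
            raise KeyError(f"{name} v{version} was never published")
        self._active[name] = version

    def active(self, name: str) -> PromptVersion:
        version = self._active[name]
        return next(p for p in self._history[name] if p.version == version)


def log_model_call(prompt: PromptVersion, feature: str, tokens: int,
                   usd_per_1k_tokens: float) -> float:
    """Write one JSON log line per model call and return its estimated cost."""
    cost = tokens / 1000 * usd_per_1k_tokens
    log.info(json.dumps({
        "feature": feature,
        "prompt": prompt.name,
        "prompt_version": prompt.version,
        "model": prompt.model,
        "temperature": prompt.temperature,
        "tokens": tokens,
        "est_cost_usd": round(cost, 6),
    }))
    return cost


registry = PromptRegistry()
registry.publish(PromptVersion("support_reply", 1, "Answer politely: {question}", "example-model", 0.3, 300))
registry.publish(PromptVersion("support_reply", 2, "Answer briefly and cite the policy: {question}", "example-model", 0.1, 200))
registry.activate("support_reply", 2)
current = registry.active("support_reply")
log_model_call(current, feature="support_assistant", tokens=1500, usd_per_1k_tokens=0.002)
registry.activate("support_reply", 1)  # rollback without a code change
```

In a real system the registry would sit in a database or configuration service behind access control. The design point is that the prompt version travels with every log line, so "what changed?" always has an answer.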

A lot of first-time buyers also underestimate decision latency. If your outsourced team has to wait days for product answers, missing credentials, or compliance clarification, the promised speed advantage disappears. Python isn't the bottleneck in those cases. Operating discipline is.

Laying the Foundation for Outsourcing Success

Most failed outsourcing efforts don't start with bad code. They start with vague intent. “We need a Python team” isn't a plan. It's a purchasing impulse.

Before you talk to vendors, define what the project must achieve for the business and what constraints can't be negotiated away. This matters even more in regulated environments. A 2025 Clutch survey found that 42% of outsourcing failures in regulated industries stemmed from data breaches or non-compliance, and many teams still skip vendor checks for ISO 27001 certification or SOC 2 audits, as noted in this analysis of Python outsourcing risk.

Start with outcomes, not features

A strong internal brief answers a few blunt questions:

What business problem are you solving?

“Improve personalization” is too fuzzy. “Reduce manual support load with AI-assisted workflows” is much better. So is “replace a fragile internal reporting tool with a Python service layer and clean admin UI.”

What must exist at launch?

Separate launch requirements from future ideas. If you don't, vendors will price ambition, not reality.

What does success look like operationally?

Decide who owns uptime, support escalation, model/provider changes, and post-launch enhancements.

A clean way to pressure-test your thinking is to compare whether you need true outsourcing or a more embedded team model. If you're still sorting that out, Wonderment's breakdown of outstaffing vs outsourcing strategy is a useful framing tool.

Document the system you actually have

Many buyers hand vendors a future-state wish list and almost nothing about the current stack. That creates avoidable confusion.

Capture these items before vendor conversations begin:

- Architecture reality. Current services, core dependencies, auth flow, data stores, admin tools, and brittle integrations.

- Operational constraints. Release windows, audit requirements, incident process, approval gates, procurement limits.

- Security boundaries. What data can leave which environments, who can access production, and how secrets are managed.

- AI boundaries. Allowed model providers, retention rules, prompt review process, and logging expectations.

Regulated teams need a harder filter

If you work in healthcare, fintech, ecommerce payments, or any environment with customer-sensitive data, vendor selection starts with trust architecture. Don't bolt it on later.

Use this pre-qualification checklist:

| Area | What to verify |
|---|---|
| Compliance posture | Experience working with HIPAA, PCI-DSS, GDPR, or your equivalent obligations |
| Security controls | ISO 27001, SOC 2, secure SDLC practices, access control discipline |
| Data handling | Clear rules for storage, masking, test data, and logging |
| AI governance | Approval process for prompts, provider use, audit visibility, fallback behavior |
| IP protection | Contract language on code ownership, repositories, credentials, and artifacts |

Practical rule: If a vendor answers compliance questions with general reassurance instead of process detail, keep looking.

Write the brief your future self will thank you for

A good internal brief is short enough to read and detailed enough to expose risk. It usually includes business goals, technical scope, integration dependencies, security expectations, compliance obligations, internal stakeholders, and decision rights.

That document won't make the project easy. It will make it legible. That's a big difference.

Choosing Your Vendor and Engagement Model

The wrong engagement model can sink a good project before the first sprint review. Companies often blame the vendor when the actual issue is a mismatch between the work and the commercial structure.

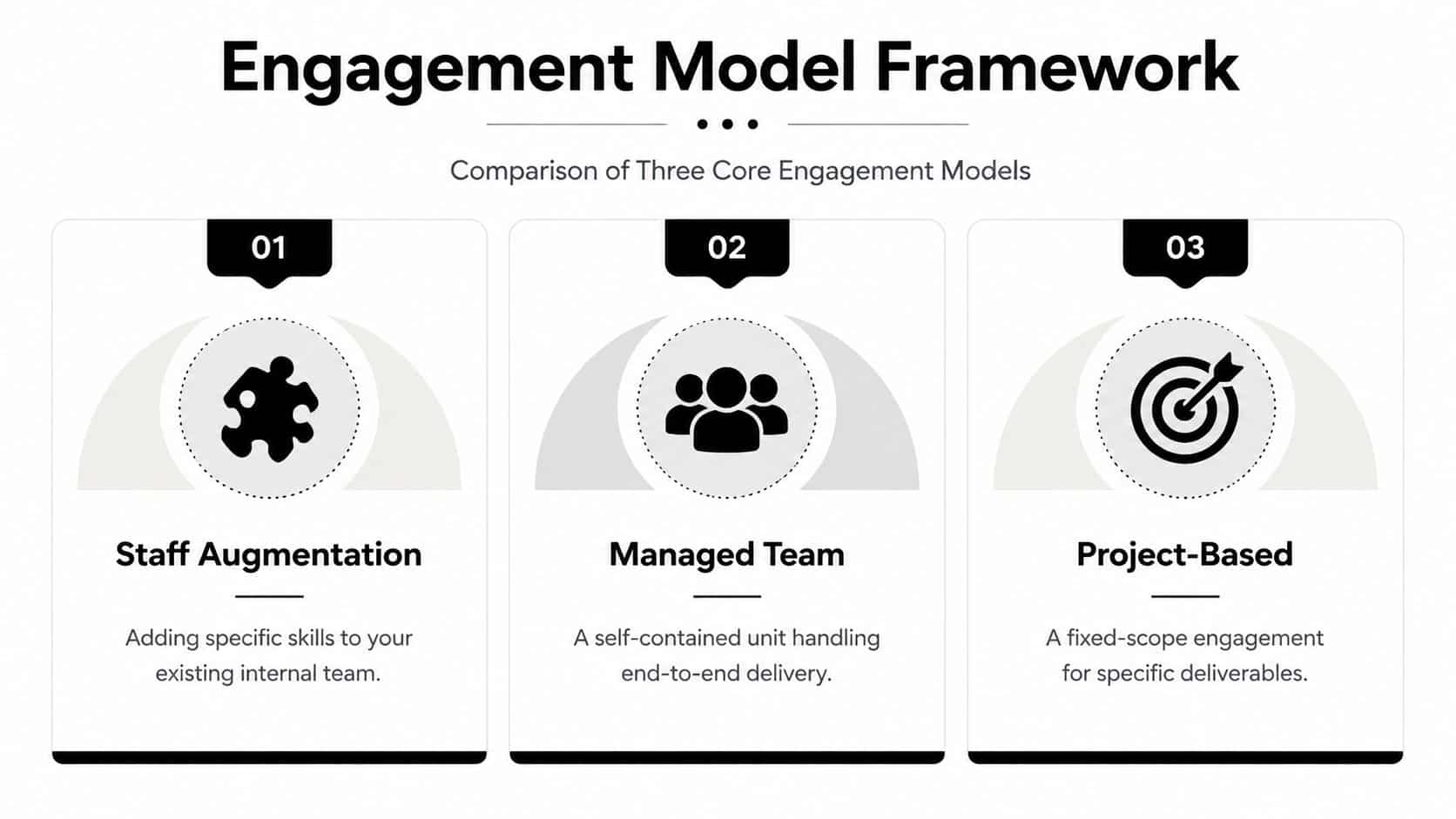

Three common models and when they fit

| Model | Best for | Strength | Watch-out |
|---|---|---|---|
| Staff augmentation | Teams with strong internal product and engineering leadership | High control | You still manage day-to-day delivery |
| Managed team | Companies that want a dedicated pod with clearer delivery ownership | Better autonomy | Requires strong governance and interfaces |
| Project-based | Well-defined work with stable scope | Easier procurement | Scope drift gets expensive fast |

If your roadmap is active and your internal team can lead architecture, staff augmentation can work well. If you need a self-contained delivery unit with PM, QA, and engineering working in sync, managed teams are usually healthier. Project-based work can succeed, but only when the scope boundaries are stable.

For companies evaluating regional talent pools, Hire Latin American Developers can be a practical reference point for nearshore sourcing, especially when you want timezone overlap without building a full recruiting motion from scratch.

How to vet Python depth

Portfolio screenshots won't tell you whether a team can build a durable Python system. Ask technical questions that expose engineering judgment.

A key issue is Python performance under load. In standard CPython, the Global Interpreter Lock (GIL) lets only one thread at a time execute Python bytecode, so CPU-bound multithreaded code cannot run in parallel across cores. According to this engineering-focused review of outsourced Python delivery, experienced teams respond by profiling with cProfile, rewriting hotspots in Cython for up to 100x speedups, and using multiprocessing to scale CPU-bound workloads. The same review notes that agile outsourcing processes yield 28% higher project success rates.
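The profile-first, parallelize-second habit can be sketched with the standard library alone. The `score` function is a hypothetical CPU-bound stand-in for a real hotspot, and the worker count is an arbitrary assumption. The sketch uses `cProfile` and `pstats` to find where time goes, then `multiprocessing.Pool` to spread the same work across processes, each with its own interpreter and GIL. It does not cover the Cython path.

```python
import cProfile
import io
import pstats
from multiprocessing import Pool


def score(n: int) -> int:
    """Hypothetical CPU-bound hotspot: pure-Python arithmetic that holds the GIL."""
    total = 0
    for i in range(n):
        total += i * i % 7
    return total


def run_serial(jobs: list[int]) -> list[int]:
    return [score(n) for n in jobs]


def run_parallel(jobs: list[int], workers: int = 4) -> list[int]:
    # Each worker process has its own interpreter and GIL,
    # so CPU-bound jobs can run on separate cores.
    with Pool(processes=workers) as pool:
        return pool.map(score, jobs)


def profile_top(func, *args, limit: int = 5) -> str:
    """Profile a single call and return the top entries by cumulative time."""
    profiler = cProfile.Profile()
    profiler.enable()
    func(*args)
    profiler.disable()
    buffer = io.StringIO()
    pstats.Stats(profiler, stream=buffer).sort_stats("cumulative").print_stats(limit)
    return buffer.getvalue()


if __name__ == "__main__":
    # The guard is needed when workers start via "spawn" or "forkserver".
    jobs = [200_000] * 8
    print(profile_top(run_serial, jobs))  # measure before optimizing
    print(run_parallel(jobs) == run_serial(jobs))  # same results, spread across processes
```

Processes trade memory and startup cost for true parallelism, which is why measuring first matters: if the profile shows time going to I/O rather than computation, threads or async code are usually the cheaper fix.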

Ask questions like these in technical interviews:

- Where do you expect Python to struggle in this architecture?

- How do you profile CPU-bound bottlenecks before optimization?

- When would you choose FastAPI over Django or Flask?

- How do you handle async jobs, queues, and retries?

- What's your approach to test isolation for external AI providers?

- How do you prevent prompt changes from breaking production behavior?

A strong vendor answers with trade-offs, not slogans.
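To make the retry, test-isolation, and prompt-stability questions concrete, here is one shape a strong answer might take, sketched under stated assumptions. Application code talks to the AI provider only through a small `CompletionProvider` interface, and real SDK clients would be wrapped behind it. `TransientProviderError`, `summarize_ticket`, `FakeProvider`, and the backoff numbers are hypothetical. Retries use exponential backoff with an injectable `sleep`, and the fake provider lets tests run without network calls or token spend.

```python
import time
from typing import Callable, Protocol


class TransientProviderError(Exception):
    """Hypothetical error for rate limits, timeouts, and similar retryable failures."""


class CompletionProvider(Protocol):
    """The only surface application code depends on (an assumed design, not an SDK API)."""

    def complete(self, prompt: str) -> str: ...


def with_retries(call: Callable[[], str], attempts: int = 3, base_delay: float = 0.5,
                 sleep: Callable[[float], None] = time.sleep) -> str:
    """Retry transient failures with exponential backoff: 0.5s, 1s, 2s, ..."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return call()
        except TransientProviderError:
            if attempt == attempts - 1:
                raise  # out of retries: surface the error to the caller
            sleep(base_delay * 2 ** attempt)
    raise RuntimeError("unreachable")


def summarize_ticket(provider: CompletionProvider, ticket: str,
                     sleep: Callable[[float], None] = time.sleep) -> str:
    prompt = f"Summarize this support ticket in one sentence: {ticket}"
    return with_retries(lambda: provider.complete(prompt), sleep=sleep)


class FakeProvider:
    """Test double: fails a set number of times, then returns a canned reply."""

    def __init__(self, failures: int, reply: str) -> None:
        self.failures = failures
        self.reply = reply
        self.prompts: list[str] = []  # every prompt sent, for golden-prompt checks

    def complete(self, prompt: str) -> str:
        self.prompts.append(prompt)
        if self.failures > 0:
            self.failures -= 1
            raise TransientProviderError("rate limited")
        return self.reply


fake = FakeProvider(failures=2, reply="Customer reports a damaged item.")
waits: list[float] = []
print(summarize_ticket(fake, "Box arrived crushed", sleep=waits.append))
print(waits)  # [0.5, 1.0]: two backoffs, no real waiting in tests
```

Pinning the exact prompt text in a test, for example by asserting on `fake.prompts[0]`, is one way to make a prompt edit show up as a deliberate, reviewed change rather than a silent shift in production behavior.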

What good answers sound like

Good partners don't say “Python scales fine” and stop there. They talk about profiling first, then choosing the least risky optimization path. They know when to use multiprocessing, when to separate workloads into services, and when to leave a part of the system outside Python entirely.

They also understand product realities. For example, a team building AI-enhanced ecommerce flows might use FastAPI for service endpoints, Celery or another queue pattern for background work, and a clear logging and approval layer around prompts and model calls. That answer signals systems thinking.

If you need a broader vendor-evaluation checklist, Wonderment's guide on how to pick the best offshore software development company is worth reviewing before you start finalist interviews.

A candid warning about demos

A slick prototype can hide weak fundamentals. Python makes it easy to produce convincing demos quickly. That's one reason teams love it. It's also why buyers need to ask how the same solution will be tested, monitored, secured, and maintained.

The question isn't “Can they build this?” It's “Can they keep it understandable when the product evolves?”

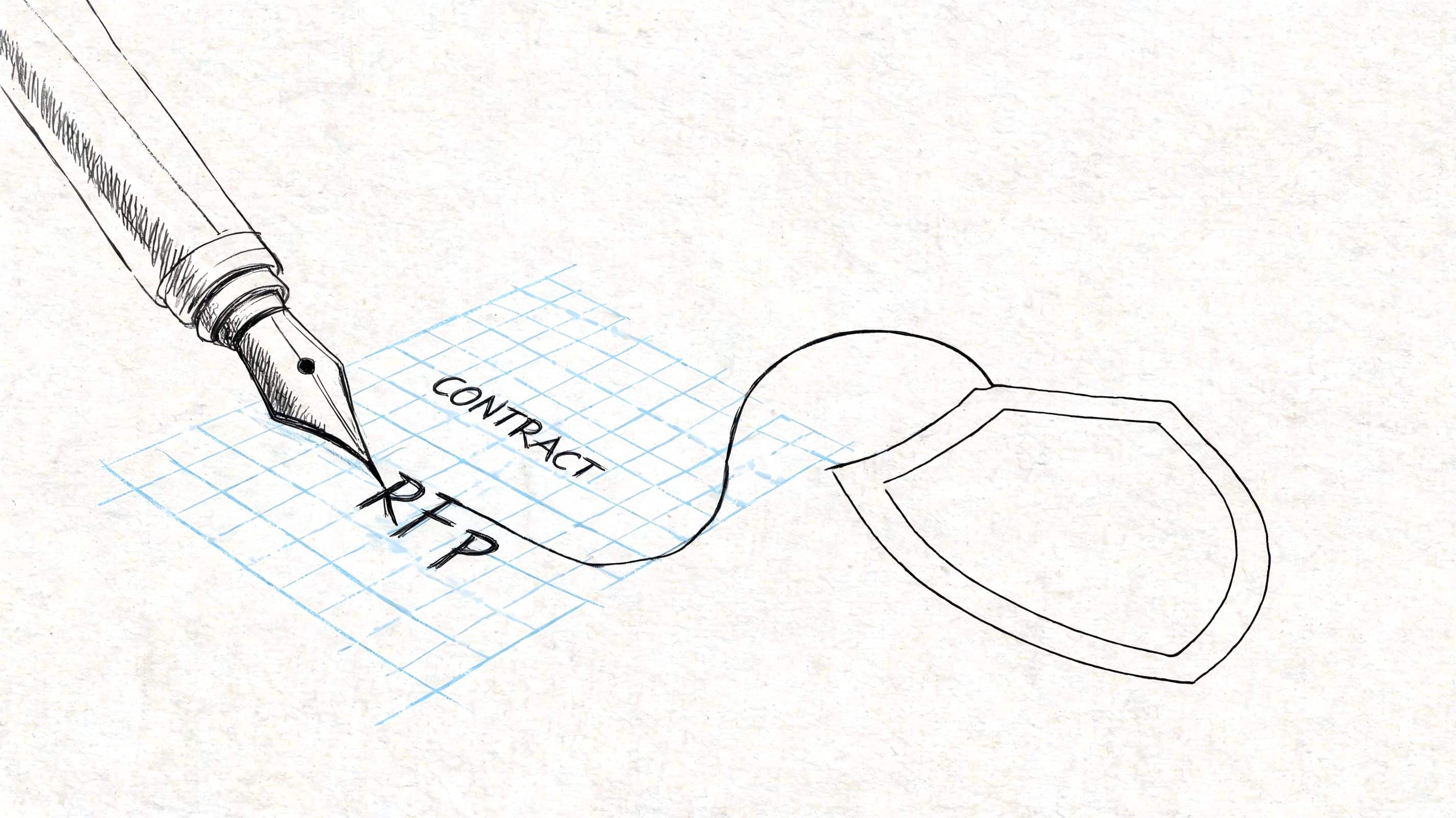

Crafting a Bulletproof RFP and Contract

An RFP should help you compare thinking, not collect polished sales copy. A contract should define how the relationship behaves when reality gets messy.

The biggest risk is scope drift dressed up as collaboration. Unclear scope and evolving requirements are responsible for 40% of outsourcing failures and cause delays in 70% of IT projects, according to a summary of the 2024 Statista survey and outsourcing pitfalls. That's why the contract and the RFP need to work together.

What to put in the RFP

A useful RFP gives vendors enough detail to respond concretely without writing the entire solution for them. Include:

- Business context. Why the project exists and what operational pain it should remove.

- Current environment. Existing systems, integrations, data concerns, and known technical constraints.

- Required capabilities. Python frameworks, API work, admin interfaces, AI integration, testing standards, cloud expectations.

- Non-negotiables. Security reviews, compliance requirements, code ownership, documentation, and support expectations.

- Response structure. Ask vendors to describe approach, team composition, delivery process, risk register, and assumptions.

If you want a practical companion resource for writing the underlying technical brief, Tekk.coach's guide is a solid reference for improving specification quality before the RFP goes out.

The contract clauses that save projects

Many first-time buyers get too casual at this stage. A statement of work isn't enough by itself.

Focus on these clauses:

Intellectual property

State clearly who owns code, prompts, documentation, configurations, test artifacts, and deployment assets.

Acceptance criteria

Define what “done” means for each milestone. Include testing expectations, documentation, and defect handling.

Security obligations

Spell out access control, incident reporting, credential handling, data environments, and audit expectations.

Change control

Require written treatment of new requests. Clarify how scope changes are reviewed, estimated, approved, and scheduled.

Exit and transition

Include repository access, handoff expectations, knowledge transfer, and support during transition.

Practical rule: If your change process lives in Slack messages and good intentions, scope will drift and nobody will agree on why.

Ask vendors to show their judgment

The best RFP responses don't just repeat your language. They challenge assumptions, flag hidden dependencies, and identify risk. If a vendor says every requested feature fits neatly into the initial timeline with no caveats, that's not confidence. It's often a warning sign.

A useful proposal usually includes a few phrases buyers should welcome: “dependency,” “assumption,” “trade-off,” “validation,” and “phase.” Those words mean the team has done this before.

Mastering Onboarding and Project Governance

The risky moment in an outsourced Python project is not contract signature or first deployment. It is week two, when the vendor has repo access, the backlog is live, and nobody is fully sure who can approve an API change, a prompt update, or a production rollback.

That gap creates expensive confusion. Python teams move fast once they have context, but speed without operating rules produces rework, security exceptions, and AI features nobody wants to own in production.

Good onboarding turns an external team into a delivery unit that can make sound decisions without constant supervision. That means giving them more than credentials and tickets. They need business goals, architecture constraints, release habits, compliance boundaries, service-level expectations, and a clear map of who decides what.

I like to treat the first two weeks as governance setup with code delivery attached, not the other way around.

Set the operating rhythm early

A working cadence should surface uncertainty before it becomes delay. The point is not ceremony. The point is fast escalation, traceable decisions, and fewer private interpretations of scope.

A practical pattern looks like this:

- Daily standups for blockers, handoffs, and dependency calls

- Weekly delivery reviews for status, risks, staffing changes, and pending product decisions

- Bi-weekly demos against working software in a real environment

- Architecture checkpoints for integration choices, performance concerns, and security review

- Release readiness reviews for test evidence, rollback plans, and production approvals

Industry research from Deloitte found that organizations use outsourcing heavily for IT and software work, and successful partnerships increasingly treat providers as part of the operating model rather than as isolated contractors, as outlined in Deloitte's Global Outsourcing Survey.

That shift matters more with Python and AI work. A vendor can build features quickly. The harder part is making sure those features can be changed safely six months later by your team, their team, or a replacement team.

Govern the AI layer like application code

AI modernization should be built into onboarding from day one. If you bolt it on later, you inherit a second system of undocumented behavior sitting beside your Python codebase.

Prompt text changes outputs. Retrieval rules change relevance. Model selection changes latency, cost, and privacy exposure. Temperature and token settings change user experience. If those decisions live in chat threads or engineer memory, your outsourced team can ship something that works in a demo and becomes unstable in operations.

Set clear ownership for:

- Prompt versioning tied to releases and change approval

- Model and parameter controls by environment

- Logging and traceability for prompts, outputs, failures, and human overrides

- Cost monitoring at feature level, not only at monthly invoice level

- Fallback behavior when a model degrades, rate-limits, or returns unusable output
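Several of those controls can live in one thin wrapper around model calls. This is a minimal sketch, assuming hypothetical model names, prices, and a pluggable `call` function standing in for a real provider SDK. Settings differ per environment, a fallback chain handles outages and empty output, every call is charged to the feature that made it, and a safe default message is returned when every model fails.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ModelSetting:
    model: str                # placeholder names, not real provider models
    temperature: float
    usd_per_1k_tokens: float  # illustrative prices


# Hypothetical per-environment fallback chains: primary first, then backup.
ENVIRONMENTS = {
    "staging": [ModelSetting("primary-model", 0.7, 0.010),
                ModelSetting("backup-model", 0.7, 0.002)],
    "production": [ModelSetting("primary-model", 0.2, 0.010),
                   ModelSetting("backup-model", 0.2, 0.002)],
}

SAFE_DEFAULT = "This feature is temporarily unavailable. Please try again shortly."


class ModelUnavailable(Exception):
    """Hypothetical error raised by the call function on outages or rate limits."""


# A call function takes (setting, prompt) and returns (text, tokens_used).
CallFn = Callable[[ModelSetting, str], tuple[str, int]]


class GovernedClient:
    def __init__(self, env: str, call: CallFn) -> None:
        self.chain = ENVIRONMENTS[env]
        self.call = call
        self.cost_by_feature: defaultdict[str, float] = defaultdict(float)
        self.fallback_events: list[str] = []

    def run(self, feature: str, prompt: str) -> str:
        for setting in self.chain:
            try:
                text, tokens = self.call(setting, prompt)
            except ModelUnavailable:
                self.fallback_events.append(f"{feature}: {setting.model} unavailable")
                continue
            # Tokens were spent even if the output turns out to be unusable.
            self.cost_by_feature[feature] += tokens / 1000 * setting.usd_per_1k_tokens
            if text.strip():
                return text
            self.fallback_events.append(f"{feature}: {setting.model} returned empty output")
        return SAFE_DEFAULT


def flaky_call(setting: ModelSetting, prompt: str) -> tuple[str, int]:
    """Stand-in for a provider SDK: the primary model is rate-limited."""
    if setting.model == "primary-model":
        raise ModelUnavailable("rate limited")
    return f"[{setting.model}] summary of: {prompt}", 500


client = GovernedClient("production", flaky_call)
print(client.run("search_summary", "wireless headphones under $100"))
print(client.fallback_events)
print(dict(client.cost_by_feature))
```

Keeping the fallback events and per-feature costs on the client makes them easy to ship to whatever logging and billing dashboards the team already uses; the sketch deliberately leaves that export step out.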

This is the difference between outsourcing for labor savings and outsourcing to improve capability. Teams that govern the AI layer early get a codebase that can absorb model changes, policy changes, and vendor changes without a rewrite.

Documentation has to support decisions

Handoff problems usually start long before handoff. They start when nobody records why a queue was introduced, why a prompt was changed, why one service account has broader access, or why the team rejected a simpler integration path.

Document the decisions that future engineers will question. Keep architecture notes, release checklists, AI operating rules, support runbooks, and known failure modes in one maintained system. If your team is tightening that discipline, this knowledge base management guide for 2026 is a useful companion resource.

Distributed teams also need explicit working norms. Response windows, escalation paths, overlap hours, review expectations, and meeting ownership should be written down, not assumed. Wonderment's advice on best practices when working with offshore teams is worth sharing with both your internal leads and the vendor team.

What strong onboarding usually includes

The strongest outsourced teams ask for context that first-time buyers often forget to provide.

They want to know how incidents are handled, who can accept technical debt, which systems are politically sensitive, what audit trail the security team expects, and which AI use cases are allowed to touch customer data. Those answers shape design choices from the first sprint.

A useful onboarding package usually includes:

- System maps showing services, dependencies, and data flows

- Access rules for repositories, cloud environments, secrets, and production logs

- Delivery rules for branching, code review, testing, and release approval

- AI governance rules for prompt changes, model usage, logging, and human review

- Escalation paths for blockers, security questions, and scope conflicts

Your outsourced Python team should never have to guess who approves a schema change, a prompt edit, or an emergency patch.

That clarity is what future-proofs the engagement. It reduces avoidable churn now and preserves your freedom to scale, replace vendors, introduce new models, or bring work back in-house later without losing control of the system.

Measuring Outcomes and Long-Term Value

Six months after launch, the dashboard can look healthy while the engagement is failing. Tickets are closing. Features are shipping. Then your internal team tries to change a core workflow, swap an AI model, or trace a production incident, and nobody can do it without pulling the vendor back in. That is not long-term value. It is deferred dependence.

Measure the engagement by what your organization can now do safely, predictably, and at a reasonable cost. Speed still matters, but only as one input. The fundamental test is whether the outsourced Python team left behind a system your business can operate, extend, and govern.

Measure the outcomes you defined earlier

Return to the business case you approved at the start. Check whether the work improved reliability, reduced manual work, shortened release cycles, cleaned up brittle processes, or made AI features easier to control in production.

I usually review outcomes in three buckets:

| Bucket | Questions to ask |
|---|---|
| Delivery | Did the team ship useful increments on a predictable cadence? Did estimates get more accurate over time? |
| Operational | Can your team observe the system, support it, and change it without unnecessary risk? |
| Business | Did the software improve the process, margin, service level, or customer experience it was supposed to improve? |

This is also the point where weak outsourcing shows up clearly. Code can pass QA and still leave you with hidden coupling, unclear ownership, poor model controls, or a release process that only works when two specific vendor engineers are online.

Look beyond labor savings

Hourly rate comparisons are easy to put in a spreadsheet. They are rarely the full story.

The stronger return usually comes from better architecture decisions, fewer production incidents, cleaner automation, faster experimentation, and less rework across teams. In Python projects, that may show up as lower cloud spend after performance tuning, fewer failures in background jobs, simpler data pipelines, or an AI feature that can be updated through governed configuration instead of a risky code release.

That last point matters more than many first-time buyers expect. If your vendor helps you ship an AI-assisted workflow but leaves prompt changes, model version changes, logging, and human review outside your operating model, you have gained a demo and created an operations problem. If those controls are built in from day one, the project keeps paying back after launch because your team can adjust behavior without losing oversight.

Protect the gains after release

Post-launch value depends on what your team can run without guesswork. Before you close the initial phase, make sure you have:

- Architecture documentation that matches the deployed system, not an outdated proposal

- Runbooks for support, incident response, rollback, and release procedures

- Admin guidance for AI prompts, parameters, logs, and approval paths

- Knowledge transfer sessions recorded for future hires, not only for the current team

- A maintenance plan covering dependencies, model updates, security reviews, and ownership

A good outsourcing partner leaves behind more than working code. They leave behind operating clarity.

That is the difference between a low-cost build and a future-proofed Python platform. The best engagements reduce vendor lock-in, improve your internal decision-making, and give you room to expand AI capabilities under clear governance. If you later scale the partnership, switch vendors, or bring more work in-house, that structure protects the investment.

If you're planning an outsourced Python initiative and want a partner that understands both software delivery and AI modernization, Wonderment Apps is worth a look. Their team helps organizations design, build, and scale modern web and mobile products, and they've also developed an administrative toolkit for AI-enabled apps with prompt versioning, parameter management, unified logging, and token cost control. If your roadmap includes AI integration, it's a smart place to request a demo and see how governance can be built into the product from the start.