Growth is showing up in your pipeline. Your team has ideas. Customers want smoother experiences, faster support, better personalization, and products that work the same way on web and mobile.

But your systems keep saying no.

The catalog lives in one place, customer history in another, reporting in a third, and anything involving automation still depends on someone exporting a CSV on Friday afternoon. Then AI arrives and adds a new layer of promise and confusion. You don’t just need better software. You need a way to modernize the business around it, and you need control over how intelligence gets embedded, monitored, and paid for.

That’s where a digital transformation agency becomes useful. Not as a trendy label, and not as a code factory. A good one helps you rethink how your business works, how your data moves, how your users experience the product, and how newer capabilities like AI fit into a system that still has to be secure, reliable, and maintainable five years from now.

Your Business Is Changing But Your Tech Is Not

A familiar pattern shows up in growing companies.

Revenue opportunities expand, product lines multiply, teams add tools, and customer expectations rise. Meanwhile, the software underneath the business still behaves like it did years ago. It was built for a smaller company, a simpler catalog, a shorter sales cycle, or a world before AI-assisted workflows became practical.

What that looks like in real life

You see it when:

- Marketing wants personalization but customer data is scattered across disconnected systems.

- Operations wants efficiency but core tasks still depend on manual handoffs.

- Product teams want speed but every release touches brittle legacy code.

- Leaders want AI features but nobody has a clean foundation for prompts, data access, logging, or spend control.

In that environment, “build a new app” sounds like progress, but it often adds one more room onto a house with a cracked foundation.

A digital transformation agency starts from a different premise. The primary problem is often not the visible interface. It is the way systems, teams, and decisions connect behind the scenes.

Practical rule: If every improvement request turns into an integration problem, you don’t have a feature gap. You have a transformation problem.

Why the urgency is real

The scale of investment tells you this isn’t a niche concern. The global digital transformation market is projected to grow from USD 1,107.06 billion in 2025 to USD 1,864.94 billion by 2031, at a 9.1% CAGR, according to MarketsandMarkets’ digital transformation market outlook. That projection reflects pressure on organizations to adopt data-driven operations and advanced technologies such as AI.

That doesn’t mean every company needs a dramatic rewrite tomorrow. It does mean standing still gets expensive. Technical debt rarely announces itself with a siren. It shows up as slower launches, awkward workarounds, inconsistent reporting, and teams that are smart enough to know what to do but can’t get the system to cooperate.

Modernization is more than replacement

Sometimes leaders hear “transformation” and picture a giant rip-and-replace program. That’s one reason projects stall before they start.

In practice, strong modernization begins with selective moves:

- Untangle one painful workflow first.

- Create a clean integration layer.

- Replace fragile manual steps with automation.

- Design for future AI use instead of bolting it on later.

If you’re dealing with an aging platform, this guide on how to modernize legacy systems is a useful companion to the broader transformation conversation.

A business can tolerate old code for a surprisingly long time. It can’t tolerate systems that block growth forever.

What a Digital Transformation Agency Does

Many people hear “agency” and think branding, campaigns, or a redesign. That’s not what this is.

A digital transformation agency is closer to a combination of architect, builder, and systems planner. It helps you decide what should exist, what should change, what should connect, and what should be retired. Then it helps execute that plan in a way your team can operate.

Consider renovating a busy hotel

If you renovate a hotel, you don’t start by picking lamp shades.

You study guest flow, back-of-house operations, safety, booking systems, room turnover, staffing patterns, and revenue drivers. Then you redesign the property so it works better as a business, not just as a prettier building.

The same logic applies to software.

A transformation partner looks at:

- Business processes that are slow, duplicated, or error-prone

- Data flow between teams, tools, and customer touchpoints

- User journeys that create friction

- Platform constraints that make every change harder than it should be

- Governance and delivery so improvements don’t collapse after launch

That’s why this category sits apart from a typical dev shop. A dev shop may build what you ask for. A transformation partner helps you ask better questions first.

The primary job is alignment

The best work happens when technology choices map to business goals.

If your priority is faster quoting, the answer may involve workflow redesign and internal tooling. If your priority is retention, it may involve customer data unification and product experience changes. If your priority is AI-assisted operations, the challenge may be less about model selection and more about reliable data access, auditability, and operational controls.

A useful outside reference on how firms frame digital transformation consulting services can help clarify the distinction between high-level advice and end-to-end execution.

A strong partner doesn’t lead with a favorite stack. They lead with your bottlenecks.

What they do that internal teams often can’t

Internal teams know the business. That matters. But they are pulled into day-to-day delivery, support, and stakeholder pressure. It’s hard to redesign the plane while you’re flying it.

A transformation agency can add:

| Role in the engagement | What it means in practice |
|---|---|
| Strategic diagnosis | Identifying where systems and workflows block growth |
| Cross-functional planning | Aligning product, engineering, operations, and leadership |
| Execution capacity | Providing design, engineering, QA, and delivery management |
| Modern architecture guidance | Structuring systems for scale, integrations, and future AI use |
| User-centered design | Making sure the result works for customers and staff, not just for IT |

For a deeper strategic framing, this article on what is digital transformation strategy is worth reading.

The important point is simple. A digital transformation agency shouldn’t just deliver software. It should help your organization become better at change.

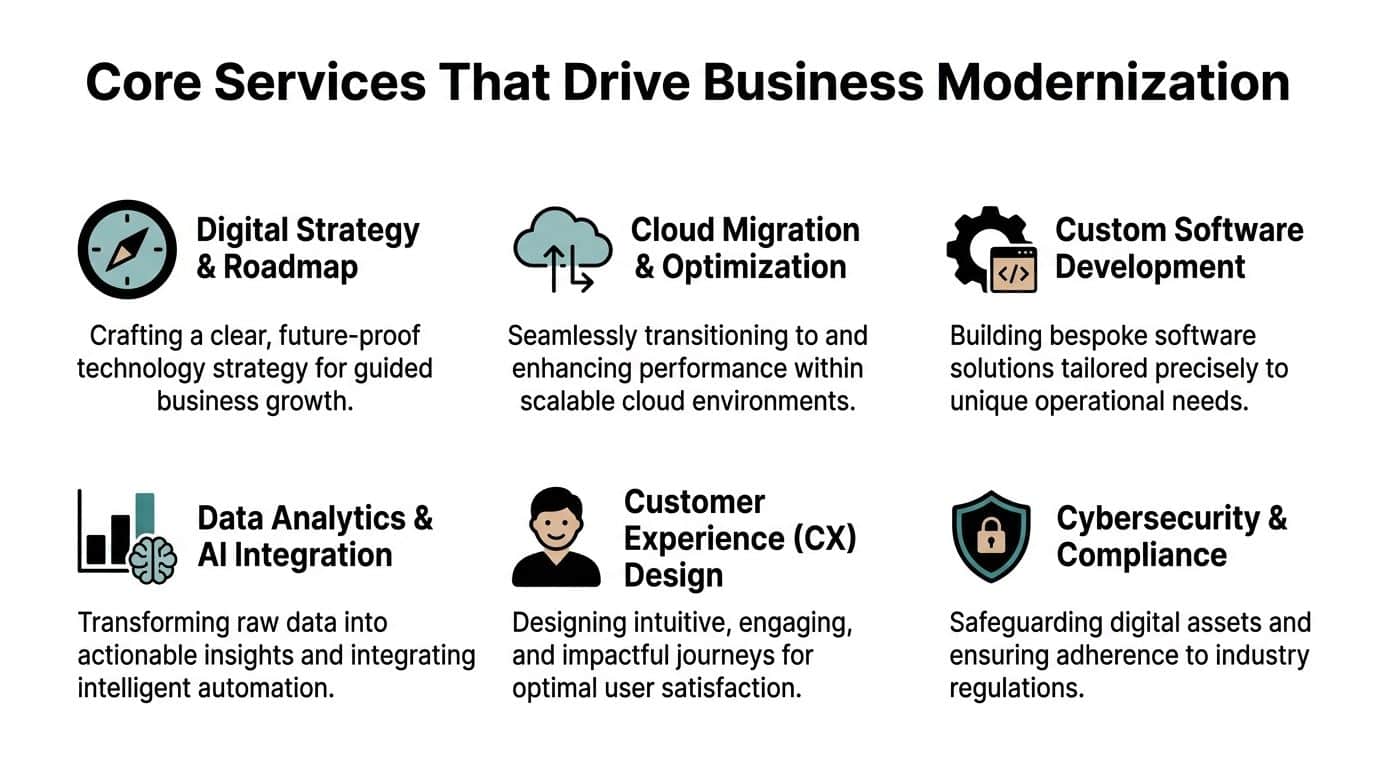

Core Services That Drive Business Modernization

A lot of companies ask for a new platform when the underlying need is a better operating model.

That distinction matters. Business modernization is not one project with one launch date. It is a set of connected services that remove friction from how the company sells, serves, decides, and scales. A strong digital transformation agency brings the specialists to do that work and the operating tools to keep complexity under control after launch. That includes a newer discipline many teams miss: managing AI usage, prompt quality, and model spend before they drift into a hidden cost center.

Digital strategy and roadmap

This is usually the service clients underestimate.

A roadmap is the blueprint before the renovation starts. If you skip it, teams replace visible systems while leaving core bottlenecks untouched. The result is expensive activity that changes less than expected.

A useful roadmap connects business goals to technical choices and answers questions such as:

- Which systems are slowing revenue, service, or delivery

- Which dependencies create release delays

- Which processes should be redesigned before they are automated

- Which requirements around security, compliance, and reporting need to shape the architecture from the start

Good strategy also helps leadership sequence the work. Some systems should be replaced. Some should be integrated. Some should stay in place until the surrounding process is stable enough to justify change.

Cloud migration and infrastructure modernization

Cloud work is often misunderstood as a hosting decision. It is really an operating decision.

Modern infrastructure gives teams a safer way to release changes, recover from failures, and scale during traffic spikes without treating every deployment like a high-risk event. It also creates the conditions for better analytics and for AI features that need reliable access to data, services, and compute.

A typical engagement includes environment design, deployment pipelines, performance tuning, resilience planning, and clearer separation between development, staging, and production. That sounds technical because it is. The business outcome is simpler to understand. Releases become more predictable, outages become less disruptive, and new product ideas become easier to test.

Data foundations and AI readiness

Many AI projects fail for the same reason a new dashboard fails. The underlying data is inconsistent, incomplete, or trapped in too many places.

A transformation agency fixes the plumbing before promising intelligence. That work can include event tracking, data models, analytics pipelines, internal APIs, governance rules, and access controls. Without those pieces, teams get prototypes that look impressive in a demo and disappoint in production.

This is also where the partner model starts to matter more. Building AI features is one challenge. Governing them is another. As companies move from a single chatbot to multiple AI-assisted workflows, they need a way to control prompts, monitor usage patterns, and manage cost by team, feature, or customer path. That is no longer a niche concern. It is becoming part of core modernization.

Key takeaway: AI value often follows disciplined data design, clear controls, and active cost management.

Custom software and app delivery

Strategy becomes operational here.

A digital transformation agency may build customer-facing products, internal tools, partner portals, mobile apps, or workflow systems. The point is not to write custom code for prestige. The point is to fit software to the way your business creates value.

Three qualities matter here:

Release speed

Teams need delivery practices that support frequent change without lowering quality.

Clear user experience

Frustrating software often reflects a messy process underneath it. Good product design reduces that burden for customers and staff.

Architecture that can grow

The first version should solve today’s problem without blocking future channels, integrations, or AI capabilities.

Integrations and modular architecture

Modern businesses rarely run on a single system. They run on a chain of systems that need to exchange data reliably.

That is why modular architecture matters. MIT Sloan describes the value of turning a company’s strongest capabilities into reusable digital services in its discussion of reusable “crown jewel” services. In practical terms, that means your pricing logic, fulfillment rules, identity layer, or recommendation engine should be usable across products and channels instead of buried inside one aging platform.

Modularity helps companies:

- Reduce rework across teams

- Launch new features without rewriting the whole platform

- Connect with third-party tools through APIs

- Prepare systems for automation and embedded AI

A simple analogy helps here. A modular system works like a set of well-labeled building blocks. A monolith works more like poured concrete. Both can hold weight, but only one is easy to reshape when the business changes.
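To make the building-block idea concrete, here is a minimal Python sketch of pricing logic exposed as one reusable service and consumed by two channels. Everything in it is hypothetical and chosen only for illustration: the `PricingService` class, the bulk-discount rule, the SKU, and the two channel functions do not describe any particular platform.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class PriceQuote:
    sku: str
    quantity: int
    total: Decimal


class PricingService:
    """Hypothetical shared pricing rules, owned in one place."""

    def __init__(
        self,
        unit_prices: dict[str, Decimal],
        bulk_threshold: int = 10,                   # assumed rule: discount at 10+ units
        bulk_discount: Decimal = Decimal("0.10"),   # assumed rule: 10% off
    ) -> None:
        self._unit_prices = unit_prices
        self._bulk_threshold = bulk_threshold
        self._bulk_discount = bulk_discount

    def quote(self, sku: str, quantity: int) -> PriceQuote:
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        subtotal = self._unit_prices[sku] * quantity
        if quantity >= self._bulk_threshold:
            subtotal -= subtotal * self._bulk_discount
        return PriceQuote(sku, quantity, subtotal.quantize(Decimal("0.01")))


# Two channels reuse the same rules instead of re-implementing them.
def web_checkout_total(pricing: PricingService, sku: str, quantity: int) -> str:
    return f"${pricing.quote(sku, quantity).total}"


def mobile_cart_payload(pricing: PricingService, sku: str, quantity: int) -> dict:
    quote = pricing.quote(sku, quantity)
    return {"sku": quote.sku, "qty": quote.quantity, "total": str(quote.total)}


if __name__ == "__main__":
    pricing = PricingService({"MUG-01": Decimal("12.50")})
    print(web_checkout_total(pricing, "MUG-01", 10))   # $112.50
    print(mobile_cart_payload(pricing, "MUG-01", 2))
```

If the discount rule changes, it changes in one class, and both channels pick it up. In a real system the service would more likely sit behind an API than in a shared module, but the ownership principle is the same.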

Observability, reliability, and managed support

A platform that launches well can still fail six months later if nobody can see what is happening inside it.

Observability covers logs, metrics, tracing, alerting, and incident response. Reliability practices make sure the service stays available as usage grows and changes are released. Managed support adds the human layer: people who own the health of the system after the build phase ends.

This matters even more once AI enters the stack. Teams need visibility into more than uptime. They need visibility into prompt performance, model failures, latency, token consumption, and cost behavior. If those controls are missing, an AI feature can become expensive or unreliable before leadership realizes there is a problem.
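As a rough illustration of that extra visibility, the Python sketch below wraps each model call and records latency, token counts, and failures per feature. It assumes the wrapped call returns a `(text, input_tokens, output_tokens)` tuple. That shape, along with the `AICallLog` and `AICallRecord` names, is an assumption made for this example, not any vendor's API.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional, Tuple


@dataclass
class AICallRecord:
    feature: str
    latency_ms: float
    input_tokens: int
    output_tokens: int
    ok: bool
    error: str = ""


@dataclass
class AICallLog:
    """Hypothetical in-memory log; a real system would ship records to a log store."""

    records: list = field(default_factory=list)

    def observe(
        self, feature: str, call: Callable[[], Tuple[str, int, int]]
    ) -> Optional[str]:
        """Run one model call and record how it behaved, even if it fails."""
        start = time.perf_counter()
        try:
            text, tokens_in, tokens_out = call()
        except Exception as exc:
            elapsed = (time.perf_counter() - start) * 1000
            self.records.append(
                AICallRecord(feature, elapsed, 0, 0, False, repr(exc))
            )
            return None
        elapsed = (time.perf_counter() - start) * 1000
        self.records.append(
            AICallRecord(feature, elapsed, tokens_in, tokens_out, True)
        )
        return text

    def failure_rate(self, feature: str) -> float:
        rows = [r for r in self.records if r.feature == feature]
        if not rows:
            return 0.0
        return sum(1 for r in rows if not r.ok) / len(rows)

    def total_tokens(self, feature: str) -> int:
        return sum(
            r.input_tokens + r.output_tokens
            for r in self.records
            if r.feature == feature
        )
```

With records like these, questions such as "which feature fails most often?" or "which one consumes the most tokens?" become simple queries instead of guesswork.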

A genuine transformation partner plans for operation, not just delivery. That includes the team structure, the engineering discipline, and the tooling needed to manage modern complexity over time.

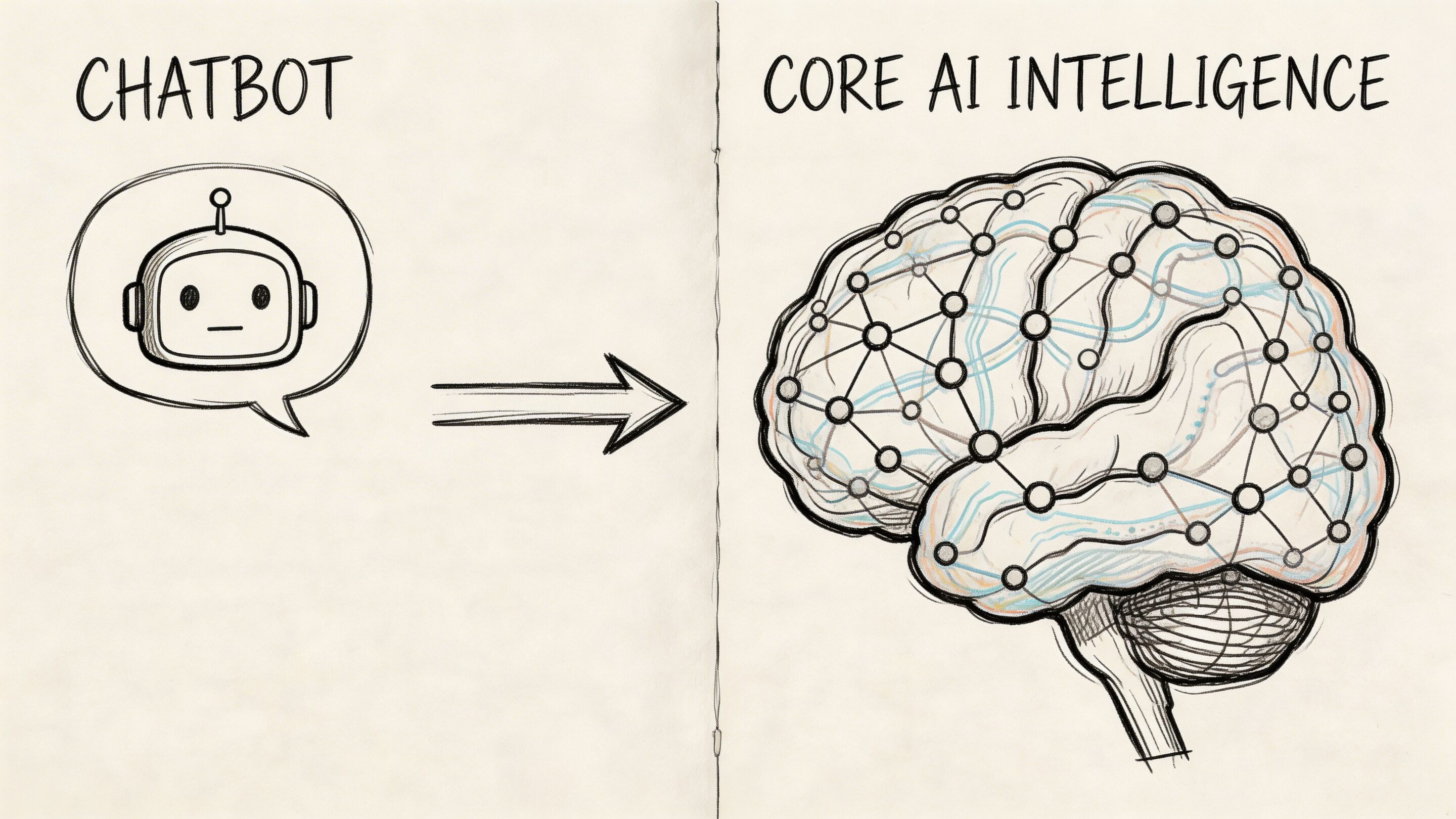

The AI Modernization Difference: From Chatbot to Core Intelligence

Many companies say they’ve “done AI” because they added a chatbot to the website.

That may be useful, but it’s not transformation.

AI modernization happens when intelligence gets embedded into the product, workflow, and operating model. The software starts helping teams make decisions, automate repetitive work, surface risk, personalize experiences, and handle complexity at a scale that manual processes can’t support.

Surface AI versus embedded AI

Here's a comparison:

| Type | What it does | Limitation |
|---|---|---|
| Surface AI | Adds a visible feature like chat, summarization, or basic search | Useful, but often disconnected from core operations |
| Embedded AI | Improves decision-making and execution inside the product or workflow | Requires better architecture, data, controls, and integration work |

An ecommerce brand might use embedded AI to tailor recommendations inside the buying journey. A fintech app might apply anomaly detection to flag suspicious behavior in real time. A healthcare workflow might use AI to help route scheduling, paperwork, or intake triage more intelligently.

In each case, the value comes from fitting AI into the work itself.

Why this moved to the top of the agenda

According to Coherent Solutions’ roundup of digital transformation trends, over 95% of firms are investing in AI solutions in 2025, and generative AI agents are reported to cut modernization timelines by 40-50%. The same roundup notes that 41% of companies report enhanced customer experiences with GenAI, and 40% report higher productivity.

Those numbers matter because they change the conversation from “should we experiment?” to “where should this sit in the operating model?”

Where leaders often get confused

The common misunderstanding is assuming AI is mainly a model-selection exercise.

It is often not.

The harder questions are things like:

- What data can the model access

- How are prompts managed and versioned

- What rules govern outputs

- How do you log model behavior

- How do you control token usage and spending

- What happens when the underlying workflow changes

If those questions don’t have clear answers, the AI feature may look clever in a demo and still become hard to trust in production.

AI modernization works best when teams treat prompts, model settings, logs, and cost controls as product infrastructure, not side notes.

Durable AI beats novelty AI

Business leaders don’t need AI that impresses people for two weeks. They need AI that holds up under policy changes, product updates, edge cases, compliance reviews, and budget scrutiny.

That’s why a strong digital transformation agency doesn’t stop at “we can plug in a model.” It designs the surrounding system so the intelligence is observable, governable, and adaptable.

A chatbot answers questions. Core intelligence changes how the business performs.

How to Choose Your Partner: A Practical RFP Checklist

A polished deck doesn’t tell you whether a partner can handle transformation.

The better test is how they think. Do they begin with user needs, operational constraints, and business goals, or do they jump straight to platforms, frameworks, and architecture diagrams?

That distinction matters because 70% of digital transformation initiatives fail to achieve their goals, as discussed in this analysis of digital transformation challenges. One recurring reason is the technology-first paradox, where organizations rush to deploy solutions before clarifying user needs and equity concerns.

Questions that reveal the truth

An RFP should do more than compare rates. It should expose how a partner makes decisions under uncertainty.

Ask questions like these:

- How do you define the problem before proposing a solution?

- What user research or discovery work do you require before build starts?

- How do you decide whether to modernize, integrate, or replace a legacy system?

- What does your architecture look like for apps that need future AI capabilities?

- How do you handle adoption, training, and post-launch support?

- What observability and reliability practices are included by default?

- Who will be on the team, and how involved are senior people?

A weak partner gets impatient with these questions. A strong one welcomes them.

Digital Transformation Agency Vetting Checklist

| Vetting Category | Key Question to Ask | What a Good Answer Sounds Like |
|---|---|---|
| Discovery process | How do you validate the problem? | “We start with stakeholder interviews, workflow review, user research, and system mapping before recommending architecture.” |
| Business alignment | How do you connect delivery to business outcomes? | “We define success in operational, customer, and product terms, not only technical milestones.” |
| Legacy modernization | How do you decide what stays and what goes? | “We assess risk, dependency, cost of change, and business criticality before recommending replacement.” |
| UX capability | How do you include end users in the process? | “We test assumptions with users early, especially where workflows are complex or high stakes.” |
| AI readiness | How do you design for future AI integration? | “We plan data flows, permissions, logging, prompt controls, and governance, not just model access.” |
| Engineering quality | How do you manage reliability after launch? | “We include monitoring, alerting, support ownership, and release practices that keep the system stable.” |
| Change management | What happens after deployment? | “We support rollout planning, training, handoff, and feedback loops so adoption doesn’t stall.” |
| Team structure | Who is doing the work? | “You’ll meet the delivery leads, and responsibilities are clear across product, design, engineering, and QA.” |

Red flags worth taking seriously

Some warning signs are subtle.

A partner may sound advanced while still being a poor fit if they:

Lead with a favorite technology

If the first answer is “you need Platform X,” they may be selling inventory, not solving your problem.

Skip user research

Teams that dismiss discovery tend to rebuild the same broken process in a newer interface.

Treat change management as your problem

Adoption isn’t a side quest. It’s part of the work.

Avoid discussing support

If nobody owns performance, logs, reliability, and iteration after launch, your team will inherit the mess.

“Tell me how you learn before you build” is often a more revealing question than “What stack do you use?”

What good proposals tend to include

Strong proposals usually feel concrete rather than theatrical.

Look for:

- A phased plan instead of one giant leap

- Named assumptions and risks instead of glossy certainty

- Specific team roles rather than generic “resources”

- A governance model for decisions, escalation, and reporting

- A view of success that includes users, operations, and maintainability

Choosing a digital transformation agency is less like hiring a contractor for one task and more like choosing a climbing partner for a difficult route. Skill matters. Trust matters more.

Engagement Models and Unpacking the Price Tag

Leaders often ask for “the cost” of digital transformation as if it were a single SKU.

It isn’t. The price depends on what you’re changing, how much uncertainty exists, how much of the team you need externally, and whether the work ends at launch or continues through optimization and support.

The three common engagement models

Fixed-scope project

This works best when the problem is well-defined.

A company may already know it needs a platform migration, a mobile app rebuild, or a specific internal system replacement. In those cases, a fixed scope can create clarity around deliverables, timeline, and ownership.

Best fit when:

- Requirements are relatively stable

- Dependencies are understood

- Success criteria are clear

Tradeoff: if major unknowns appear, the model can become rigid.

Retainer or managed services

This model suits ongoing evolution.

Transformation rarely ends with launch. Teams need support, reliability work, UX iteration, analytics improvements, and occasional architecture changes. A retainer gives continuity and keeps momentum alive after the first release.

Best fit when:

- The product will continue to evolve

- You need a standing team

- You want strategic oversight plus execution

Tradeoff: it requires a longer planning horizon and stronger internal prioritization.

Team augmentation

Sometimes the issue isn’t direction. It’s capacity.

If you already have product leadership and a roadmap but need extra React, .NET, Java, iOS, Android, QA, product, or UX capability, staff augmentation can make sense. This model plugs specialists into your team without handing over the whole initiative.

Best fit when:

- Your internal leadership is strong

- You need role-specific help

- You want control over day-to-day priorities

Tradeoff: coordination stays largely on your side.

For leaders comparing these options, this breakdown of staff augmentation vs managed services is a practical next read.

What drives cost

The main variables are usually:

| Cost driver | Why it matters |
|---|---|
| Project complexity | More systems, dependencies, and compliance needs create more planning and engineering effort |
| Team composition | Senior product, architecture, design, QA, and delivery roles add value and cost |
| Timeline pressure | Faster delivery means a larger team or more coordination overhead |
| Legacy constraints | Older platforms create unknowns, integration work, and testing burden |
| Ongoing support needs | Monitoring, reliability, updates, and optimization continue after launch |

If part of your initiative includes agentic workflows, budgeting gets more nuanced because AI work can involve model orchestration, prompt tuning, logging, and usage controls. This guide on the cost to build an AI agent is helpful as a supplementary budgeting reference.

A simple way to choose

Use fixed scope when the destination is clear.

Use managed services when you’re building a capability, not just shipping a deliverable.

Use augmentation when your plan is sound but your bench is thin.

The biggest pricing mistake isn’t choosing the most expensive option. It’s choosing the cheapest engagement model for a problem that is still poorly defined.

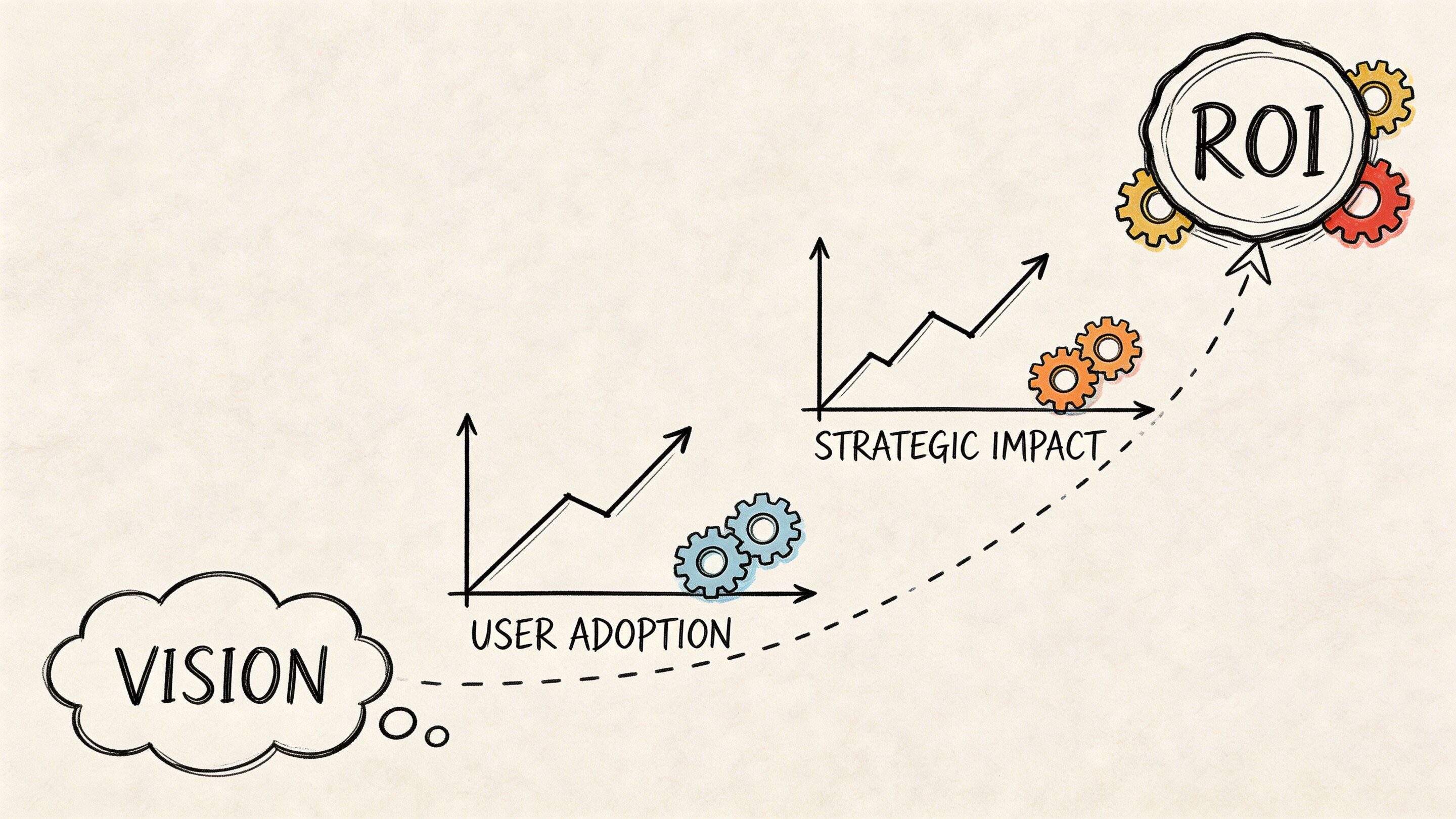

From Vision to ROI: Bringing Your Transformation to Life

A leadership team approves a transformation program. The new platform ships. The launch email goes out. Six months later, adoption is uneven, teams still rely on spreadsheets, AI usage costs are hard to explain, and every change request feels slower than expected.

That gap between launch and business value is where transformation efforts succeed or stall.

A useful way to judge results is to treat the release as the starting line, not the finish line. Software creates return when people change behavior, teams work faster with fewer errors, and the business gains a system it can improve without rebuilding from scratch.

What to measure besides launch

ROI gets clearer when leadership looks at outcomes that continue after go-live:

Adoption

Are customers, employees, or partners using the new workflow in daily work?

Operational efficiency

Has manual effort dropped? Have error-prone handoffs decreased? Are teams spending less time working around the system?

Product velocity

Can your team ship improvements faster, with fewer regressions and less coordination drag?

Customer impact

Is the experience easier to use, more consistent, and more relevant across channels?

Reliability

Does the system hold up under real demand, with clear visibility when something breaks?

Those outcomes usually depend on foundations that executives do not see in a demo. Clean integrations, dependable infrastructure, clear observability, sound security, and disciplined delivery practices determine whether a product gets easier to improve over time or turns into a patchwork of exceptions. As noted earlier, organizations that skip those foundations often struggle to modernize legacy systems and extend them for AI.

Where AI changes the ROI equation

AI changes the return model because it introduces a second layer of operations.

A normal product feature needs code, testing, analytics, and maintenance. An AI-enabled feature needs all of that, plus prompt control, model selection, data permissions, output review, logging, and spending oversight. The first version can look impressive in a sprint review. Running it responsibly in production is the harder assignment.

A practical comparison helps here. Traditional software behaves like a machine built from fixed parts. AI behaves more like a team member who needs instructions, guardrails, supervision, and a record of what changed. If you cannot track the instructions or the cost of each interaction, performance becomes difficult to improve and even harder to govern.

A mature AI-enabled product usually needs discipline in four areas:

Prompt versioning

Teams need a record of which prompt changed, who changed it, and how the output shifted after the change.

Controlled parameter access

AI features often rely on internal business data. Access rules should be explicit and auditable.

Unified logging

When responses are inconsistent, teams need one place to inspect prompts, parameters, outputs, and failures across integrations.

Cost visibility

Usage spend can rise unnoticed unless product and finance teams can monitor it at the feature level.
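Two of these areas, prompt versioning and cost visibility, are easy to picture in code. The Python sketch below is illustrative only: `PromptVault` and `SpendTracker` are hypothetical names, the flat price per 1,000 tokens is an assumed placeholder rather than a real rate, and this is not a description of how any specific product implements these features.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal


@dataclass(frozen=True)
class PromptVersion:
    version: int
    text: str
    author: str
    changed_at: datetime


class PromptVault:
    """Hypothetical append-only history: which prompt changed, who changed it, and when."""

    def __init__(self) -> None:
        self._history: dict[str, list[PromptVersion]] = {}

    def save(self, name: str, text: str, author: str) -> PromptVersion:
        versions = self._history.setdefault(name, [])
        entry = PromptVersion(
            len(versions) + 1, text, author, datetime.now(timezone.utc)
        )
        versions.append(entry)
        return entry

    def latest(self, name: str) -> PromptVersion:
        versions = self._history.get(name)
        if not versions:
            raise KeyError(f"no prompt saved under {name!r}")
        return versions[-1]

    def history(self, name: str) -> list[PromptVersion]:
        return list(self._history.get(name, []))


class SpendTracker:
    """Hypothetical feature-level cost roll-up using one assumed flat token price."""

    def __init__(self, price_per_1k_tokens: Decimal) -> None:
        self._price = price_per_1k_tokens
        self._tokens: dict[str, int] = defaultdict(int)

    def record(self, feature: str, tokens: int) -> None:
        self._tokens[feature] += tokens

    def spend_by_feature(self) -> dict[str, Decimal]:
        return {
            feature: Decimal(tokens) / 1000 * self._price
            for feature, tokens in self._tokens.items()
        }
```

Even a structure this small lets a team answer "what changed before the output shifted?" and "which feature is driving the bill?" Real pricing usually differs by model and by input versus output tokens, so a production tracker would need more than one rate.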

This is one of the clearest ways a strong digital transformation agency differs from a typical delivery vendor. The right partner does not just supply developers to ship AI features. It brings the operating model, governance, and tooling needed to keep those features useful, safe, and economically sound after release.

The long view matters

The true test is simple. After the project, is your business easier to change?

Your software should support the next product decision with less friction. Your data should be easier to use. Your internal teams should have better visibility into what the system and the AI layer are doing. A new channel, workflow, or AI capability should feel like an extension of the foundation, not a fresh round of chaos.

That is what genuine transformation delivers. It leaves you with stronger business mechanics, not just newer screens.

If you’re planning AI modernization and want tighter control over how it runs in production, Wonderment Apps can help. Alongside product strategy, engineering, and UX delivery, Wonderment has developed a prompt management system designed for teams integrating AI into existing apps and software. It includes a prompt vault with versioning, a parameter manager for internal database access, unified logging across integrated AI tools, and a cost manager that helps entrepreneurs track cumulative spend. If you’re serious about building software that lasts, request a demo and see how the operational side of AI can become far easier to manage.